John is square

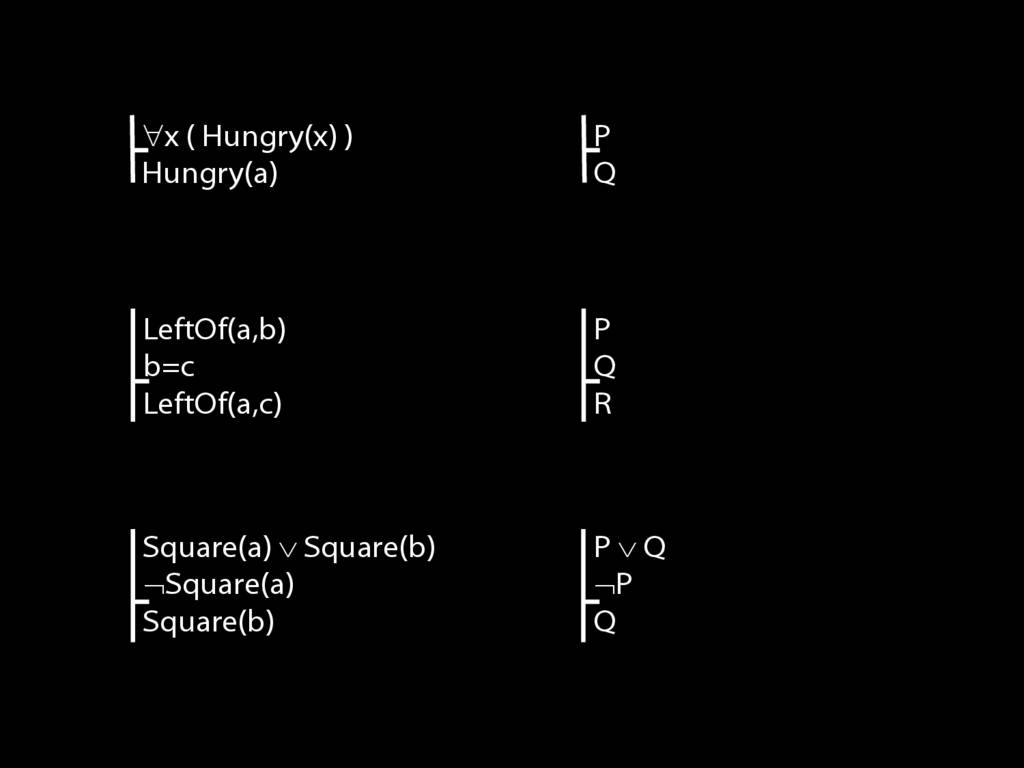

Square( a )

John is to the left of Ayesha

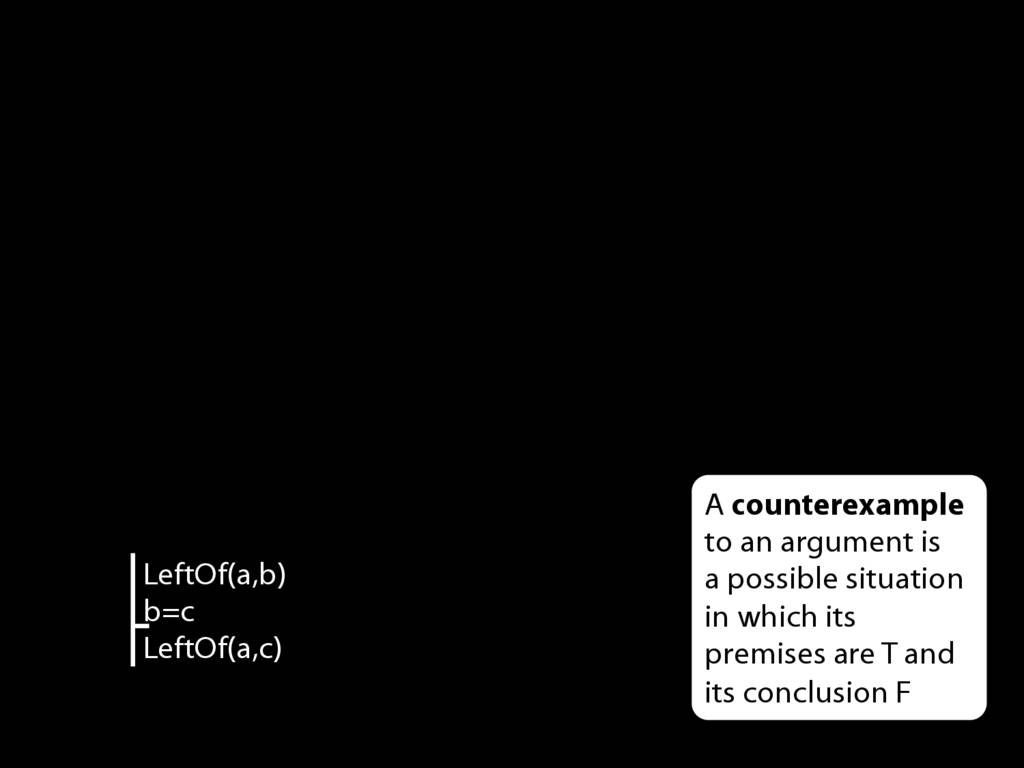

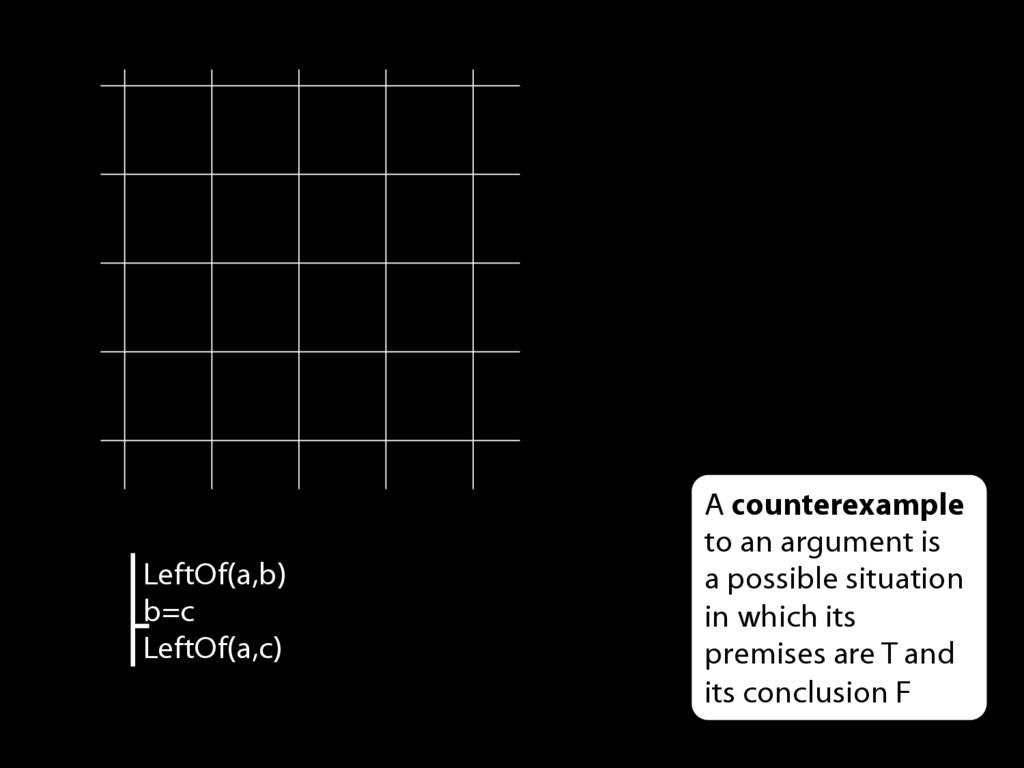

LeftOf( a , b )

John is square or Ayesha is trinagulra

Square( a ) ∨ Trinagulra( b )

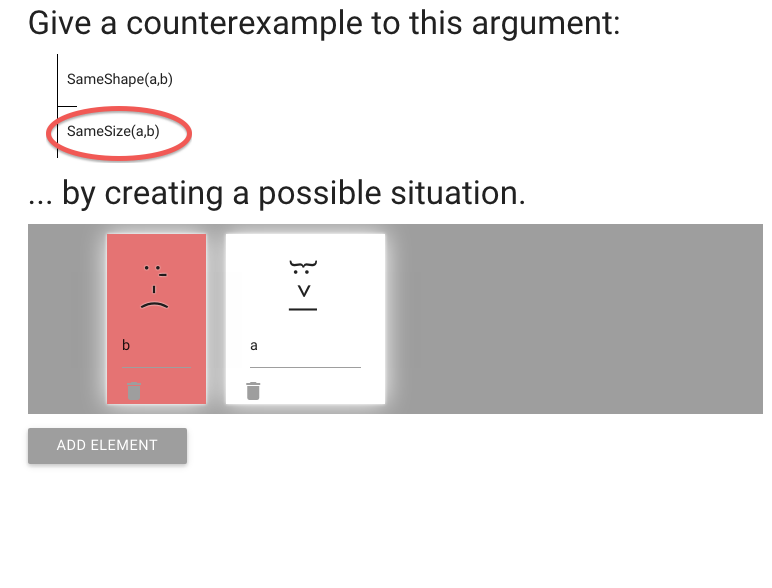

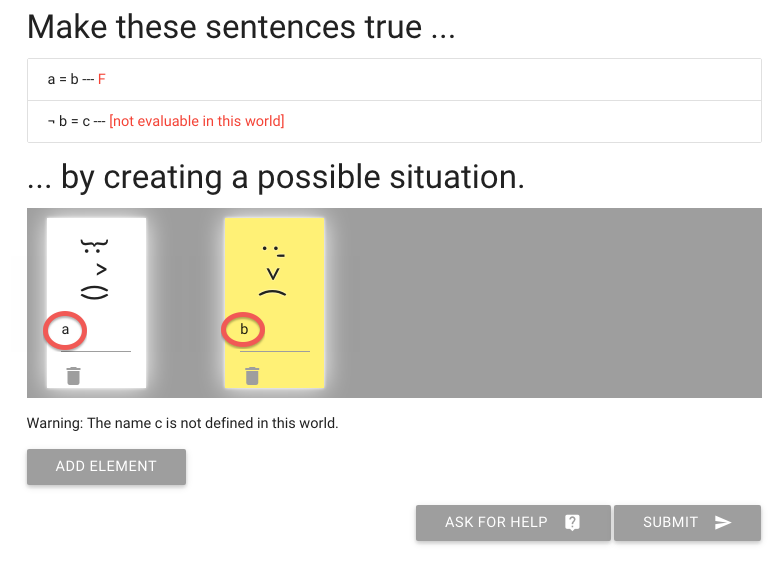

name (refers to an object)

predicate (refers to a property)

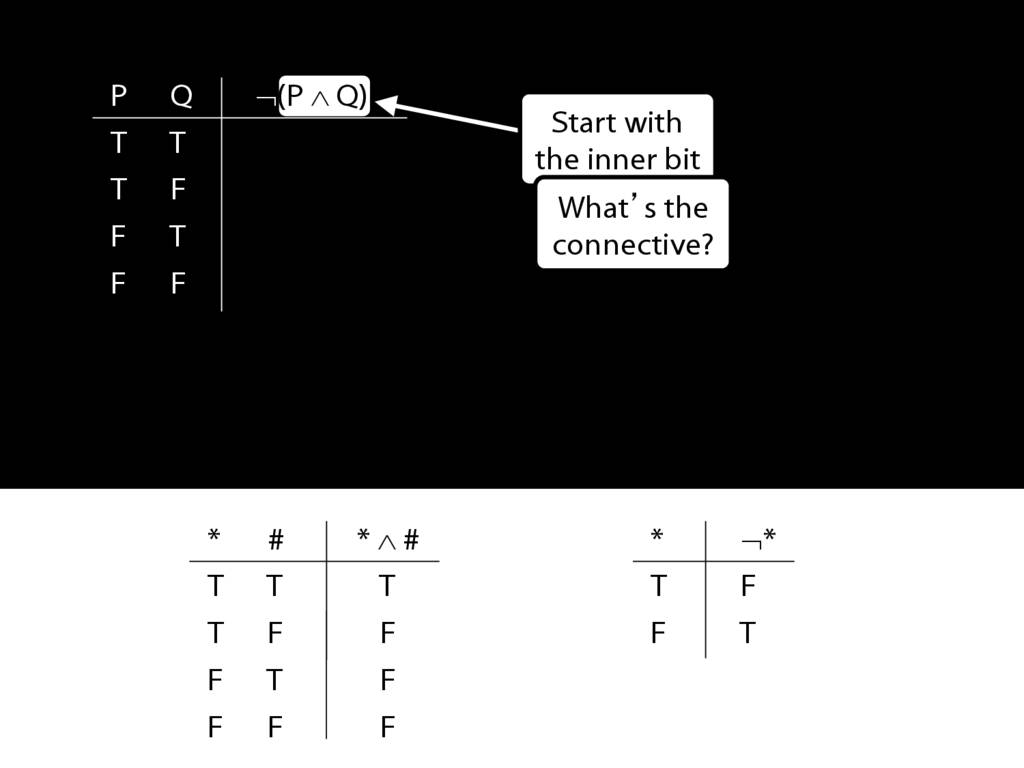

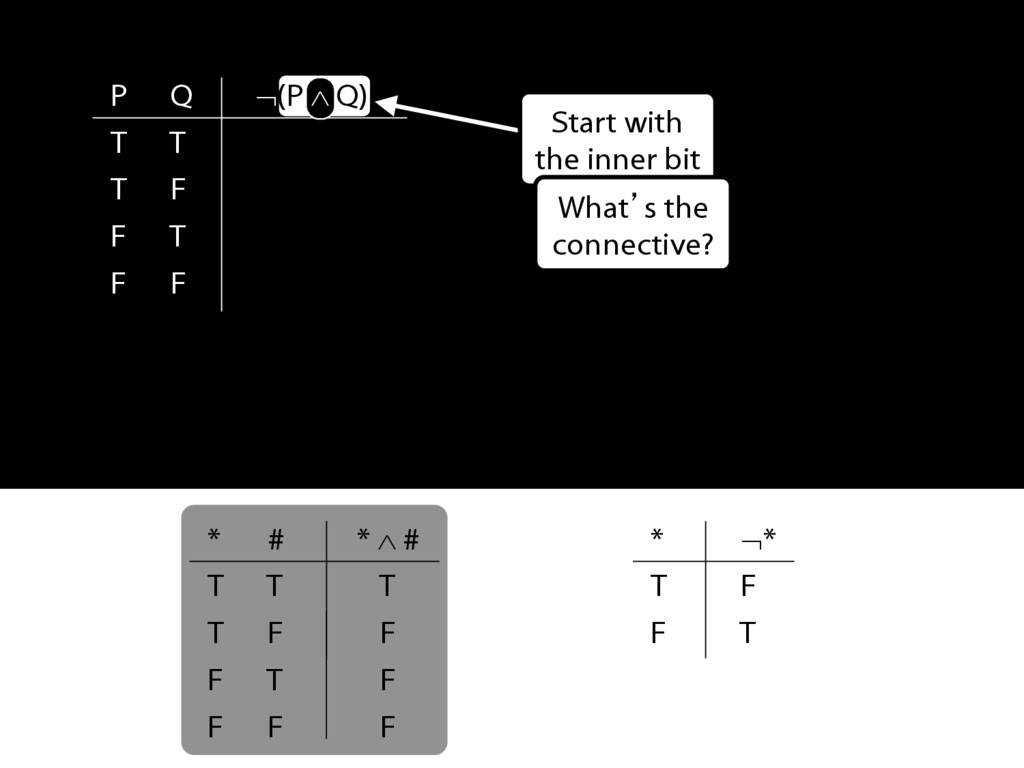

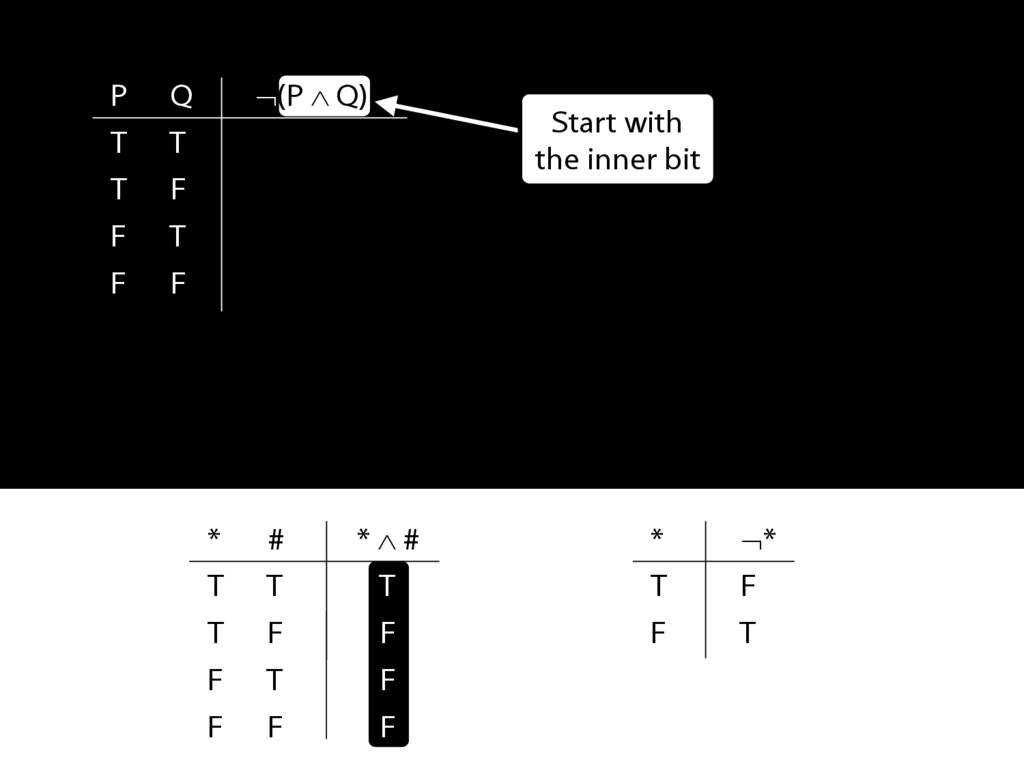

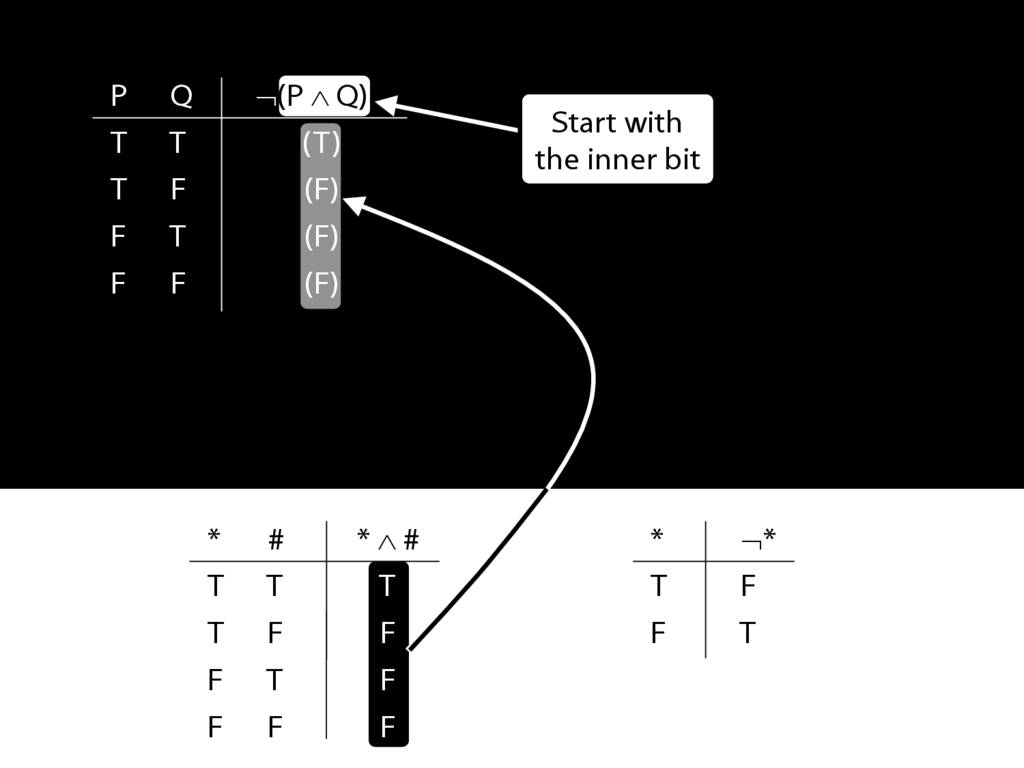

connective (joins sentences)

sentence (can be true or false)

atomic sentence (no connectives)

non-atomic sentence (contains connectives)

Our approach to studying logic will involve a formal language called awFOL.

'FOL' stands for first-order language, and I call this particular first-order language

`awFOL` because, like nearly all first-order languages used in textbooks, it’s awful.

(Where are the binary quantifiers? Why are brackets used with two completely

different meanings? ...)

The language of the textbook is called ‘FOL’.

‘awFOL’ is basically the same as FOL except that you can replace symbols with

words, which makes typing it easier.

Also 'FOL' is a really stupid name because there are lots of first-order languages.

It's a bit like I ask you what language you speak and instead of saying

'Farsi' or 'English' or 'Cantonese' you say 'Language, I speak Language'.

But this is trivial, it doesn't really matter what you call things. Let's move on.

As I was saying, for the purposes of logic we are going to use a formal language.

In order to get a sense for this language, let's compare it to English.

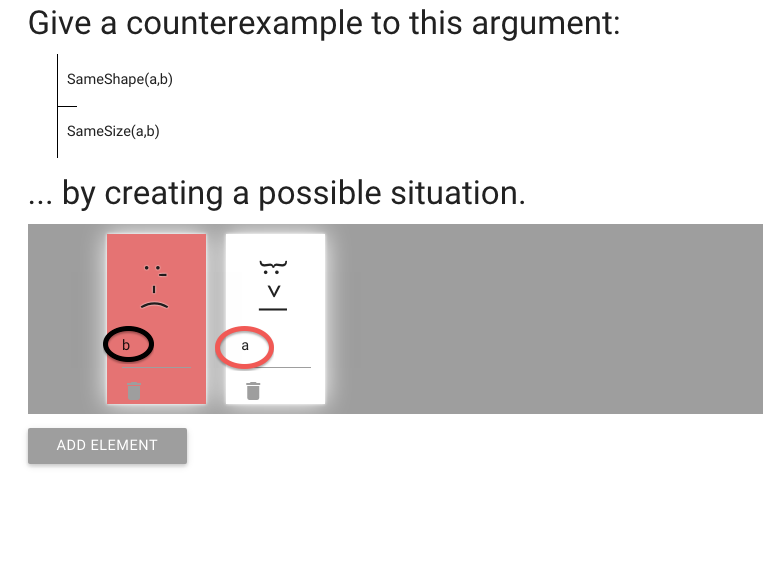

Take a look at this sentence: 'John is square'.

This is a sentence.

For now a sentence is just something capable of being true or false.

(In a longer course we would define what it is to be a sentence more carefully.)

In English there are names ...

... these are terms that function to refer to objects.

There are also predicates, like 'Square'.

Predicates are things that refer to properties.

In this case the property is that of being square.

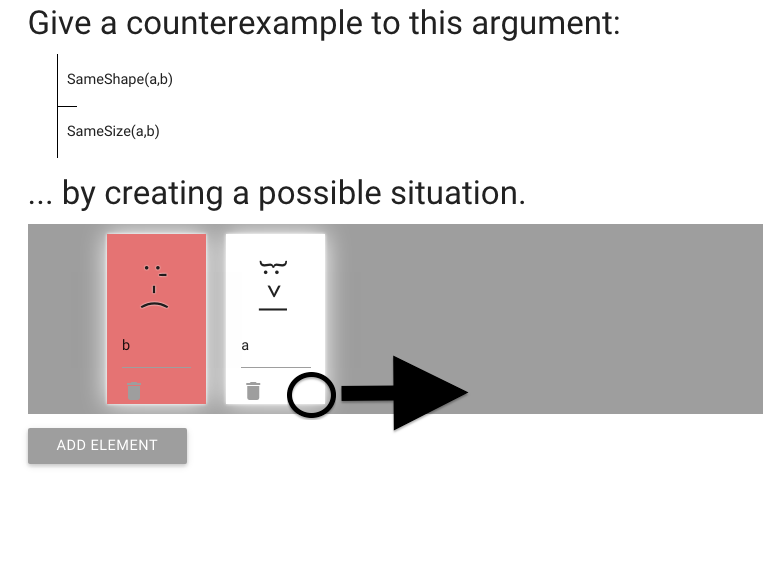

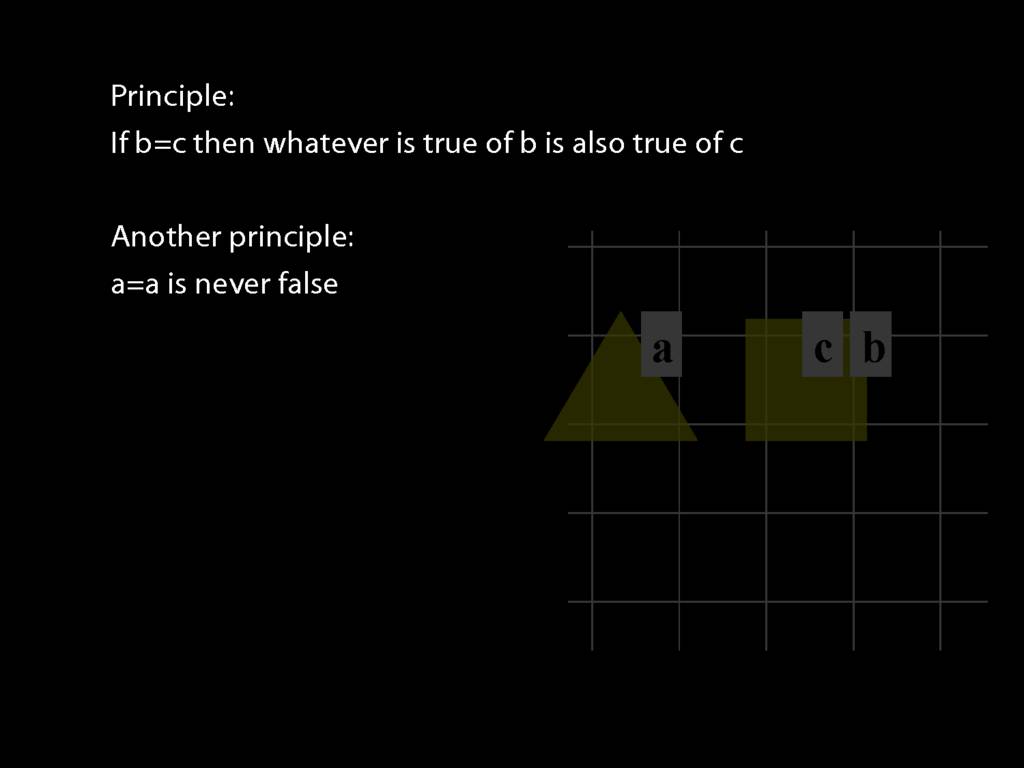

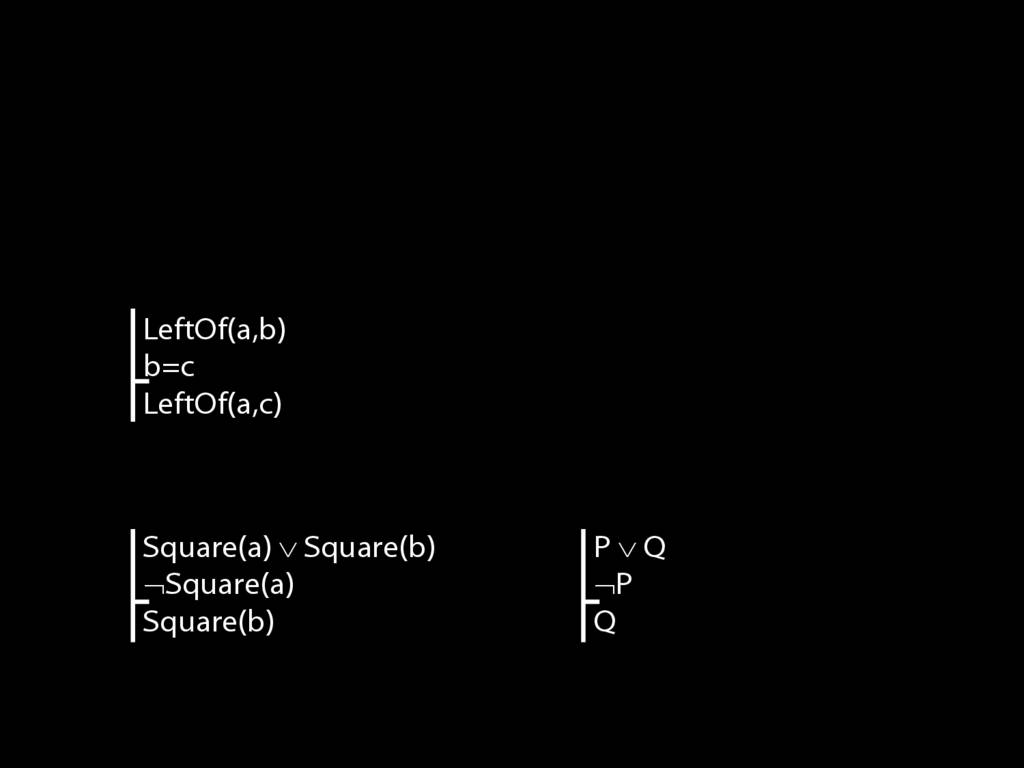

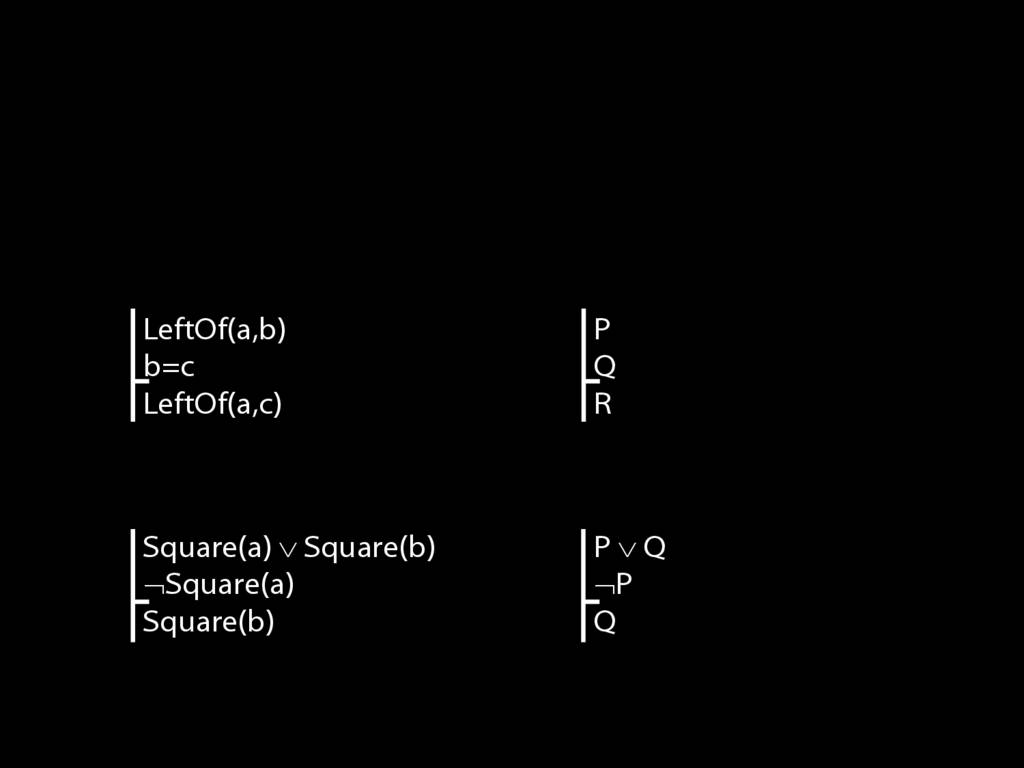

Take a look at this sentence.

Some properties relate several things; for example,

being 'to the left of' involves two things rather than one.

The expressions for these relational properties are also called predicates.

By the way, this is also a sentence containing multiple names, 'John' and 'Ayesha'.

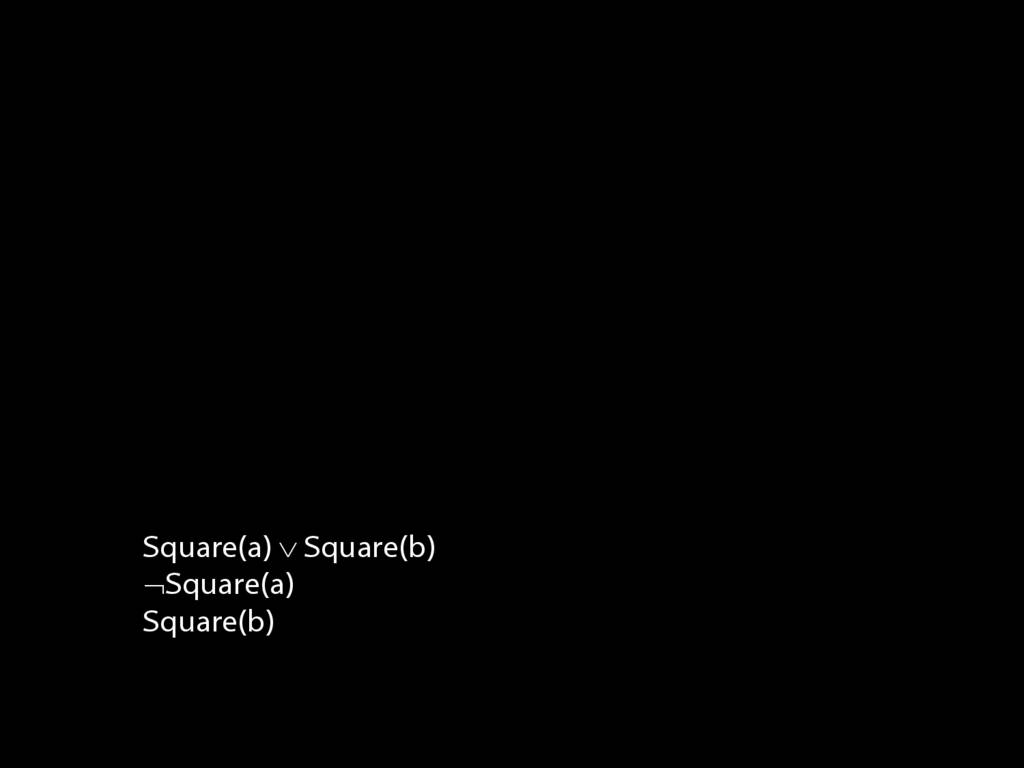

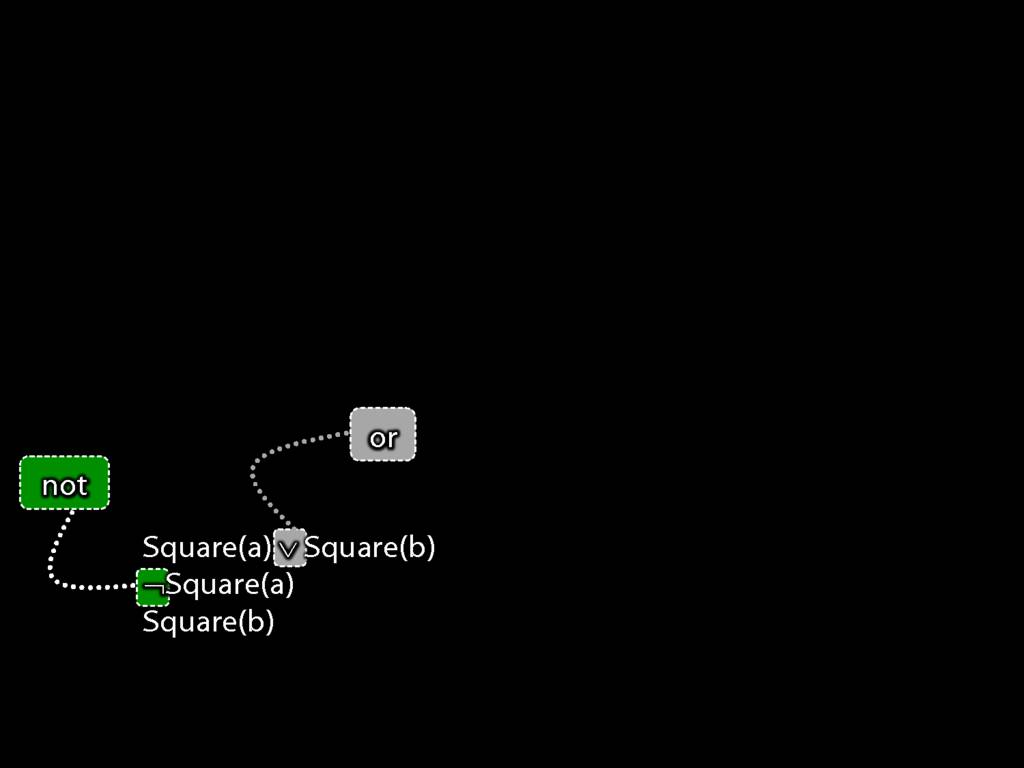

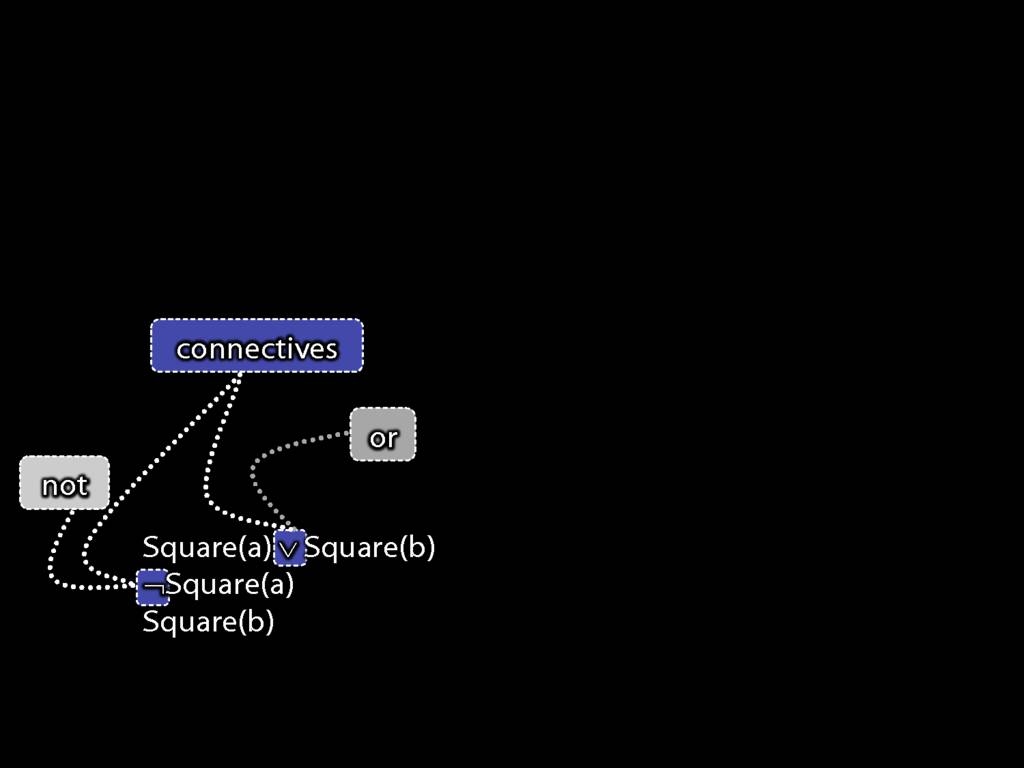

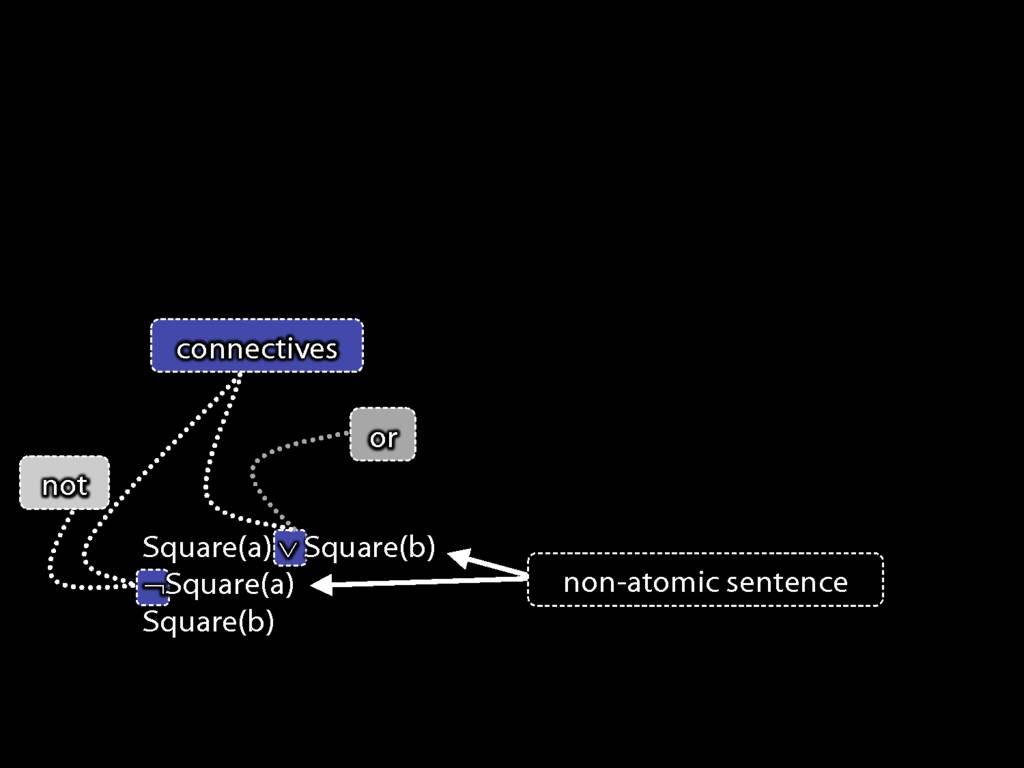

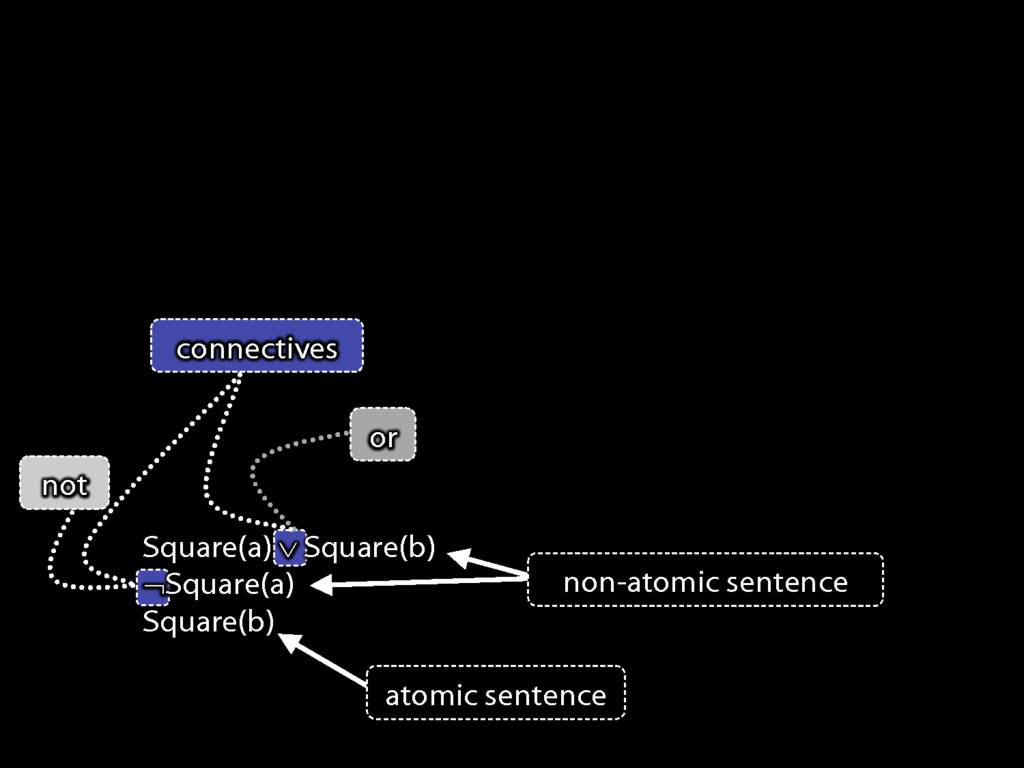

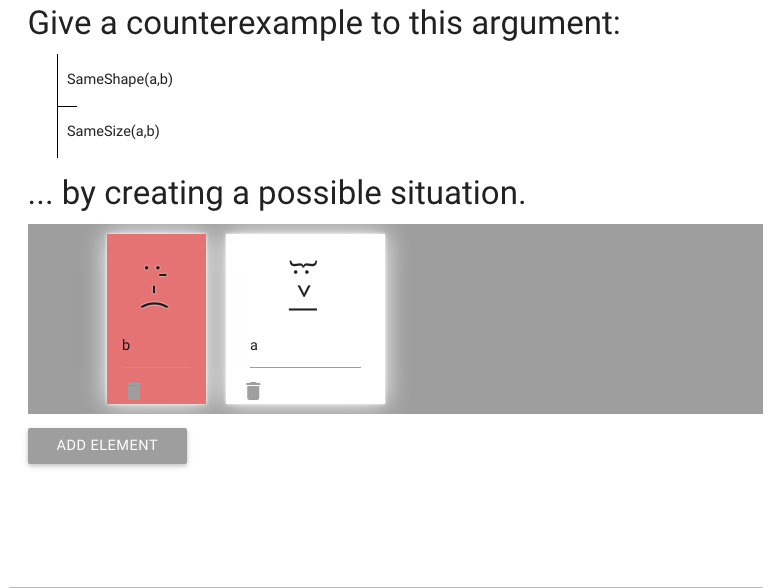

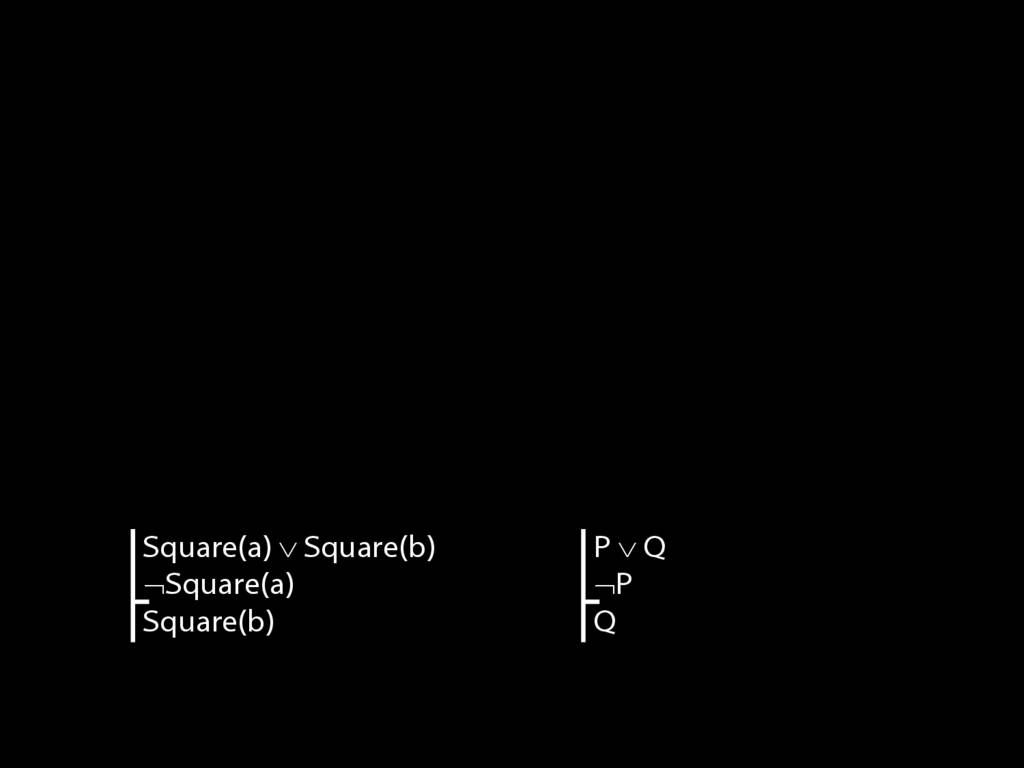

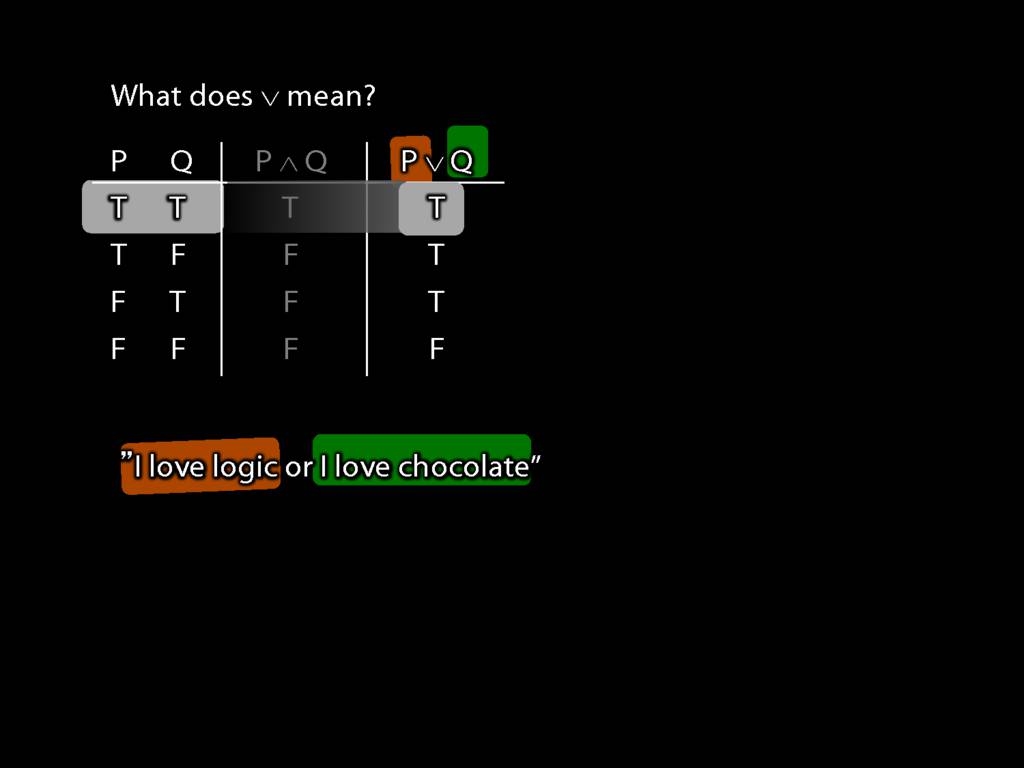

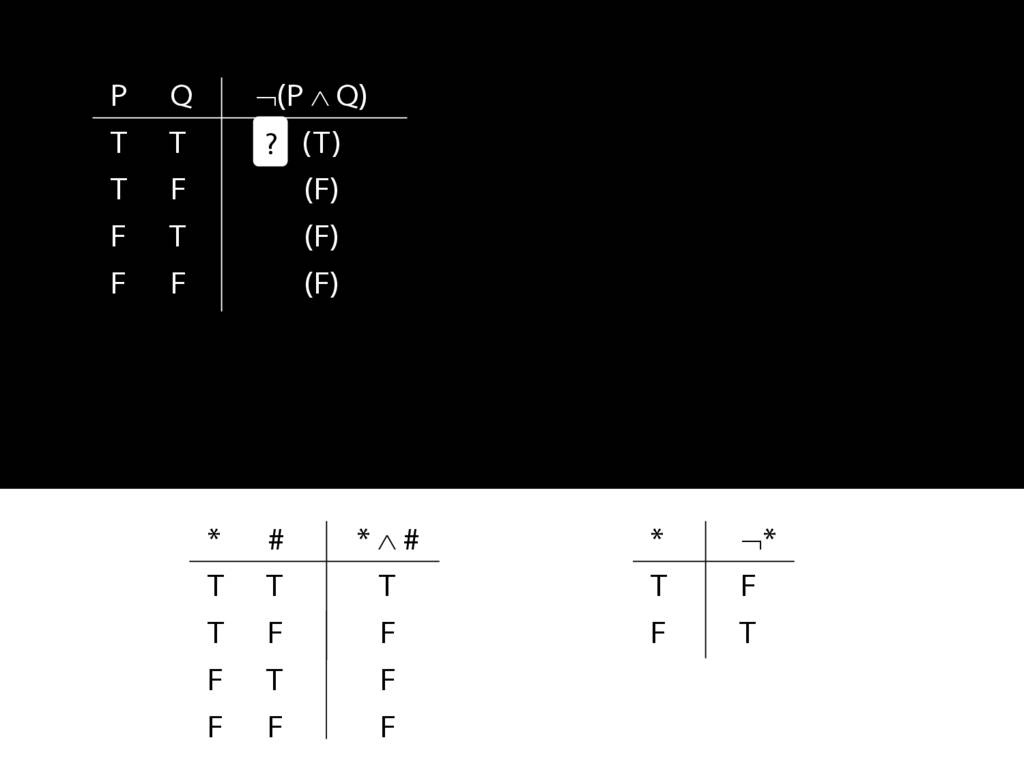

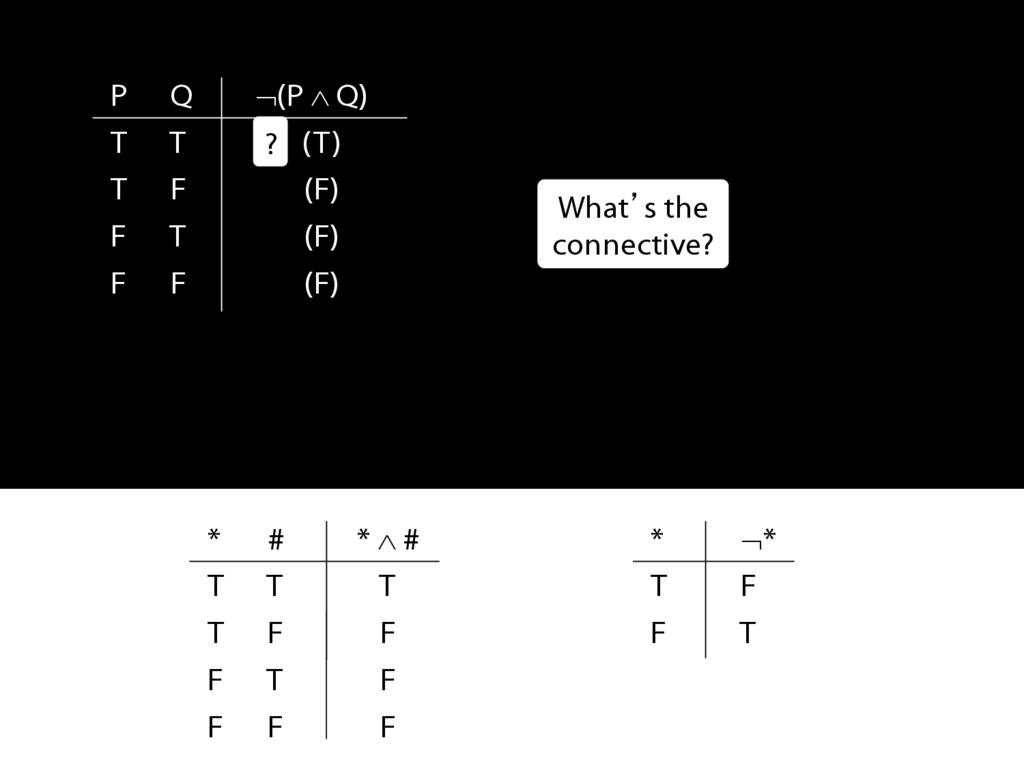

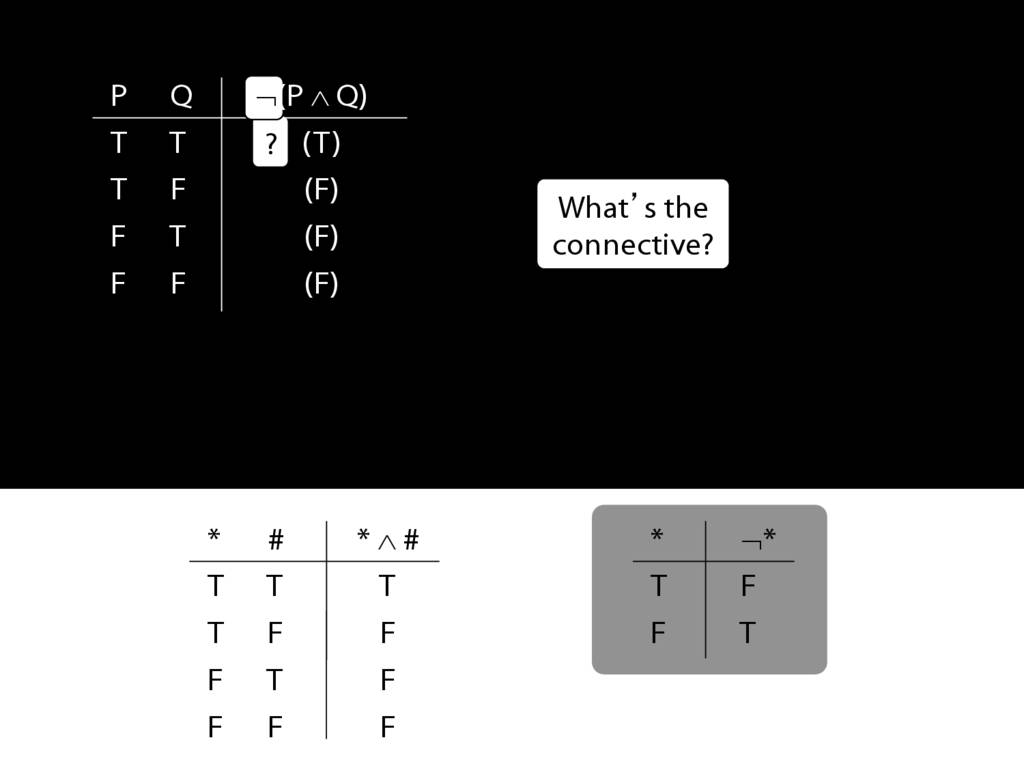

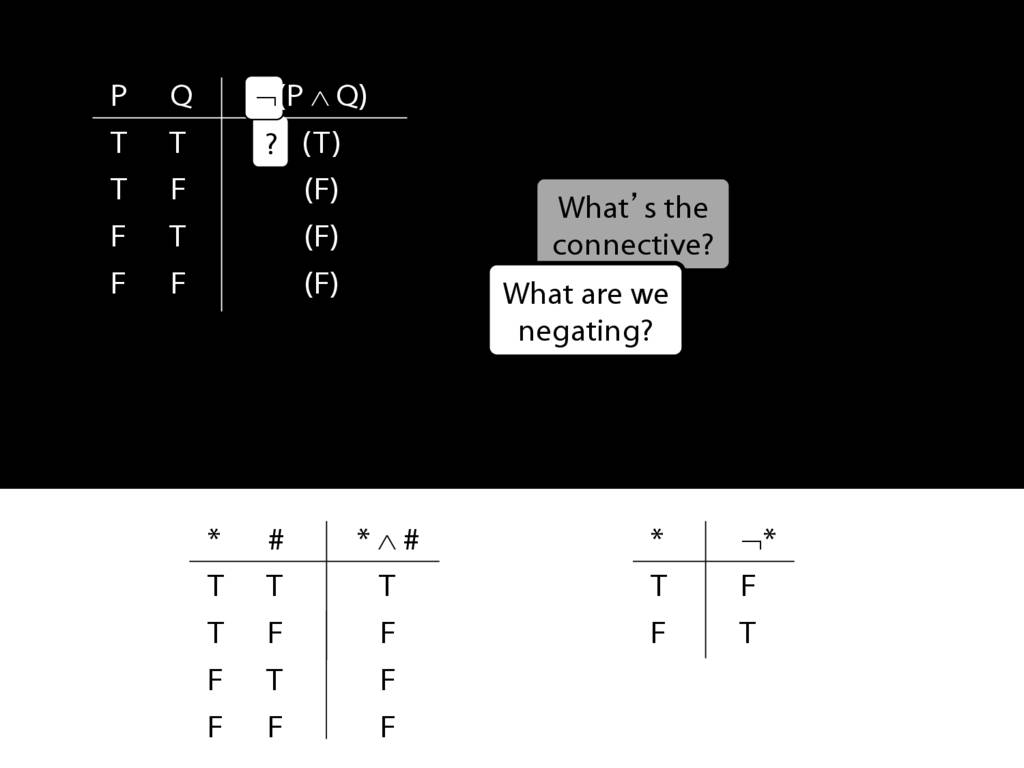

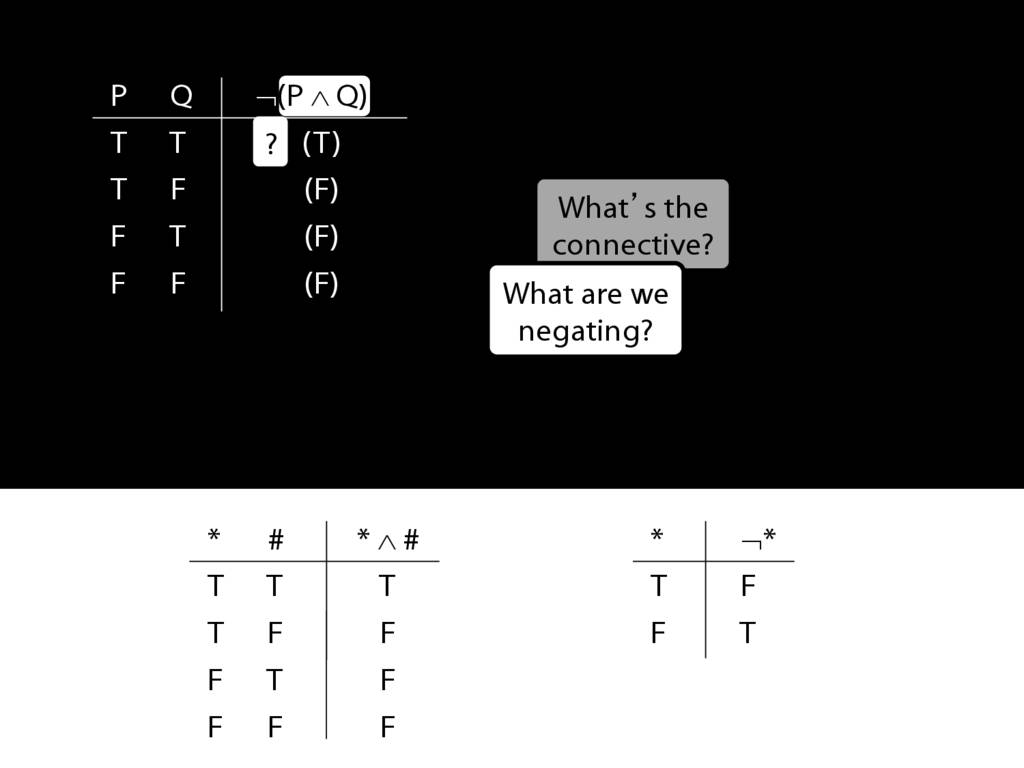

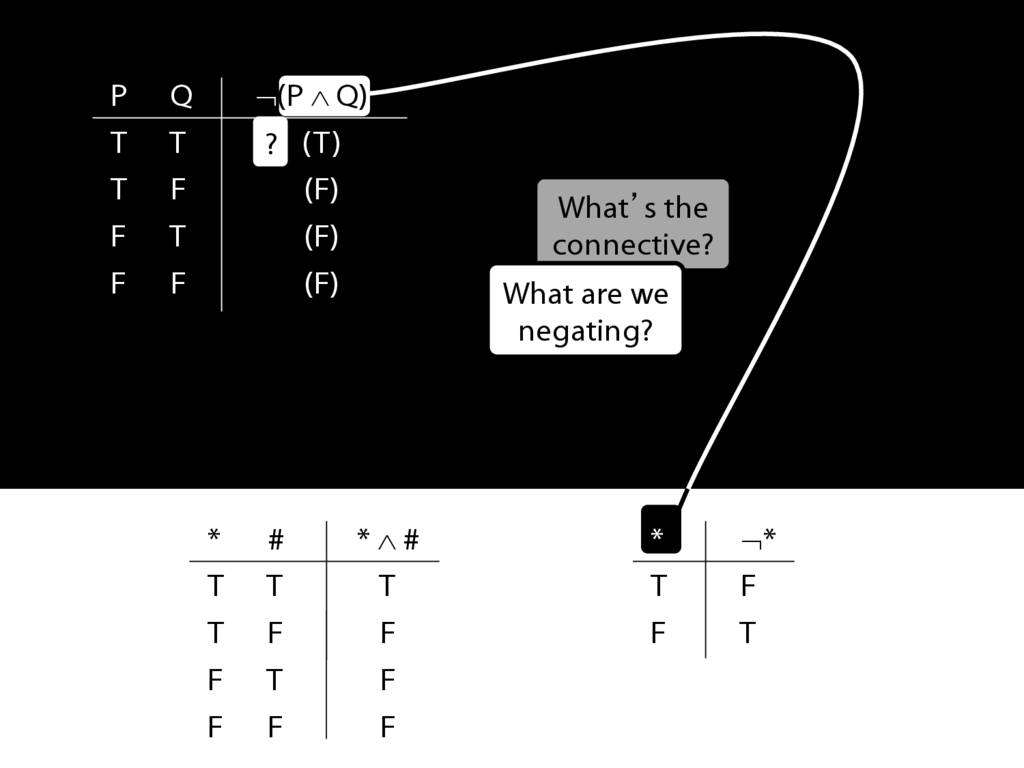

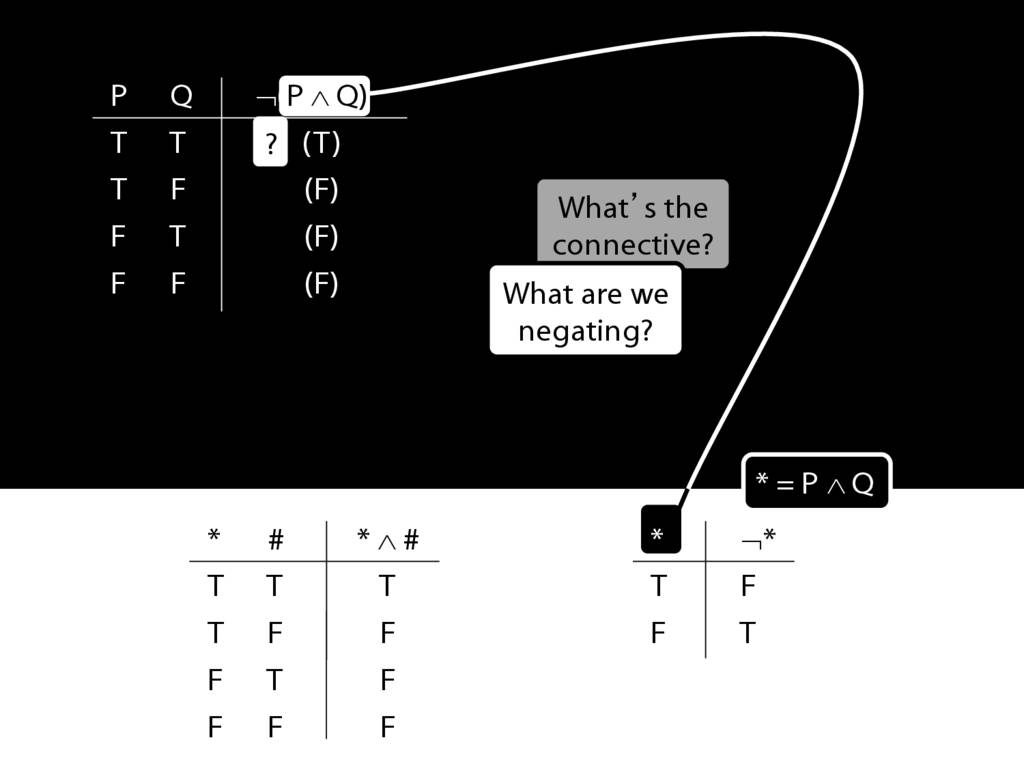

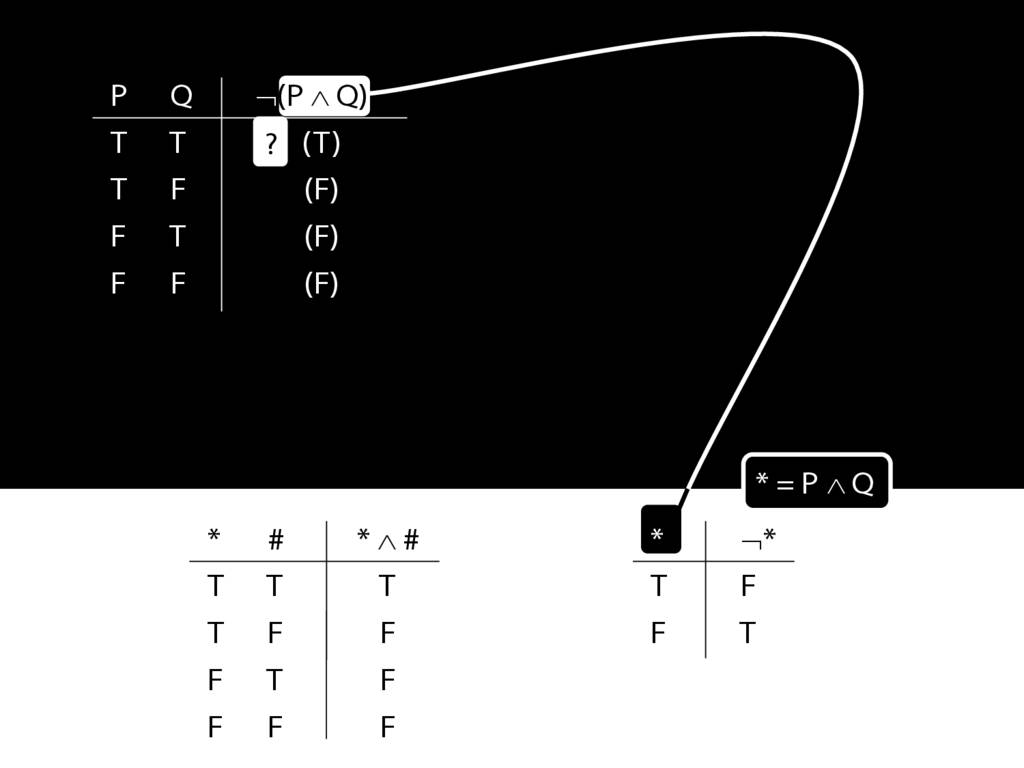

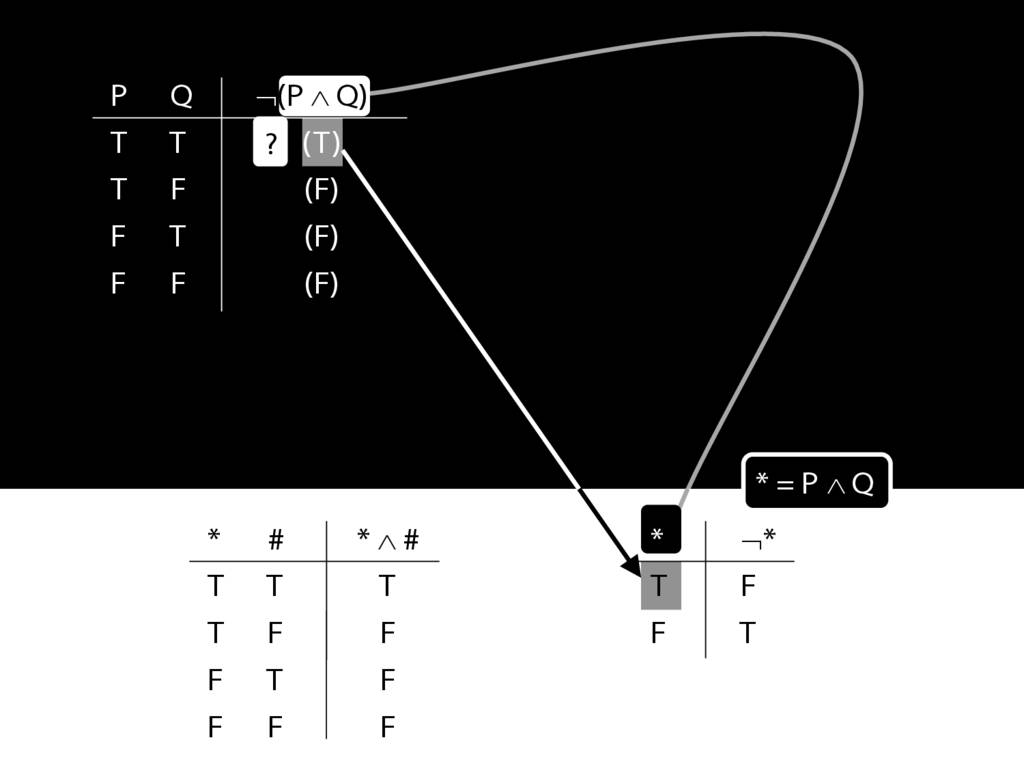

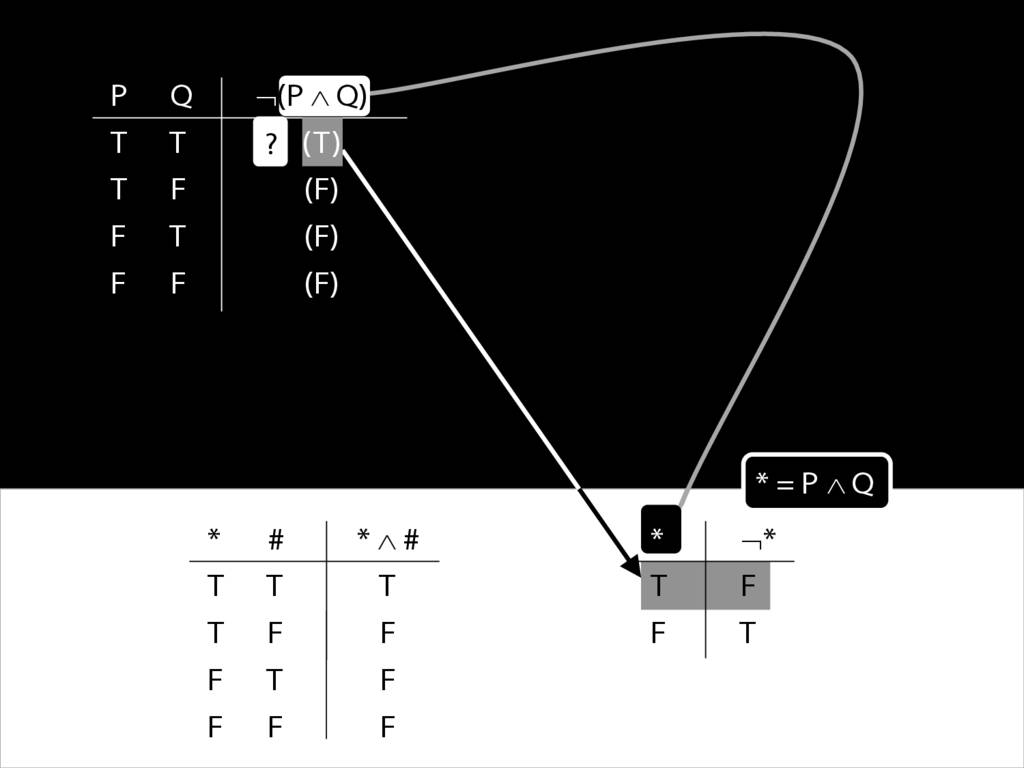

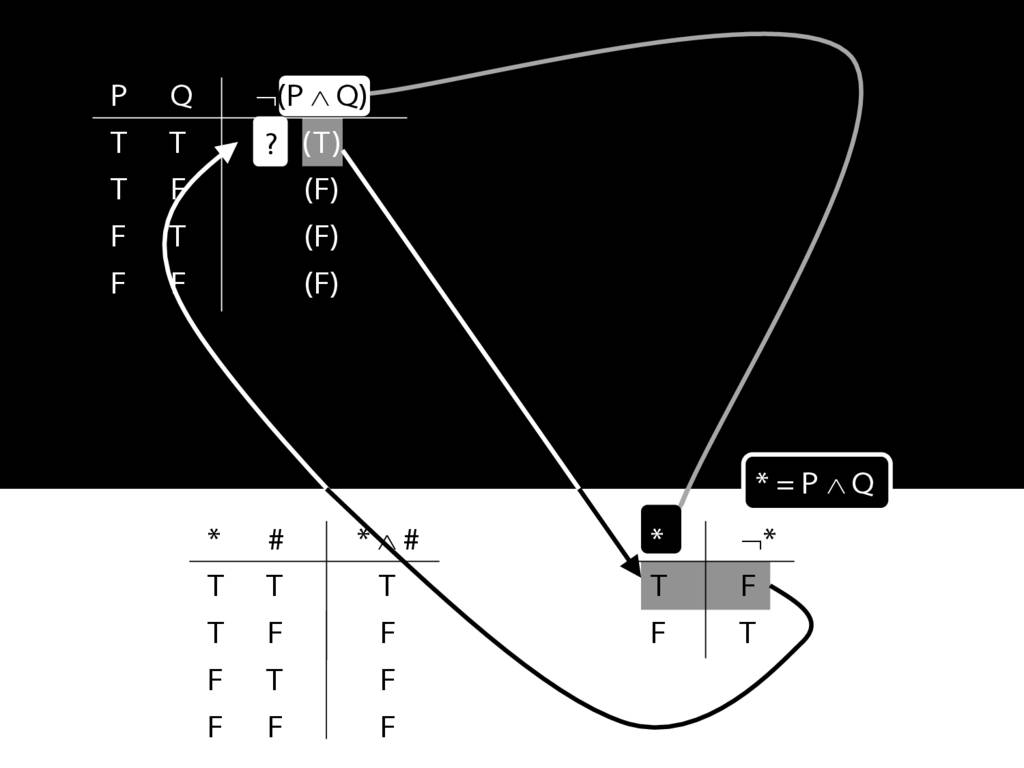

Now have a look at this sentence, 'John is square or Ayesha is triangular' ...

or, as I prefer to say, 'trinagulra'. (Did you spot the mistake? Well done.)

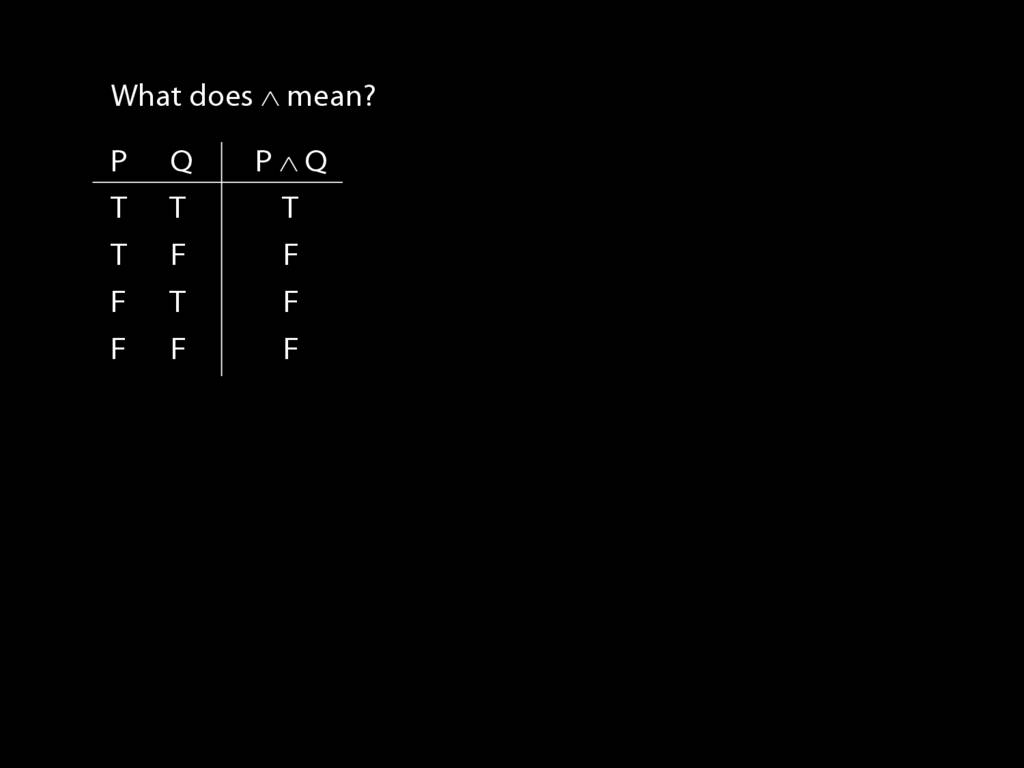

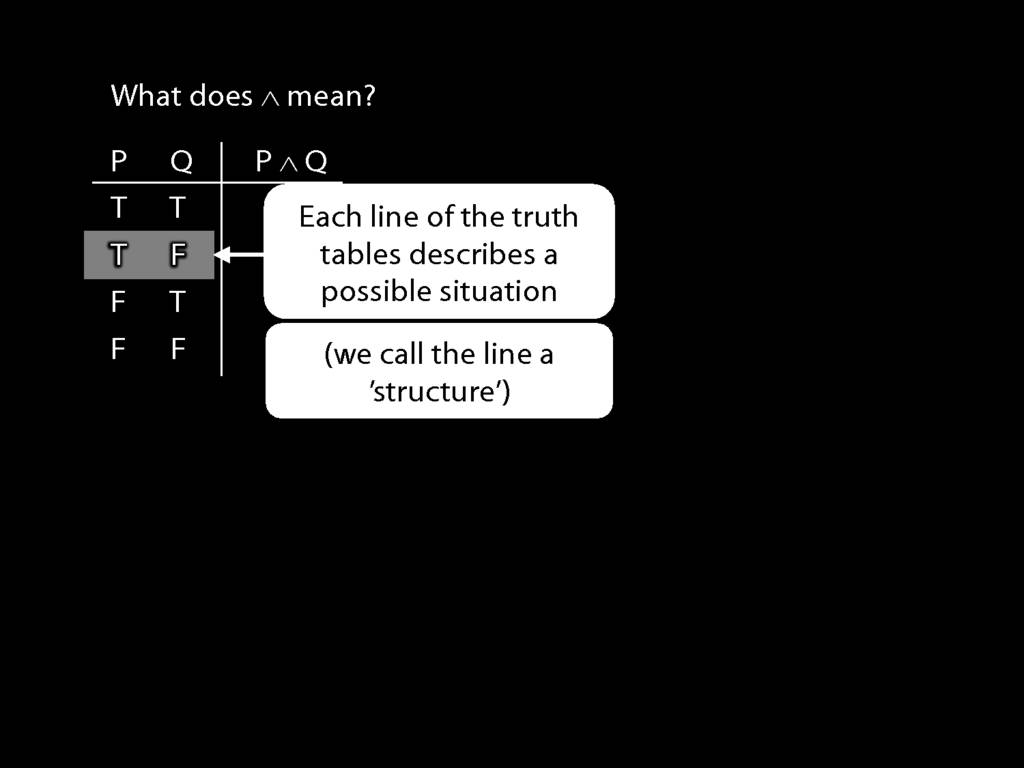

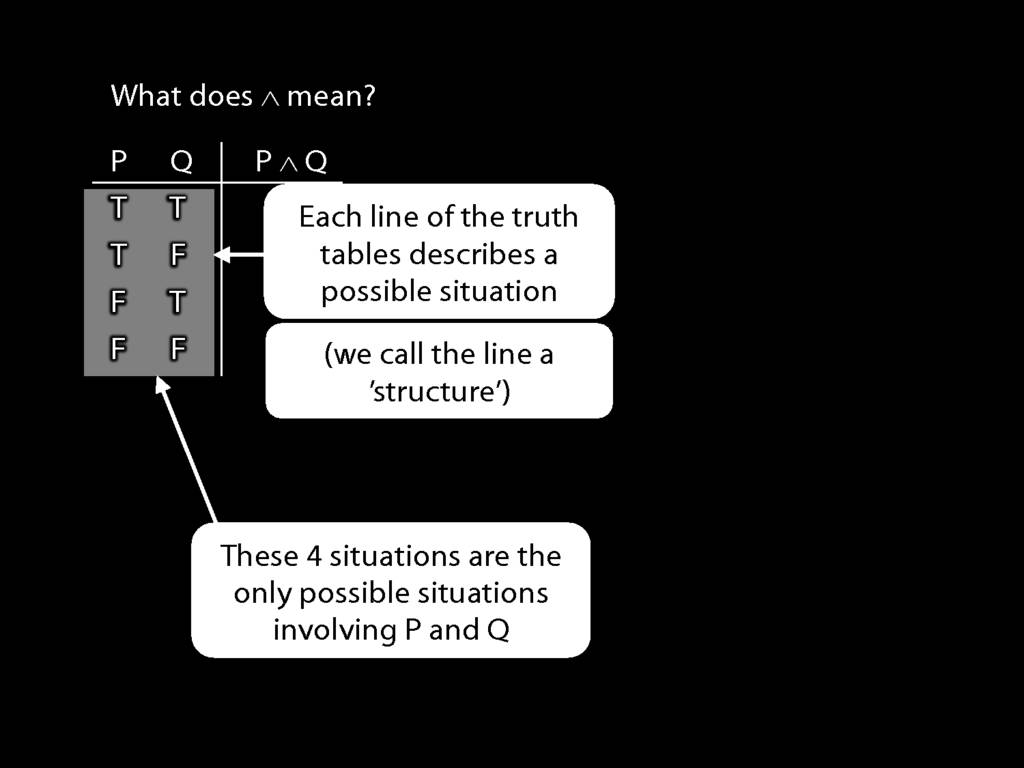

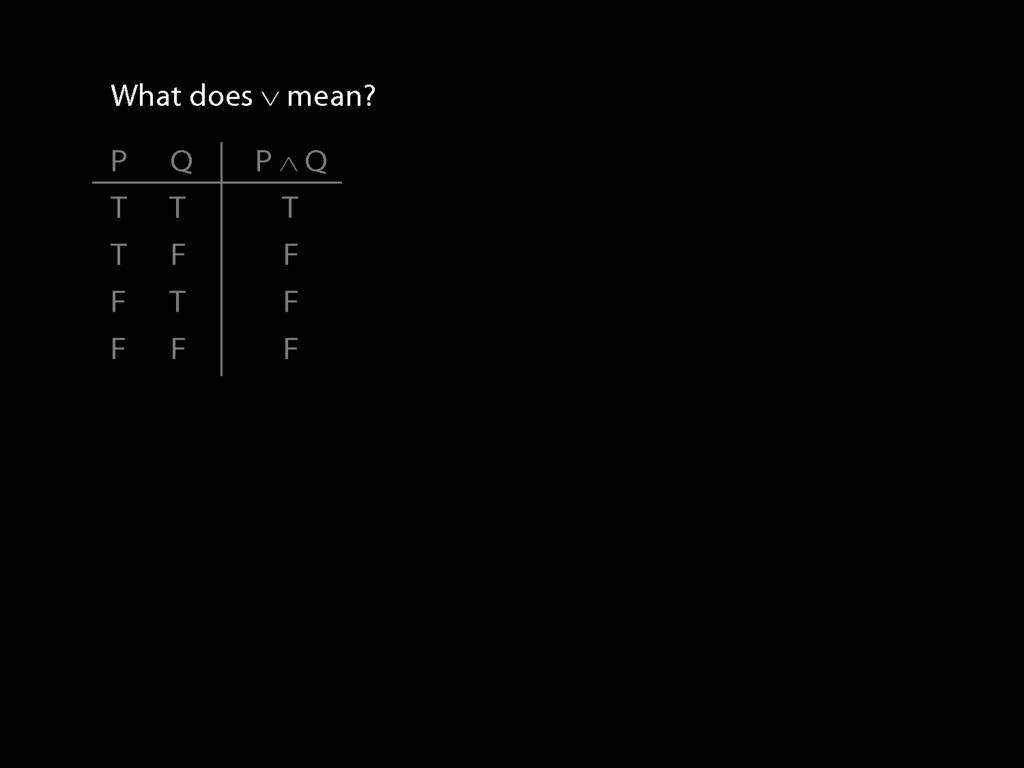

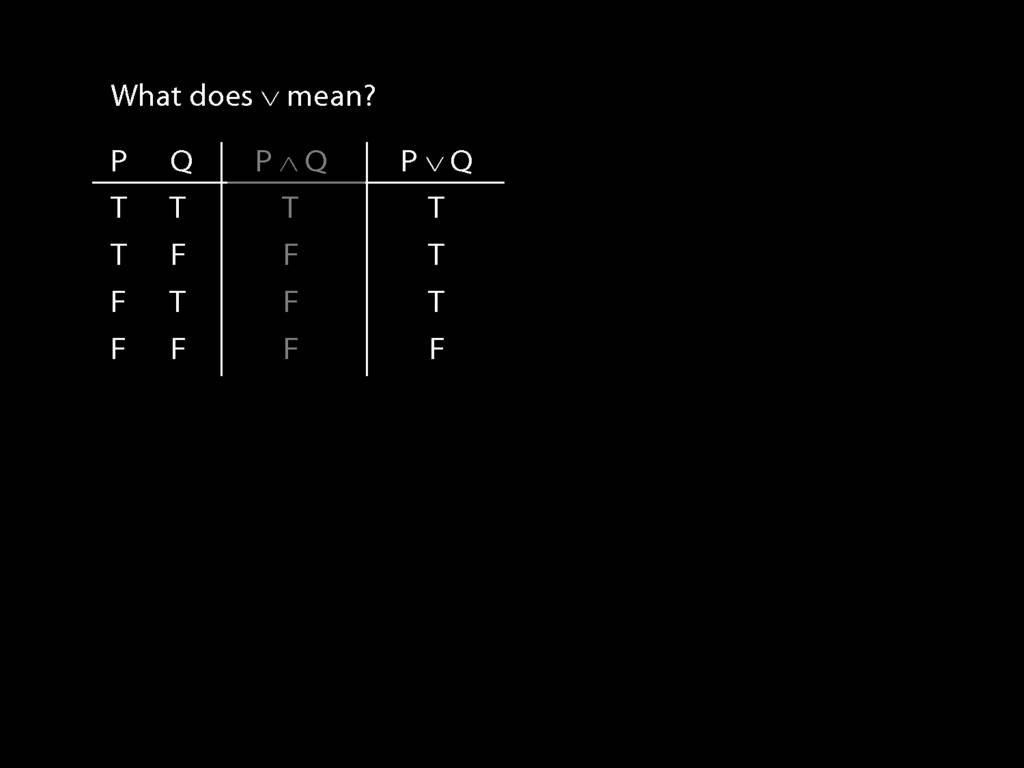

Consider the word 'or' in this sentence. It isn't a name or a predicate.

It doesn't refer to an object, nor to a property.

Instead its function is to join two sentences, making a new one.

We'll call things like this 'connectives'.

A connective is anything that you can combine with zero or more sentences to make a new sentence.

Here's another piece of terminology: a sentence with one or more connectives is 'non-atomic'.

And, as you'd expect, a sentence with no connectives is 'atomic'.

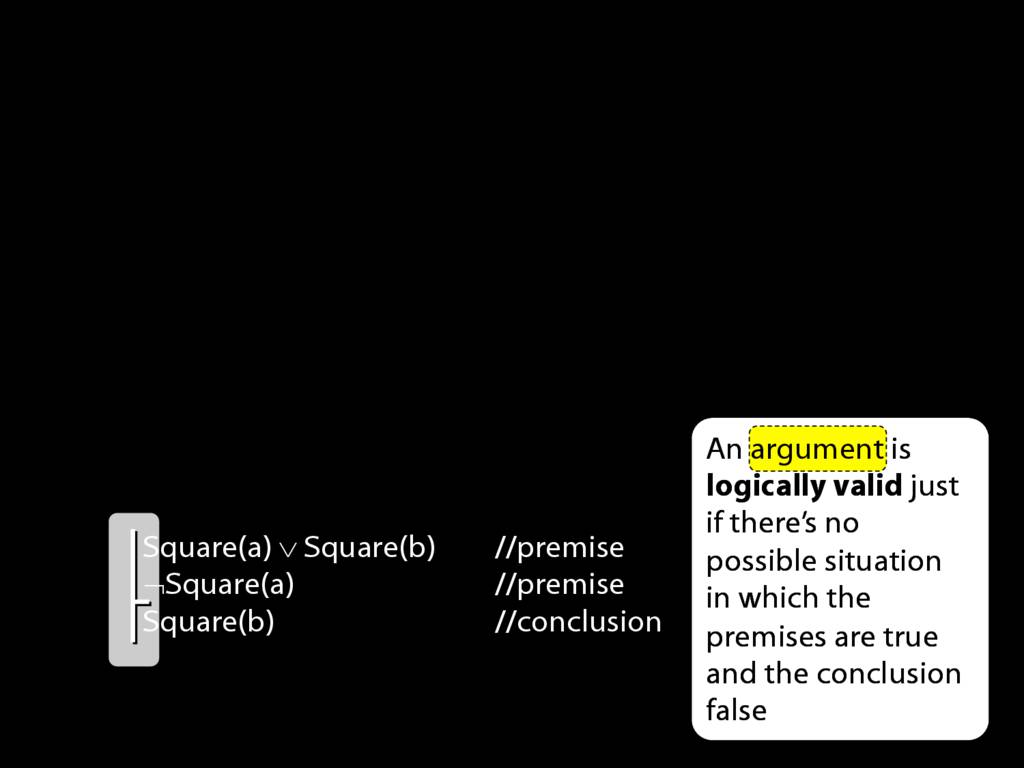

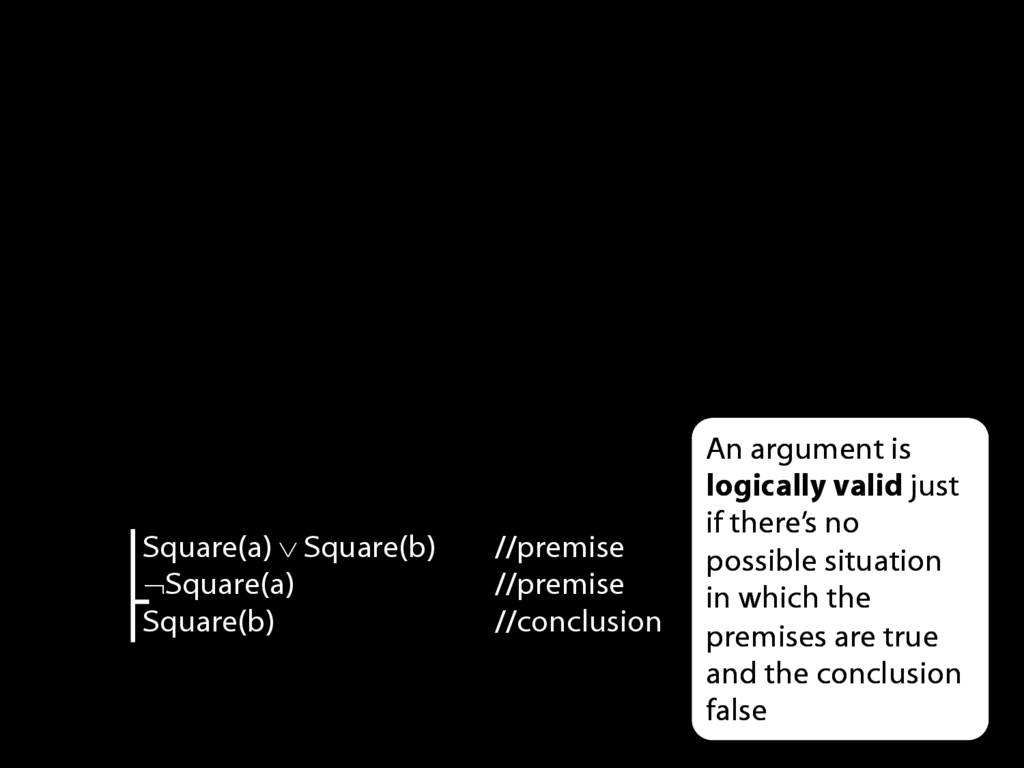

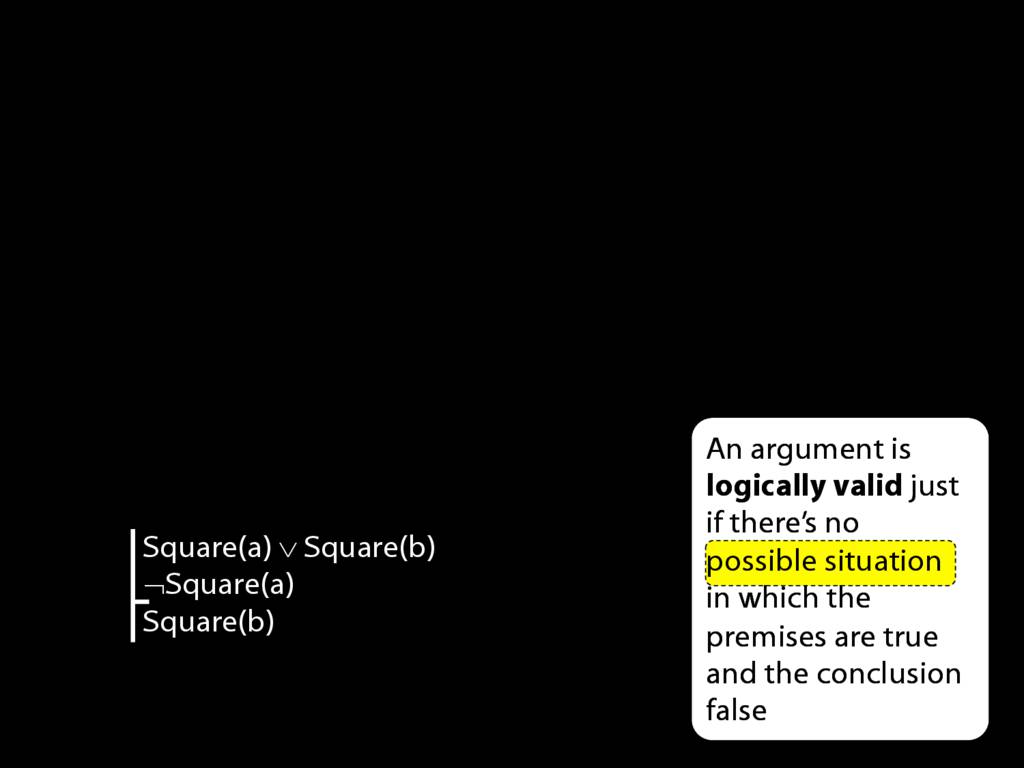

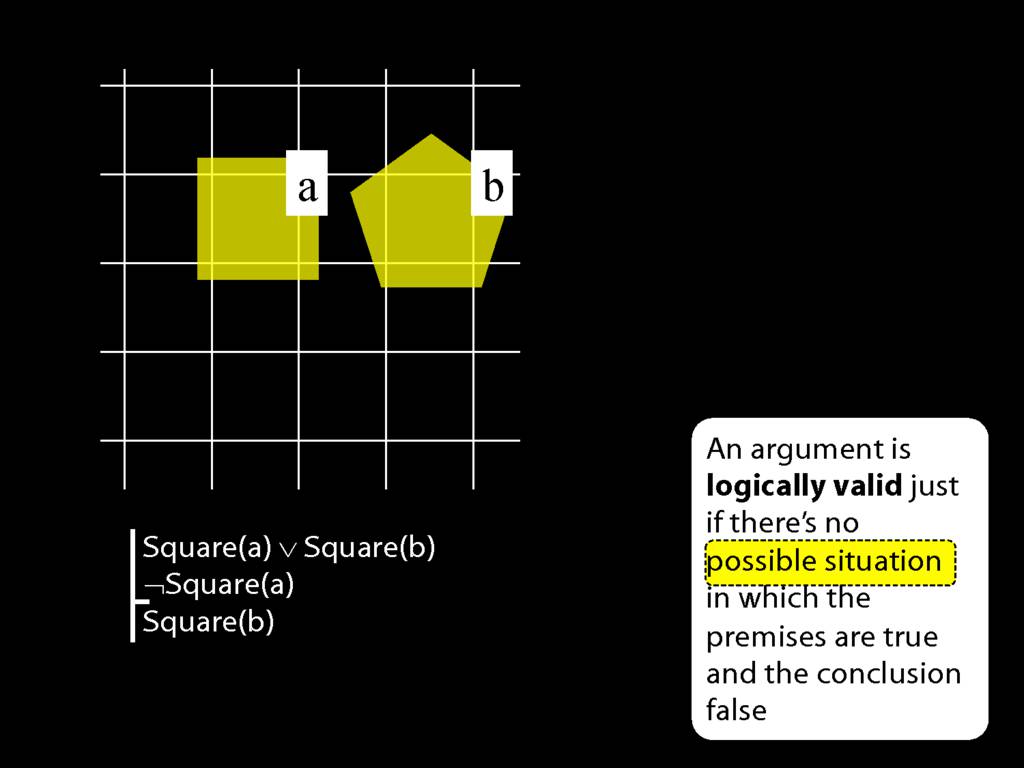

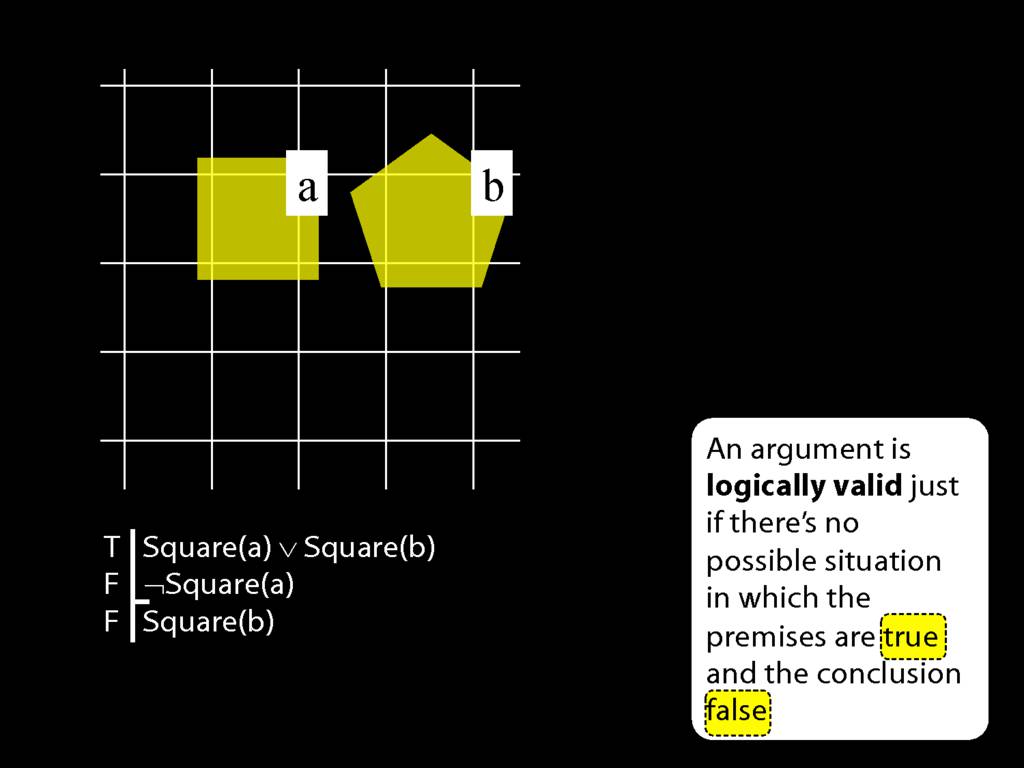

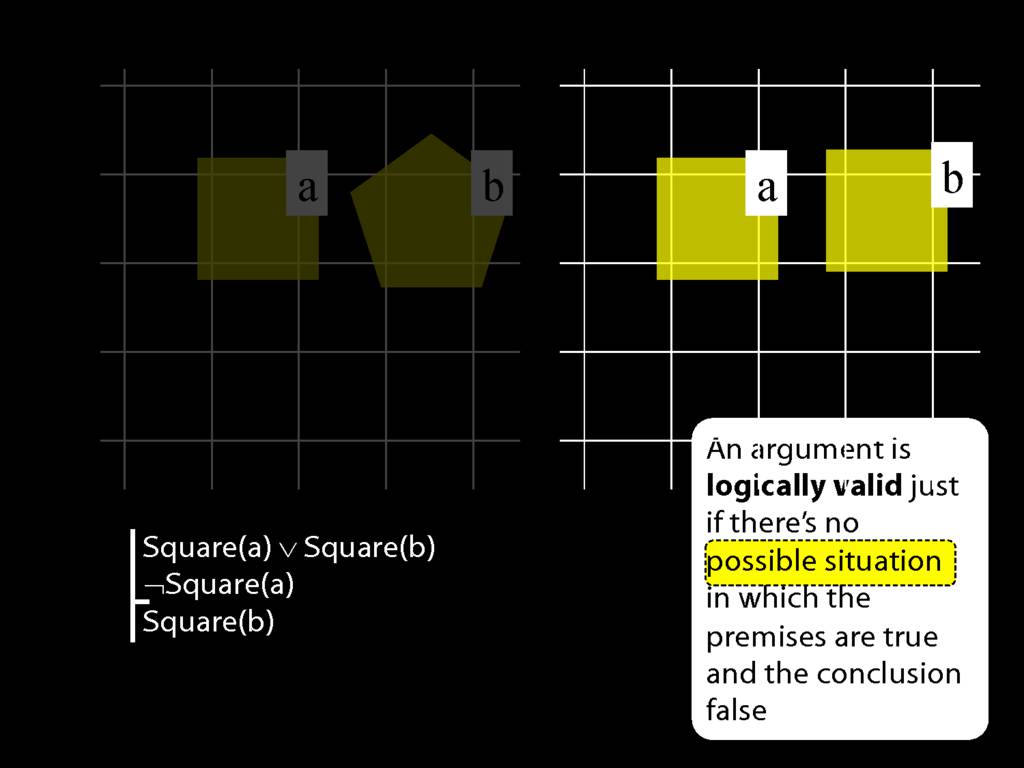

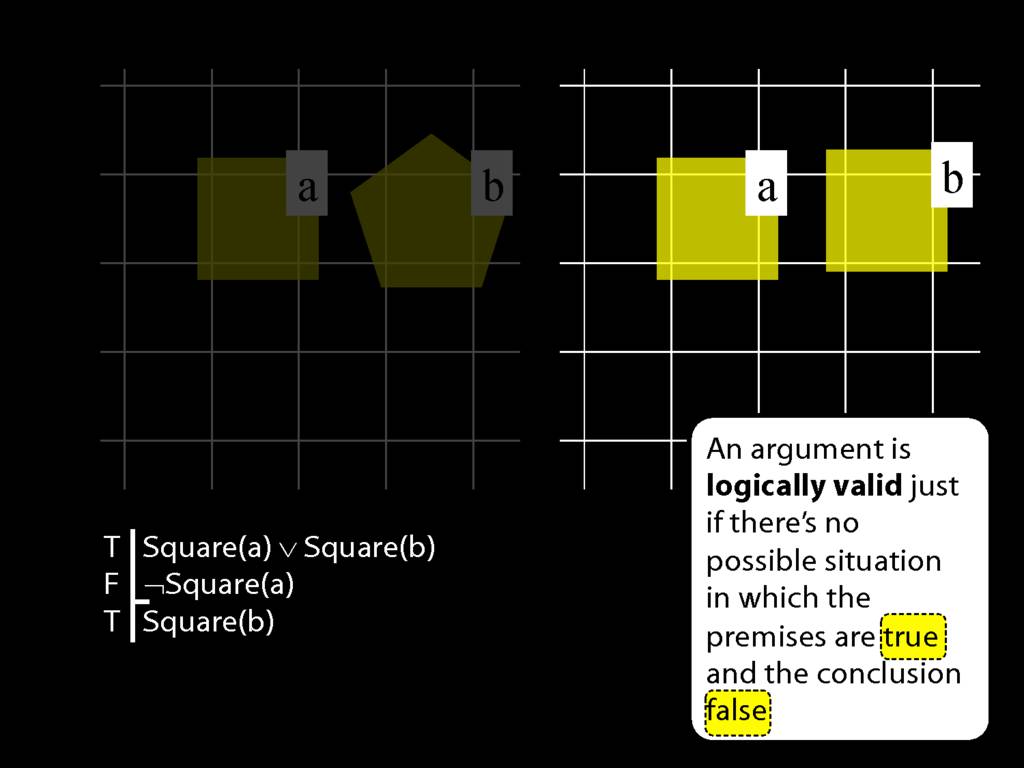

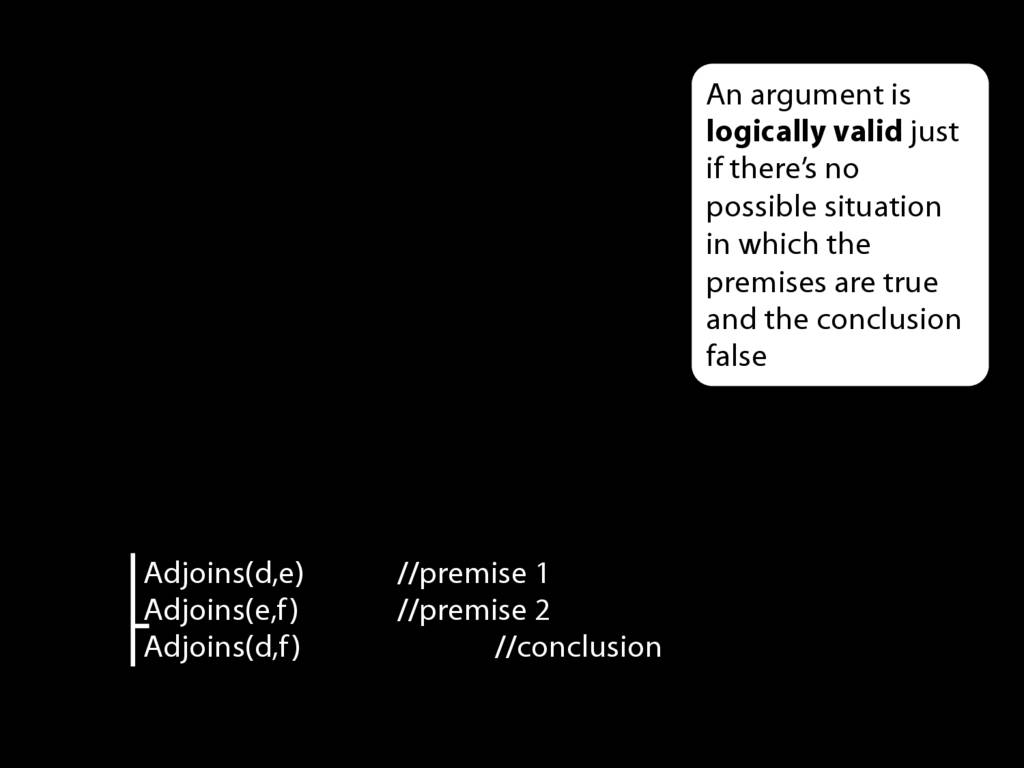

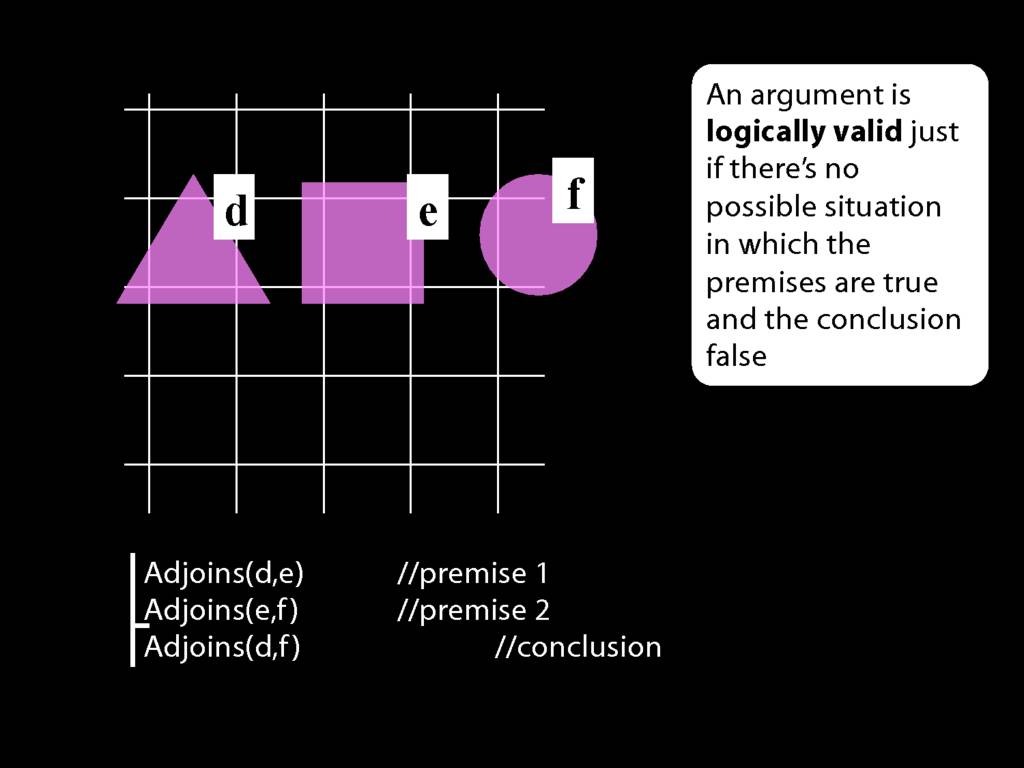

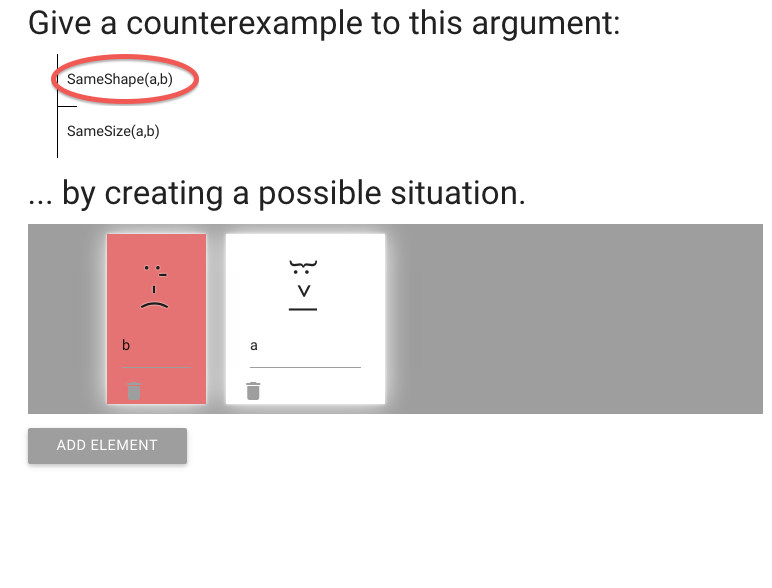

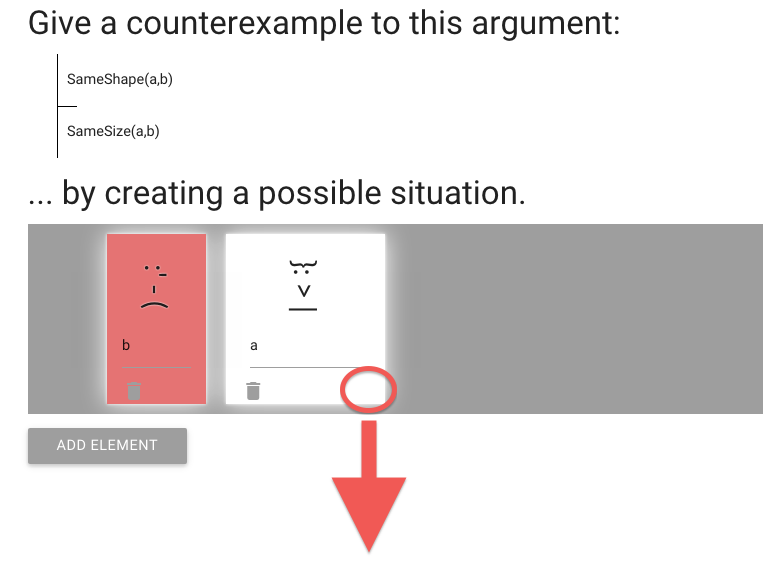

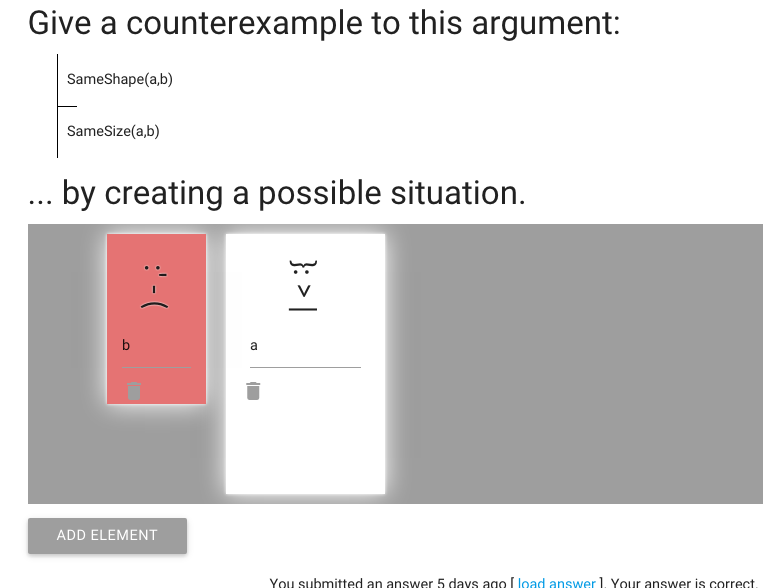

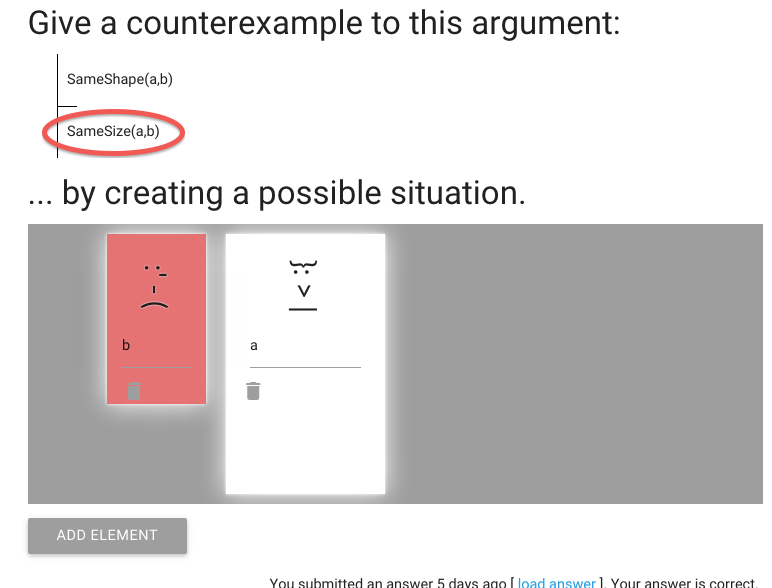

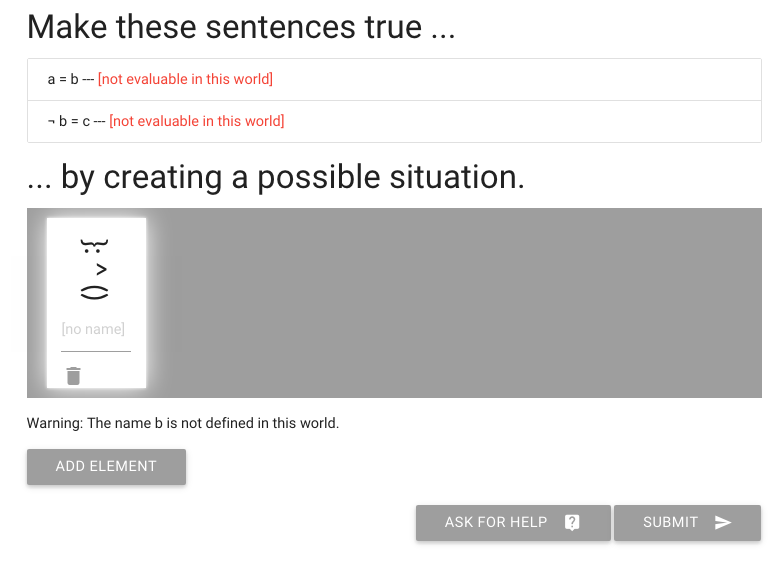

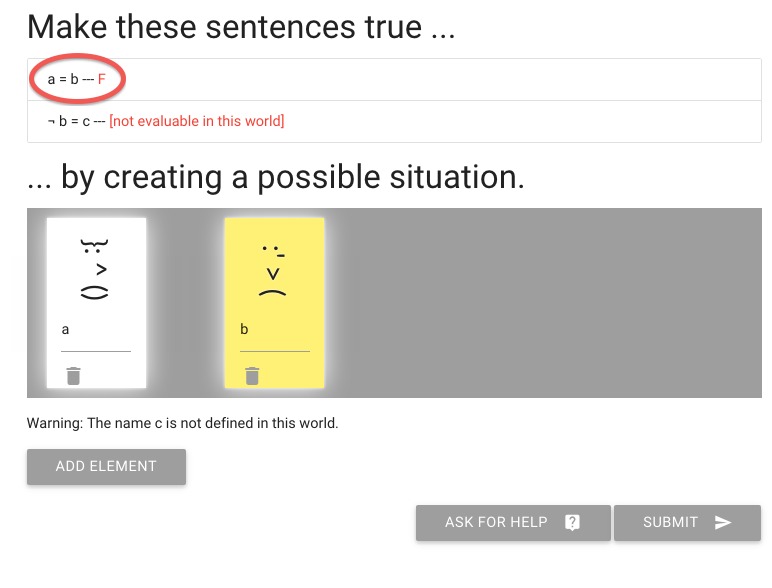

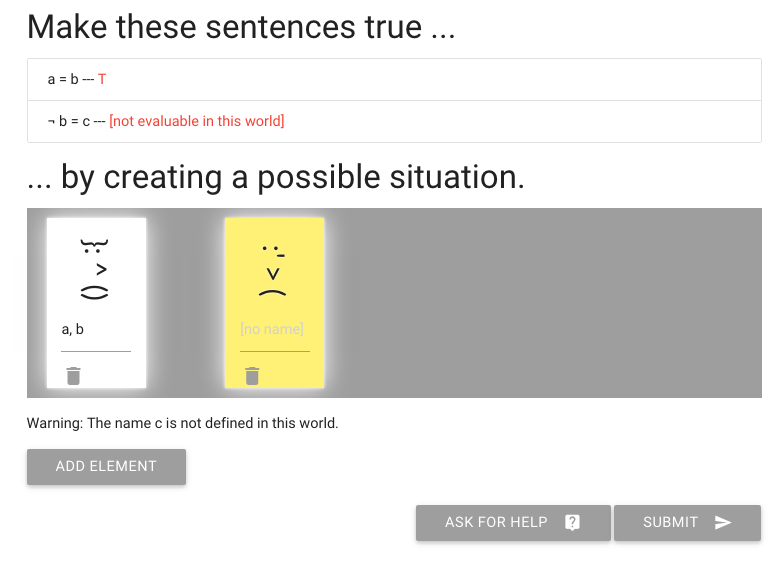

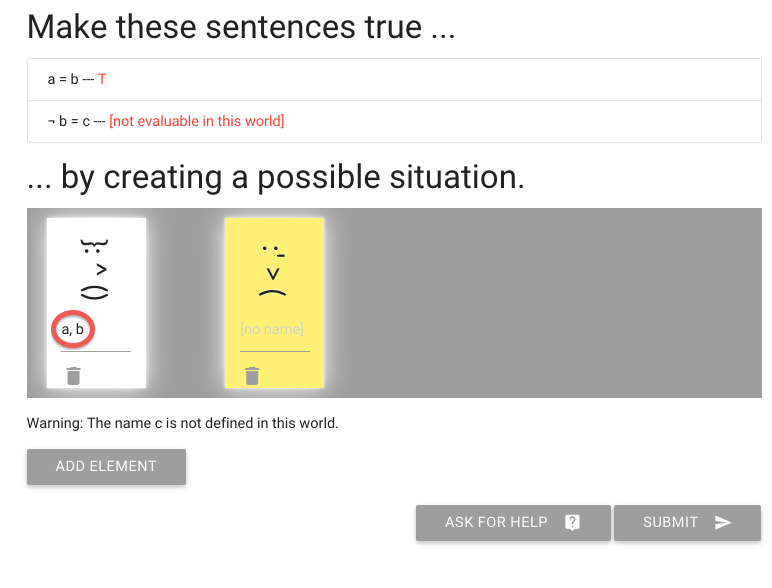

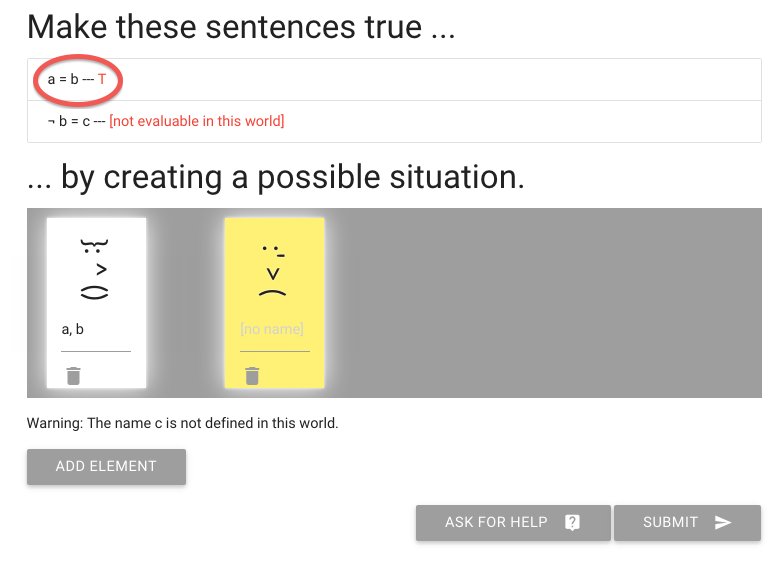

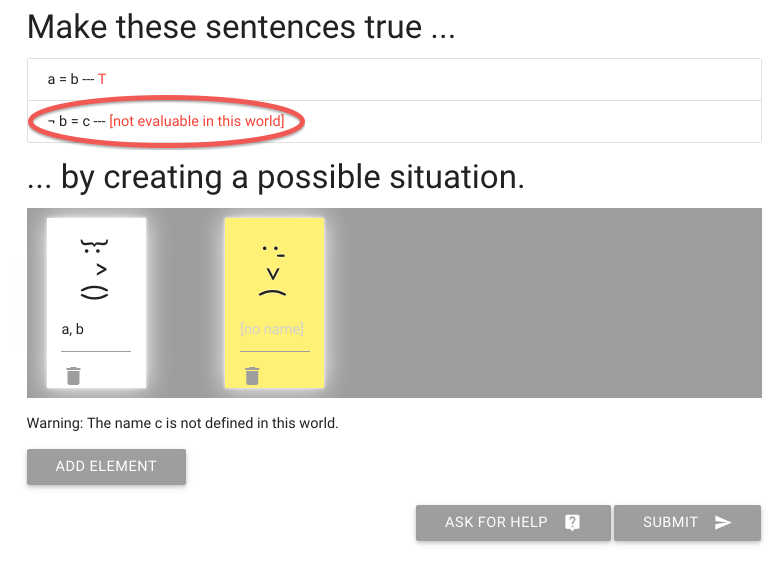

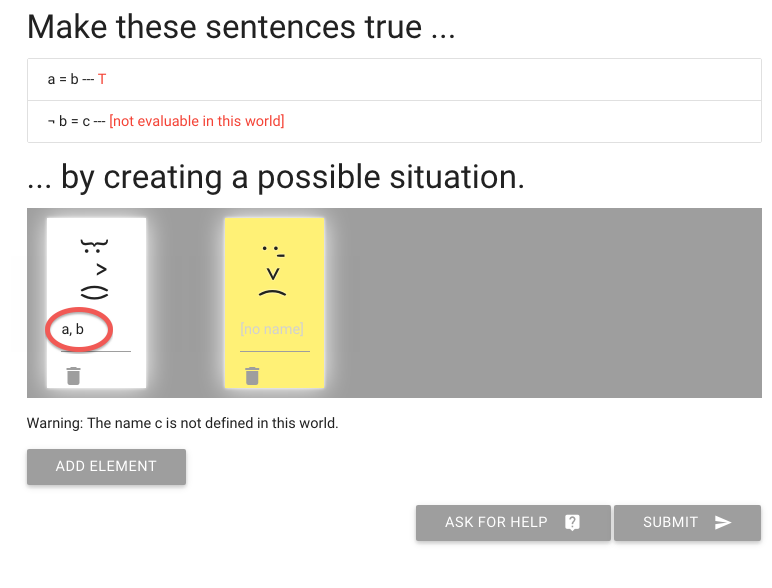

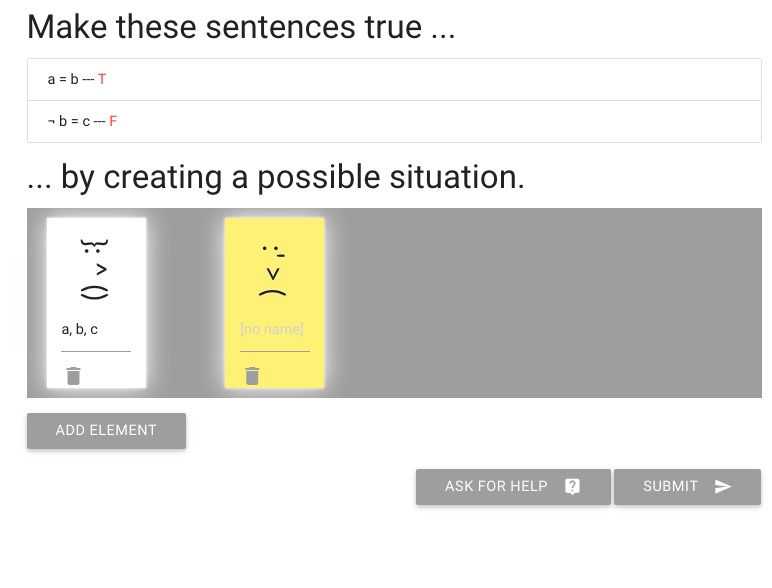

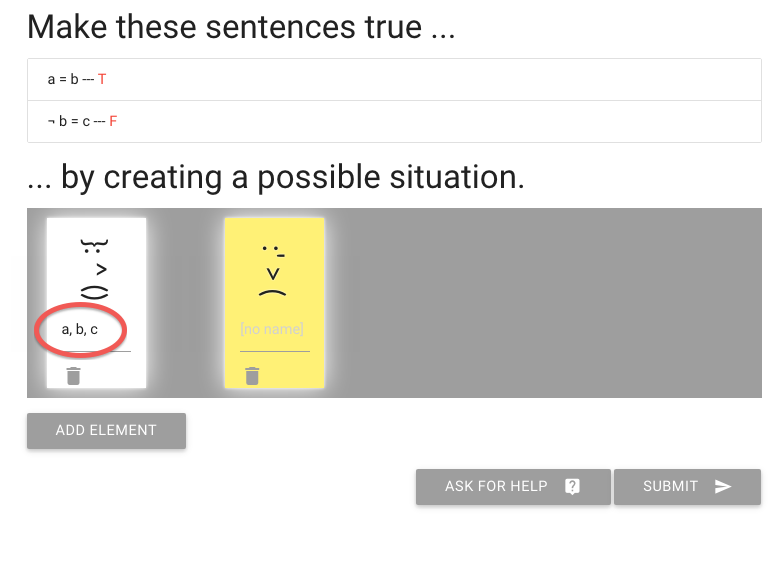

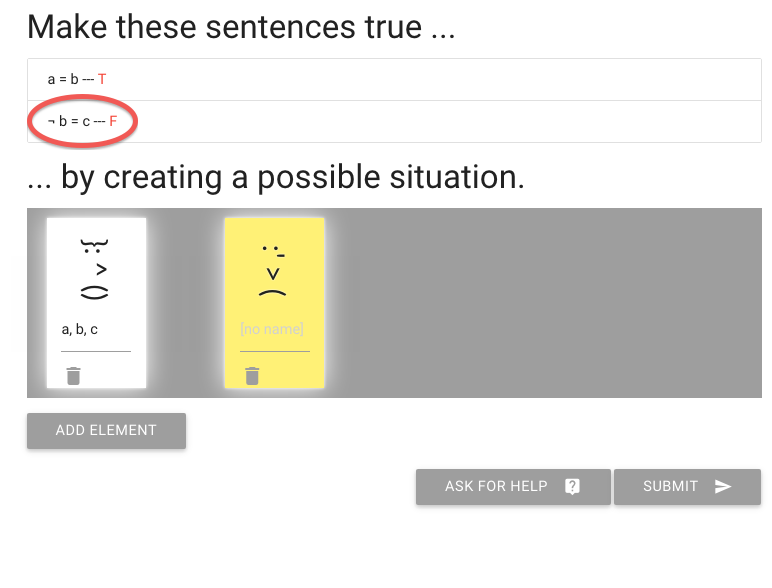

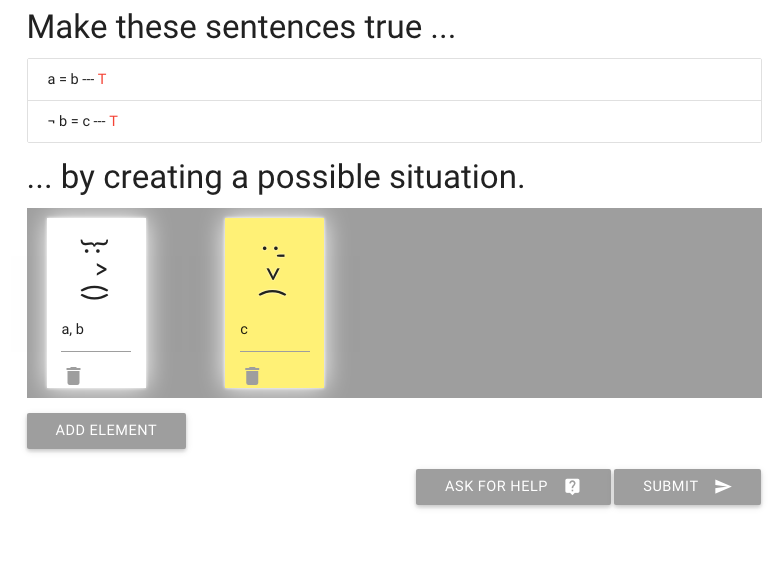

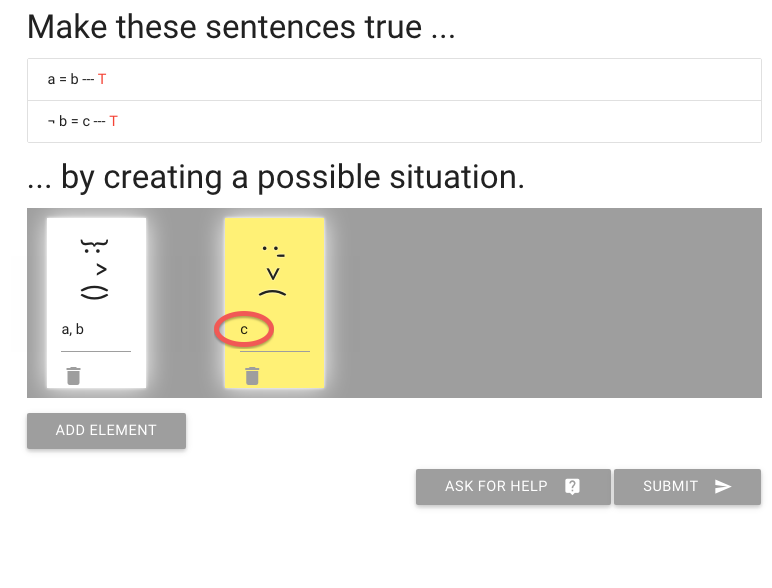

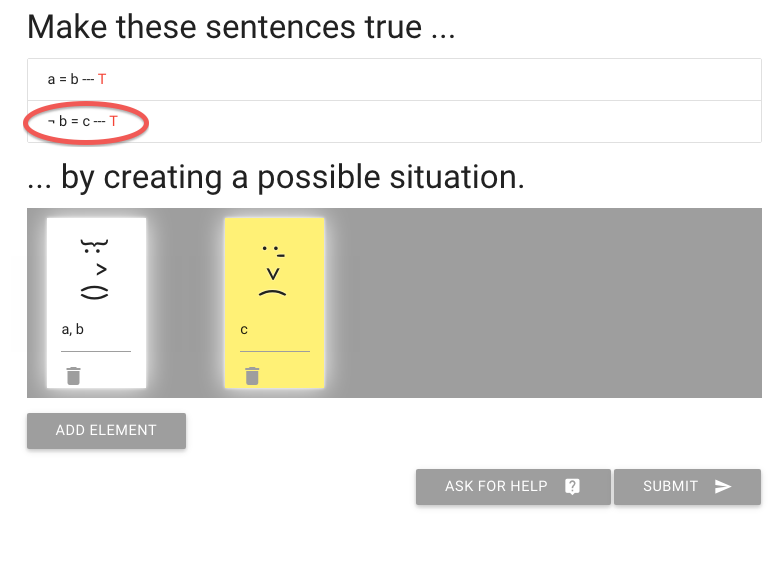

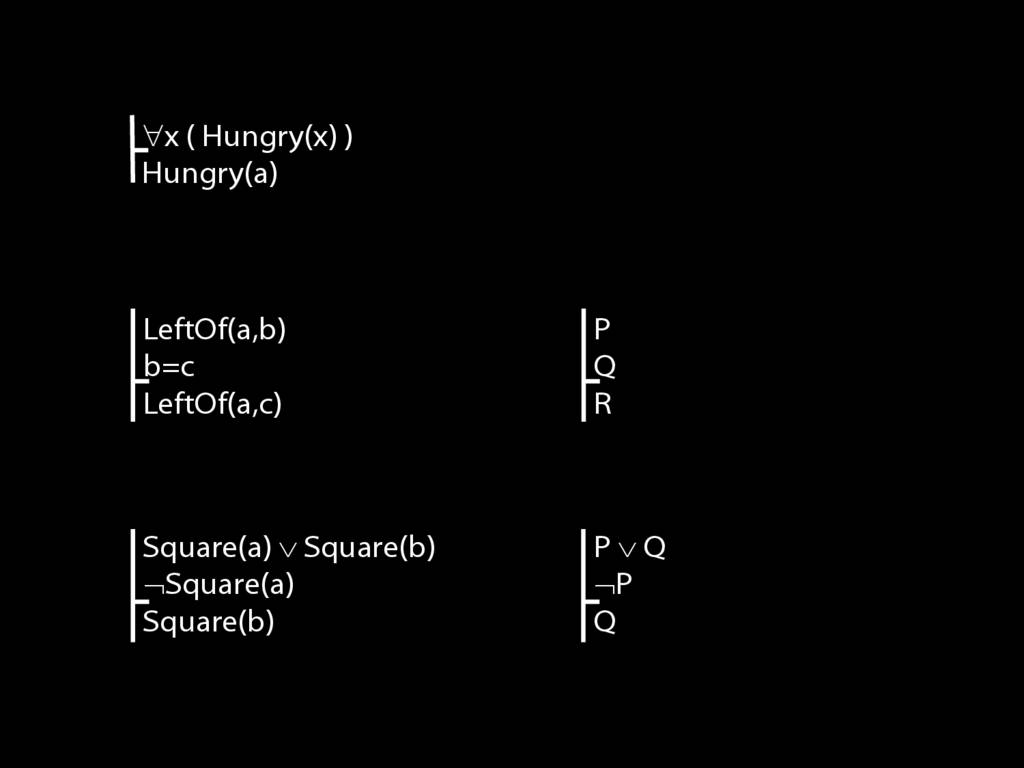

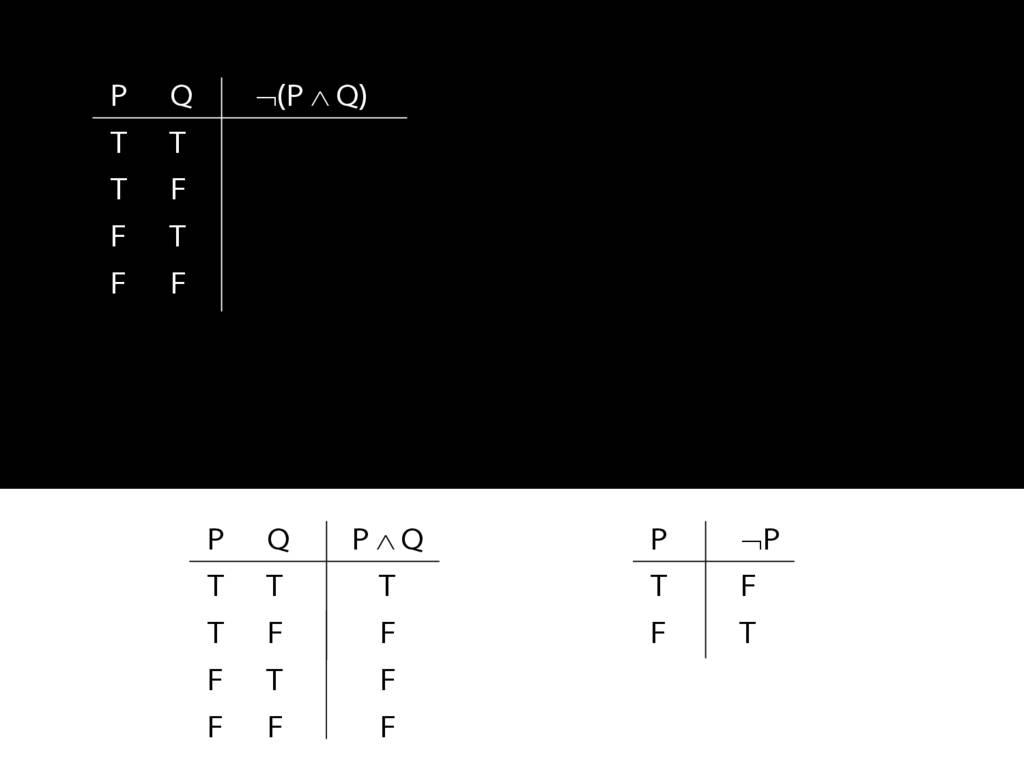

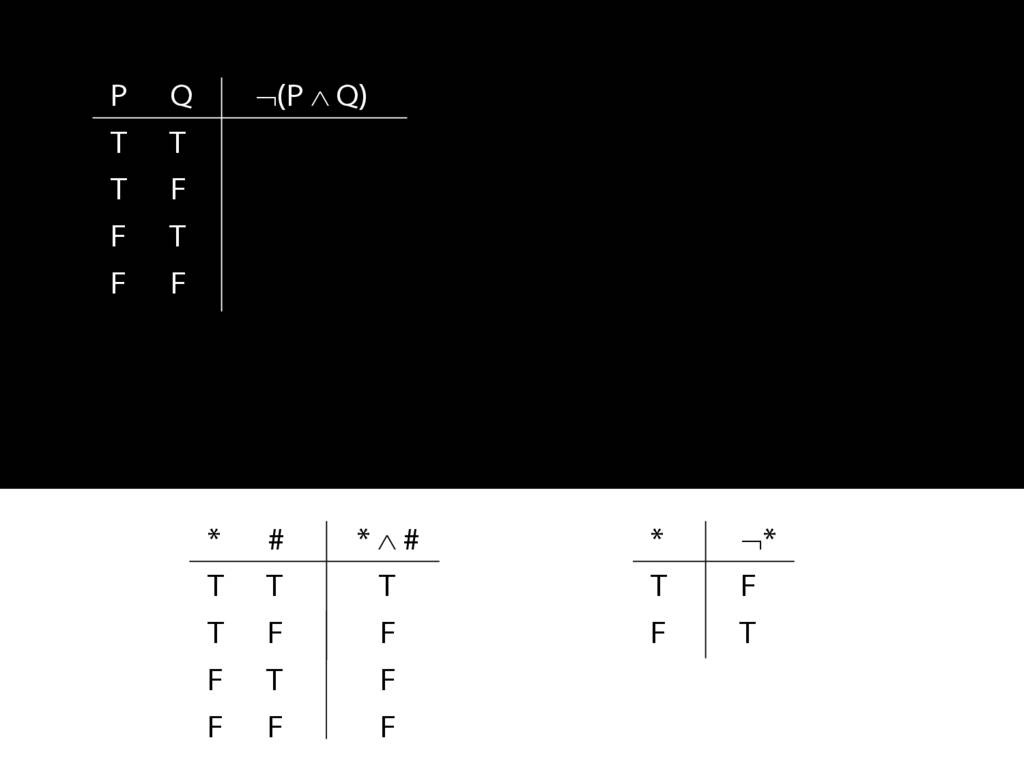

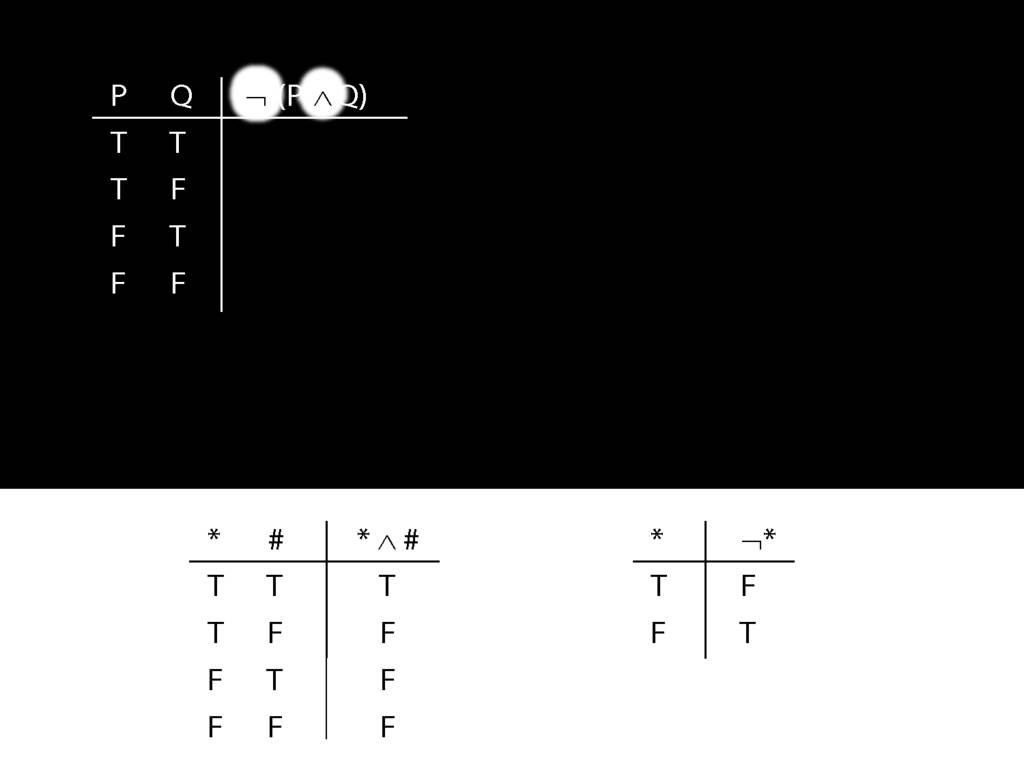

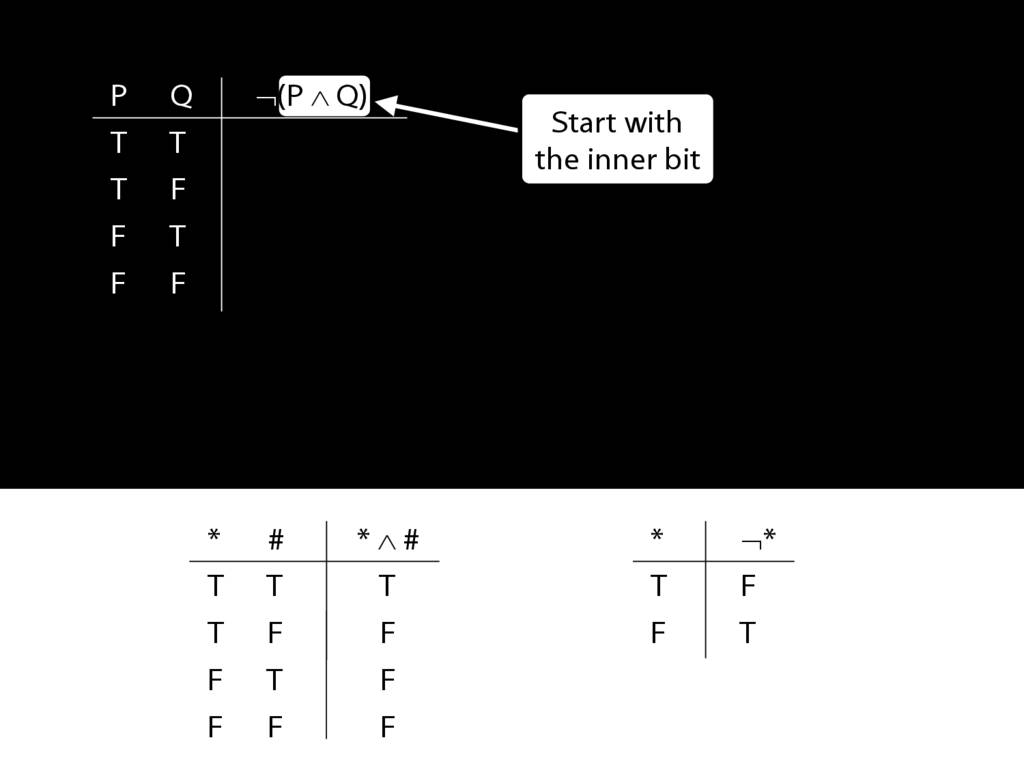

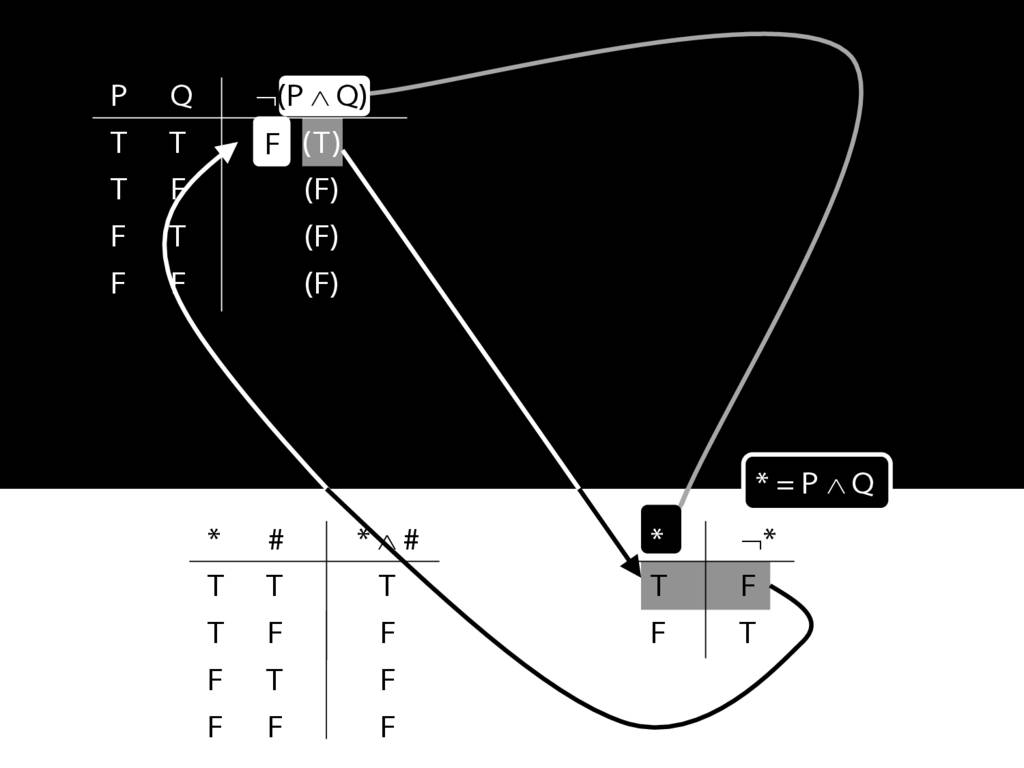

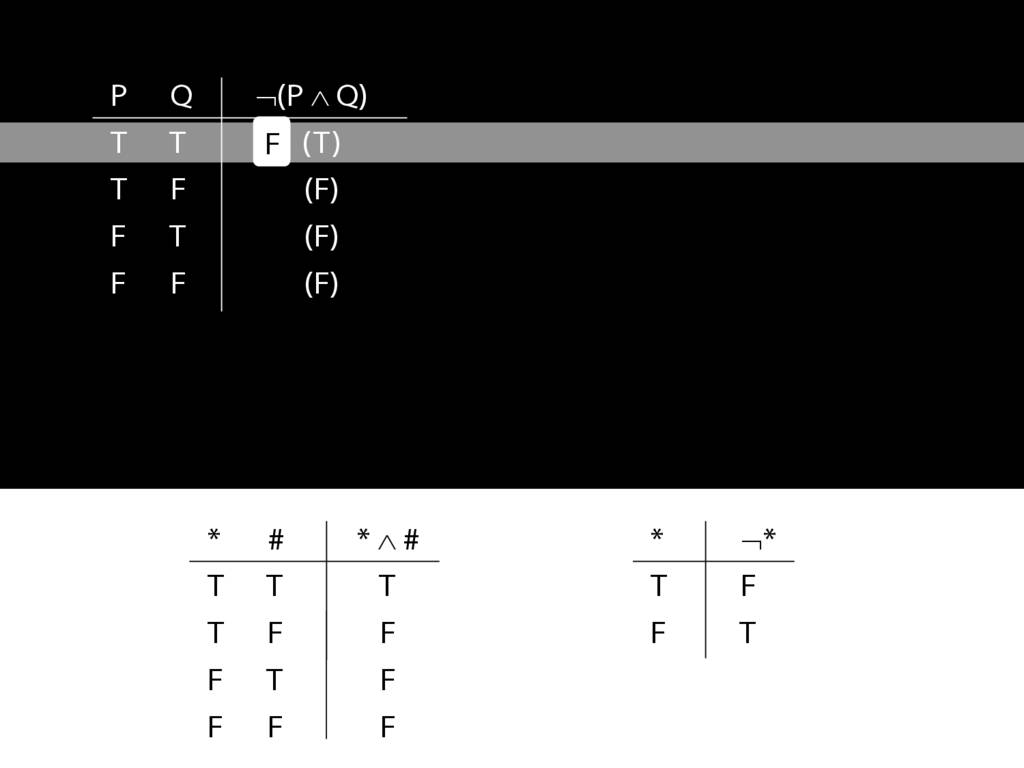

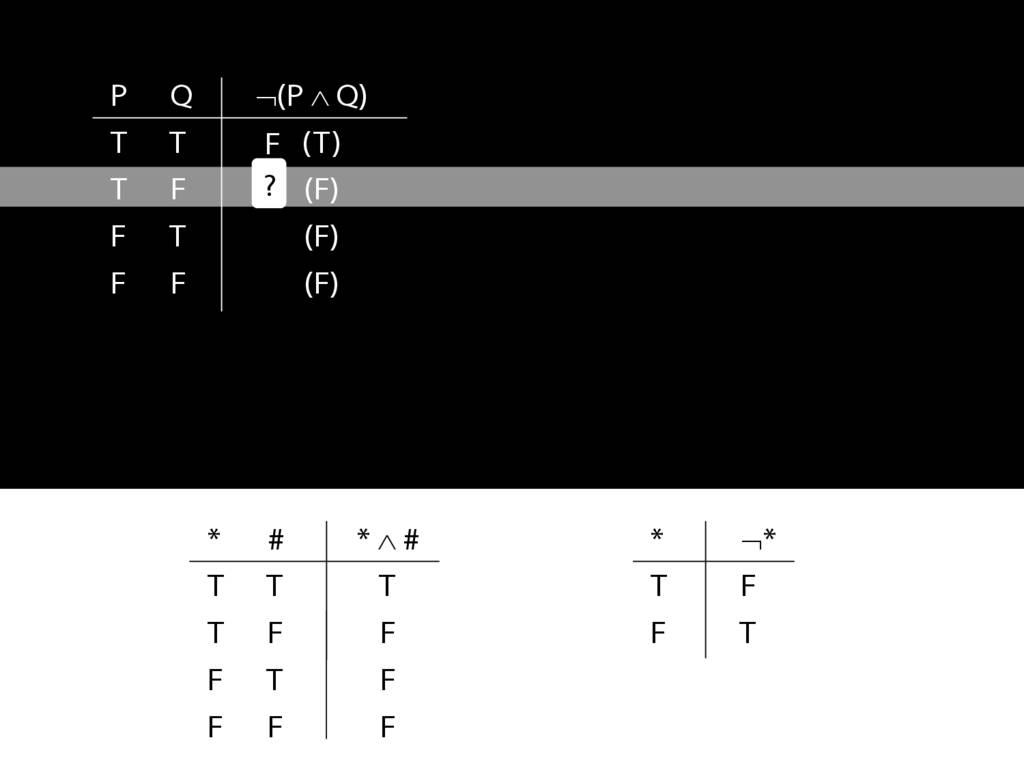

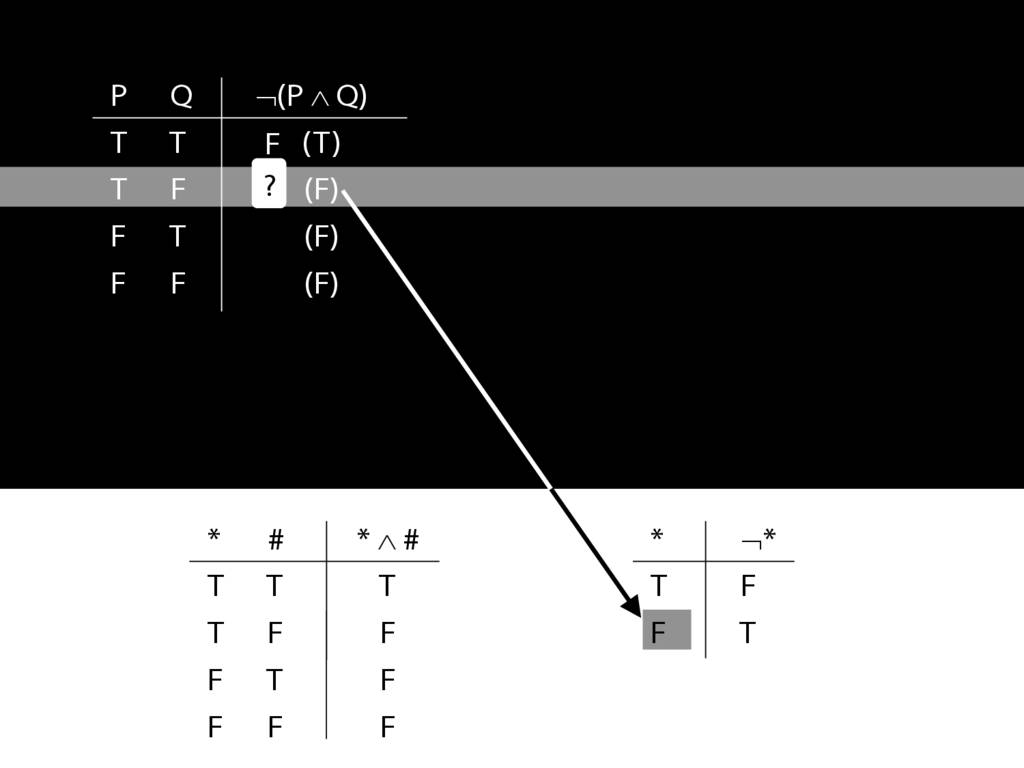

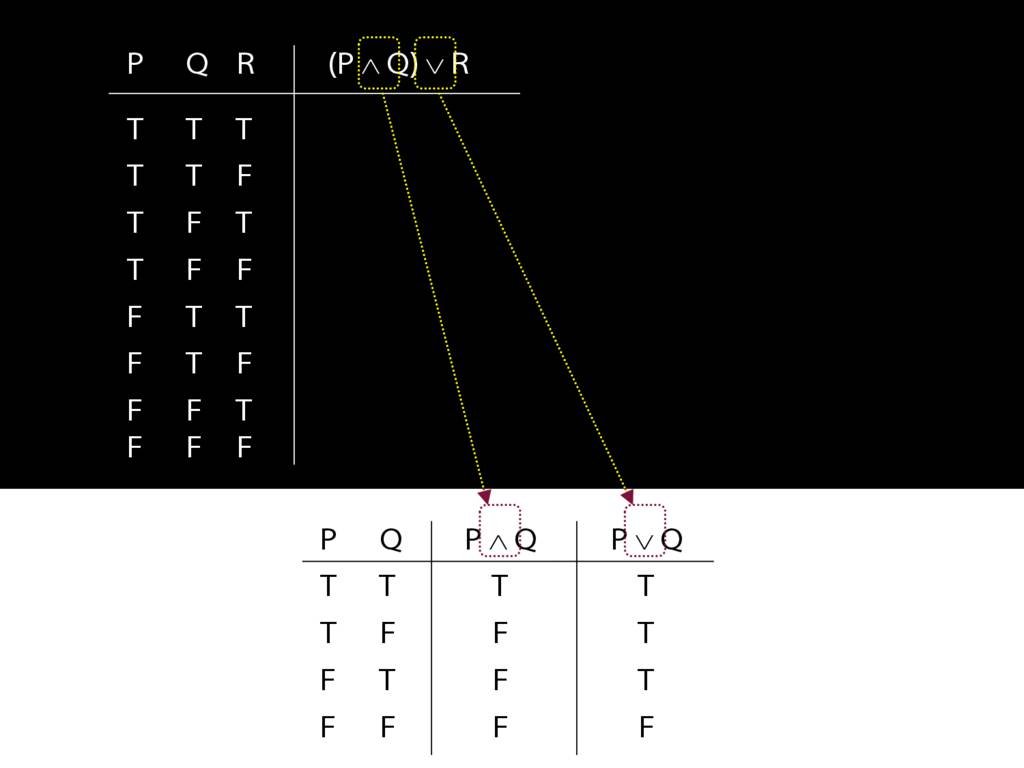

Now let's see how these sentences look in our formal language, awFOL.

Here's how the equivalent of 'John is square' looks in awFOL.

The whole thing is a sentence of awFOL.

The letter 'a' is a name; just like the English name 'John', the function of 'a' is to refer to an object (in this case, John).

And 'Square( )' is the predicate.

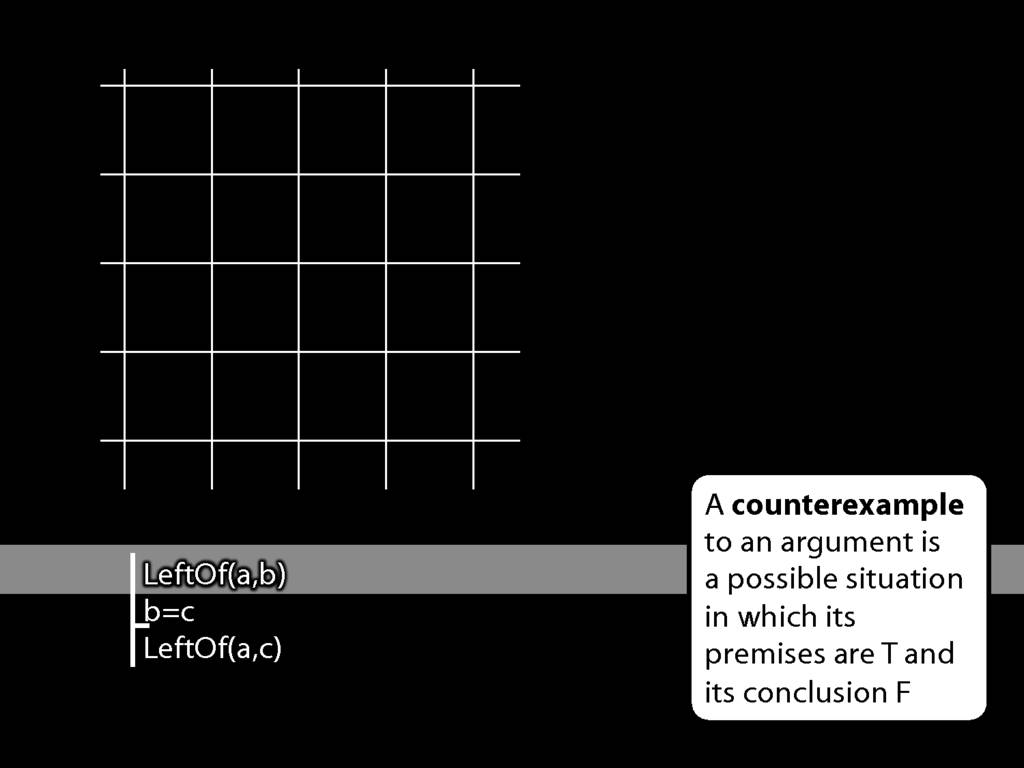

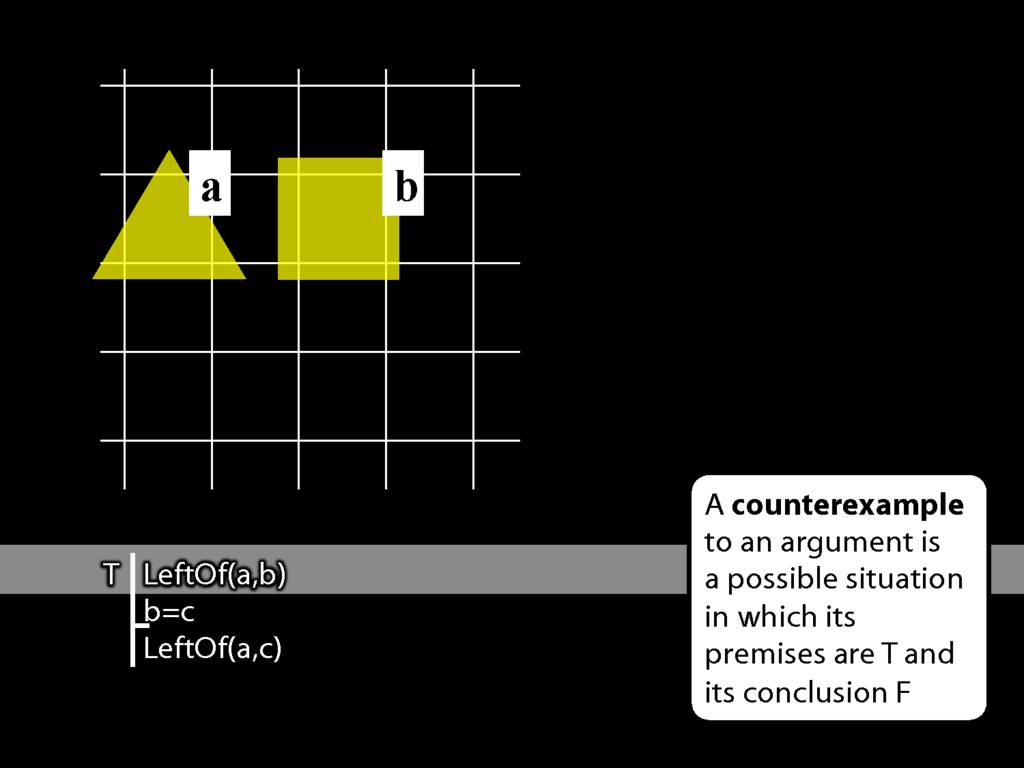

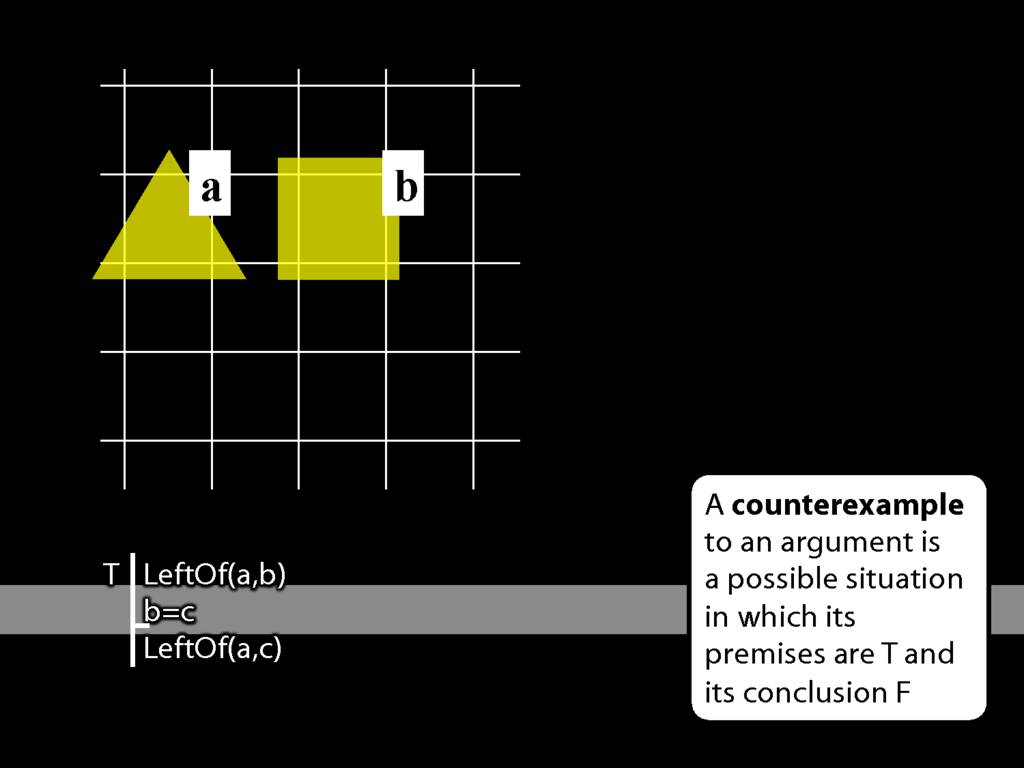

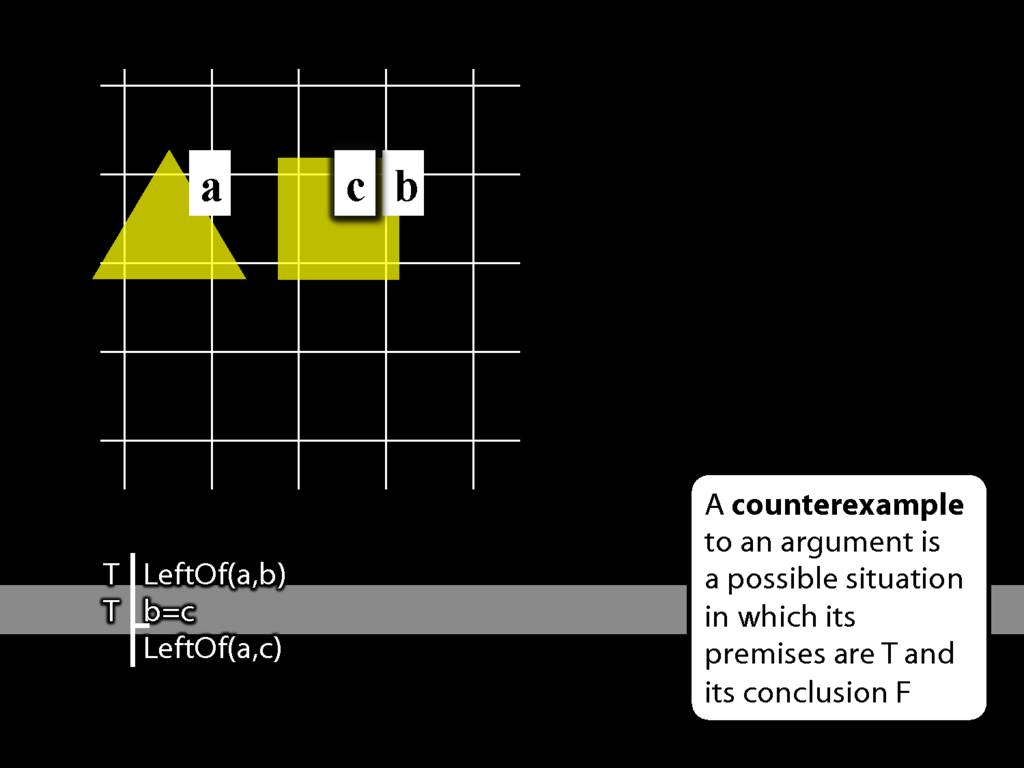

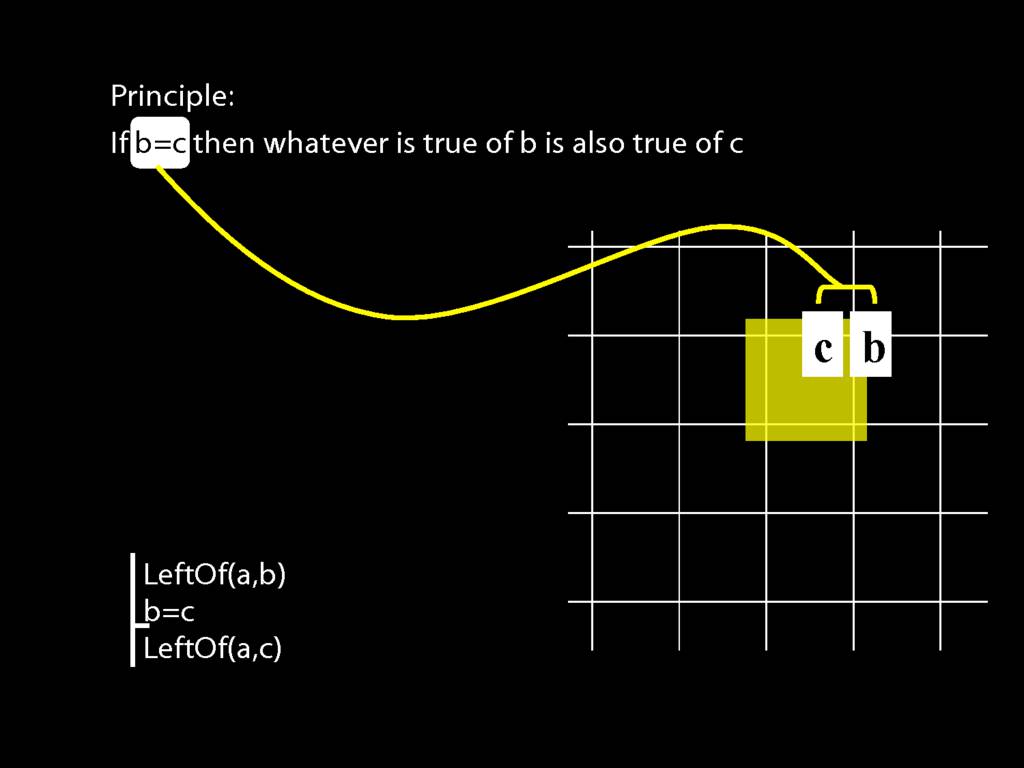

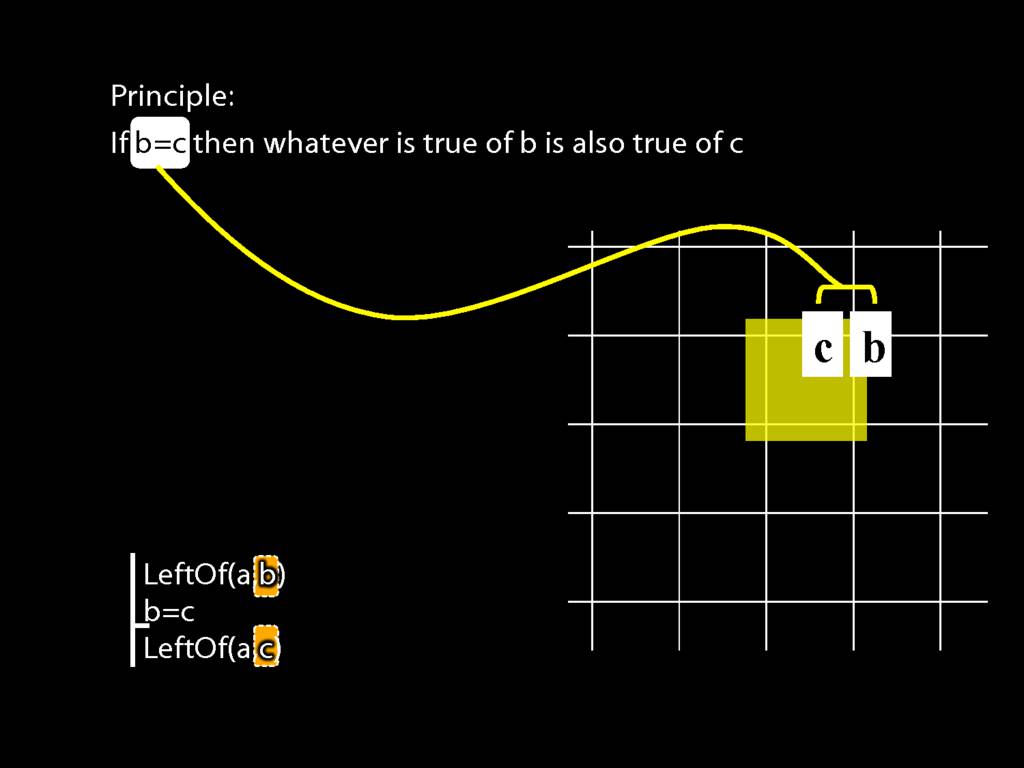

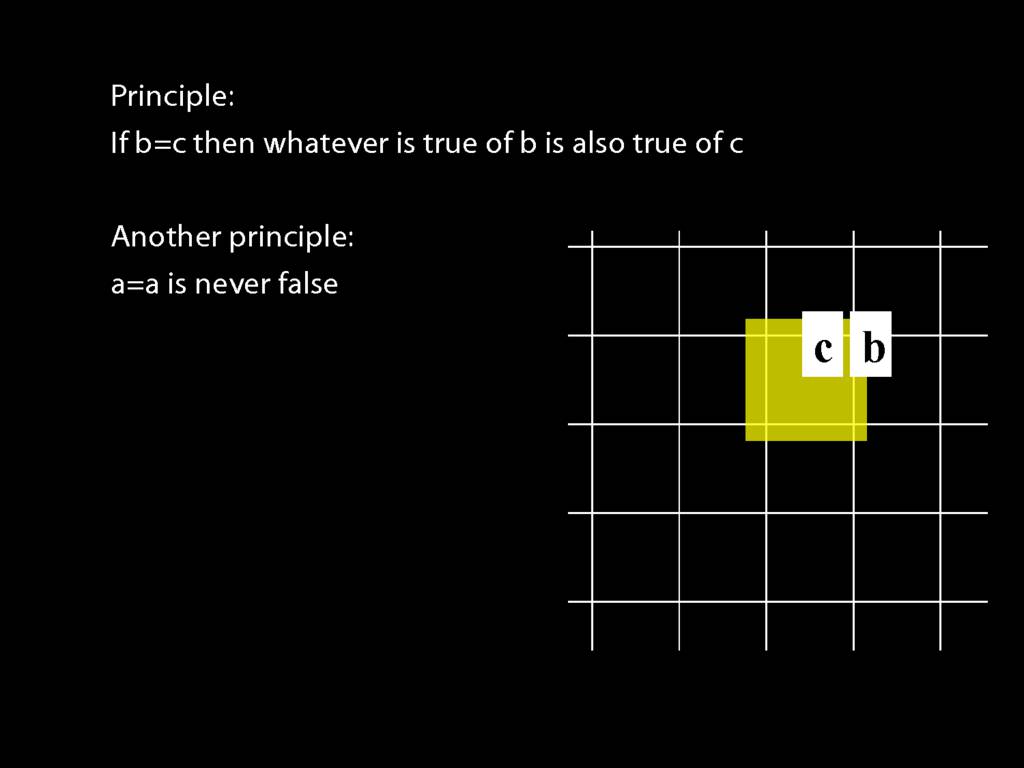

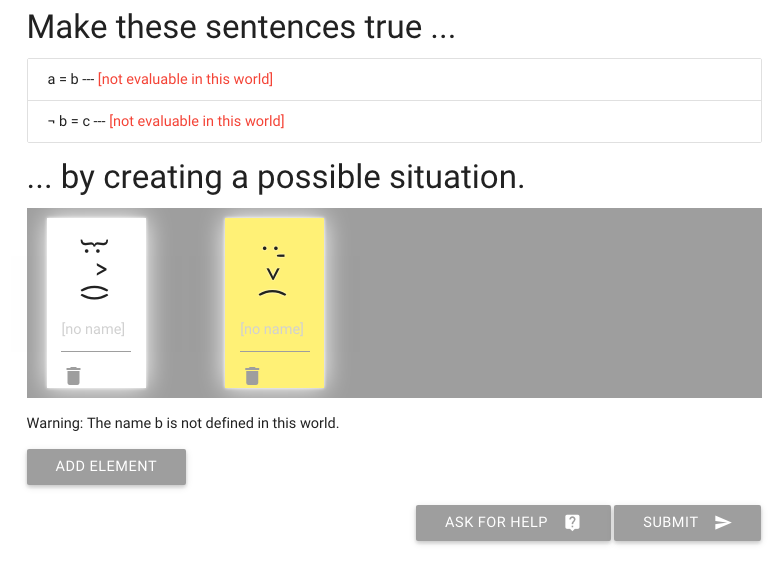

What about 'John is to the left of Ayesha'? How can we say something like this in awFOL?

Here's the equivalent of 'John is to the left of Ayesha' in awFOL.

Again, the single letters a and b are names.

And 'LeftOf( )' is the predicate.

Note that, as in English, the order of the names matters.

It affects who we are saying is to the left of who.
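
To make the point about order concrete, here is a minimal sketch in Python. It is not part of awFOL: the dictionary `world`, the positions in it, and the function `left_of` are all invented for illustration, assuming each name refers to an object with a horizontal position.

```python
# Hypothetical toy world: each name refers to an object with an x-position.
# The positions are made up purely for illustration.
world = {"a": 0, "b": 3}  # 'a' stands for John, 'b' for Ayesha


def left_of(first: str, second: str) -> bool:
    """True if the object named by `first` is to the left of the object named by `second`."""
    return world[first] < world[second]


print(left_of("a", "b"))  # corresponds to LeftOf( a , b )
print(left_of("b", "a"))  # corresponds to LeftOf( b , a )
```

Swapping the two names changes which claim is being made, so in this toy world the two calls give different answers.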

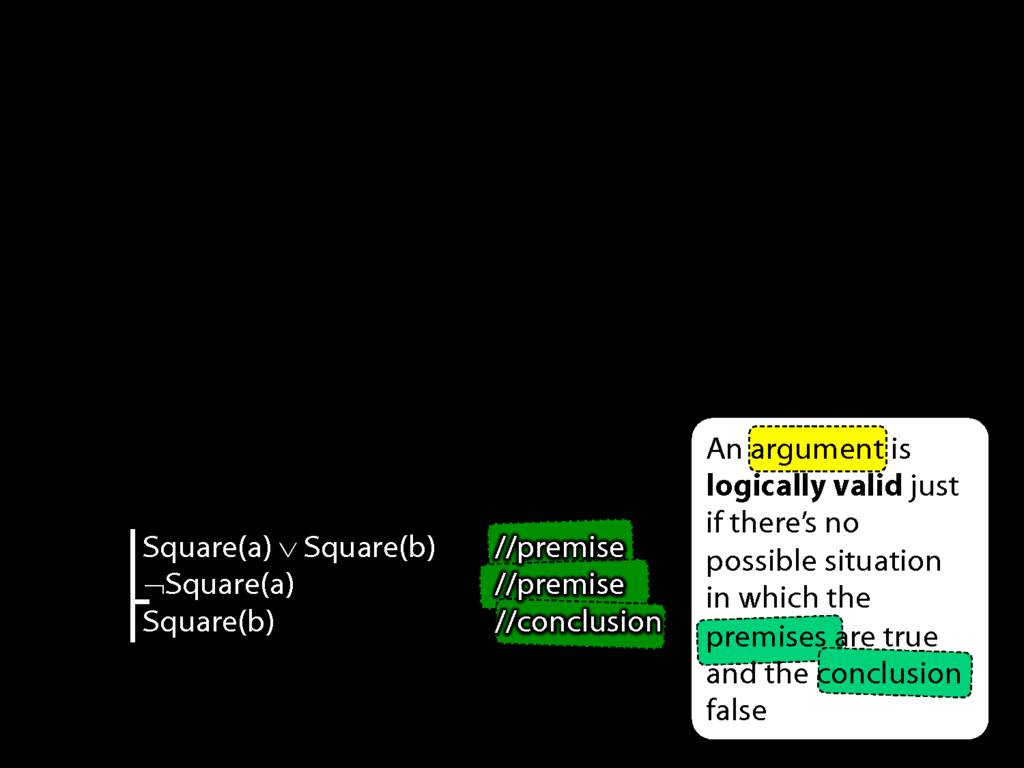

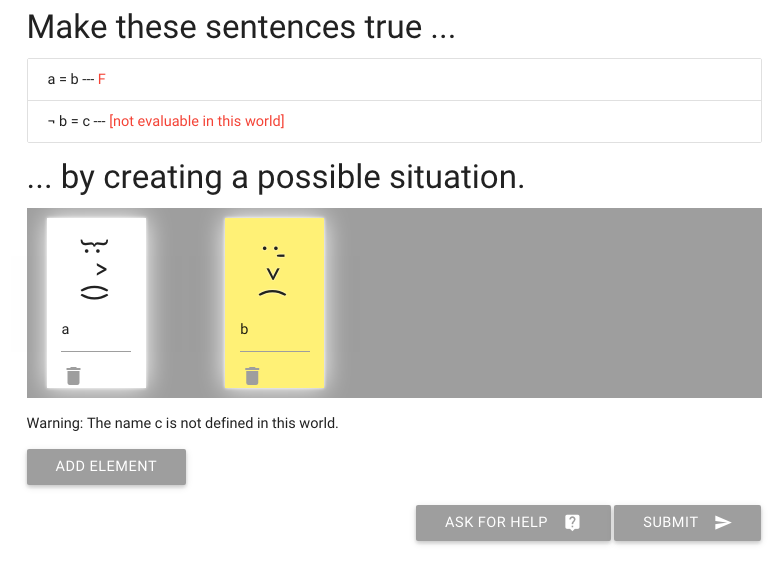

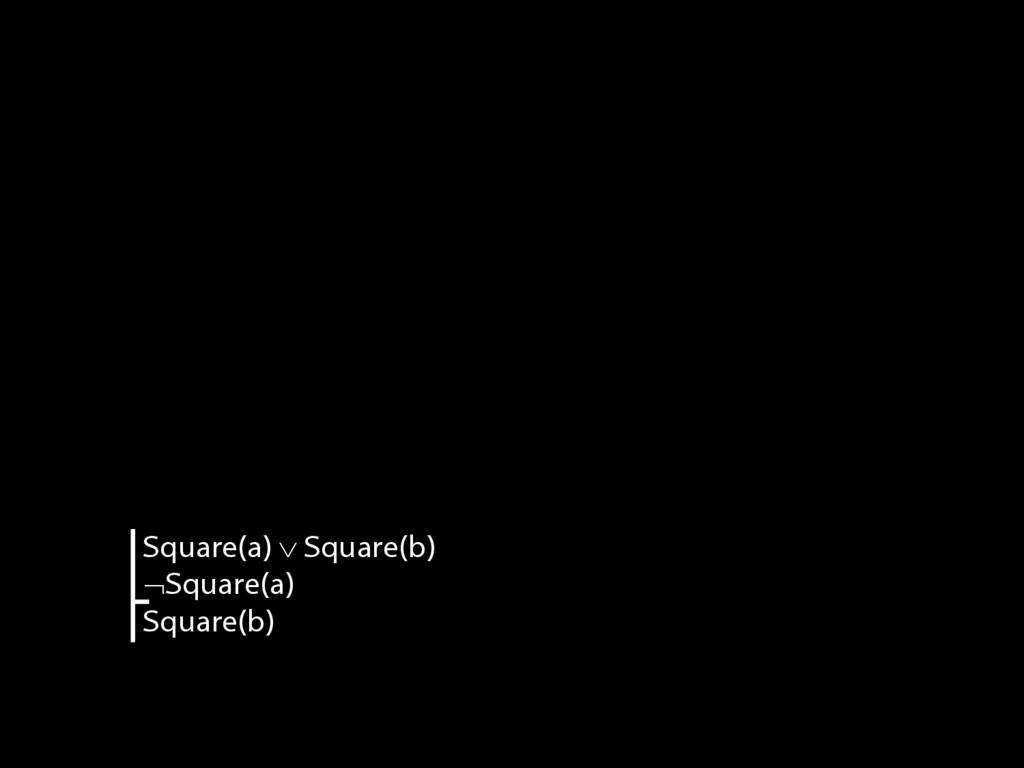

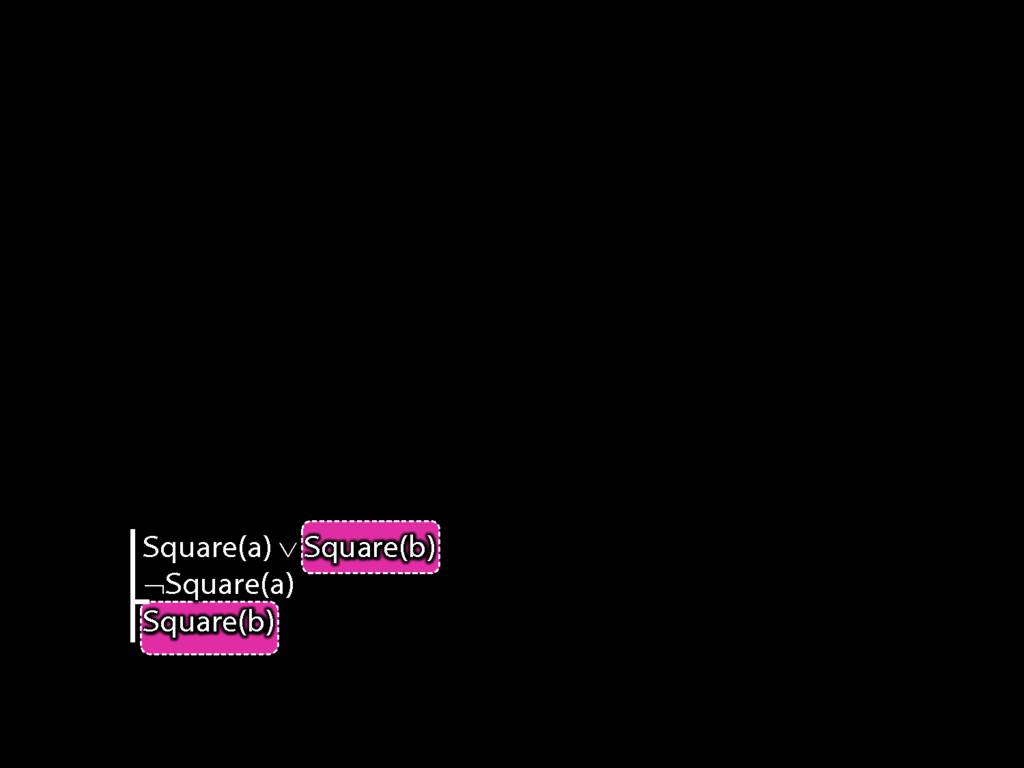

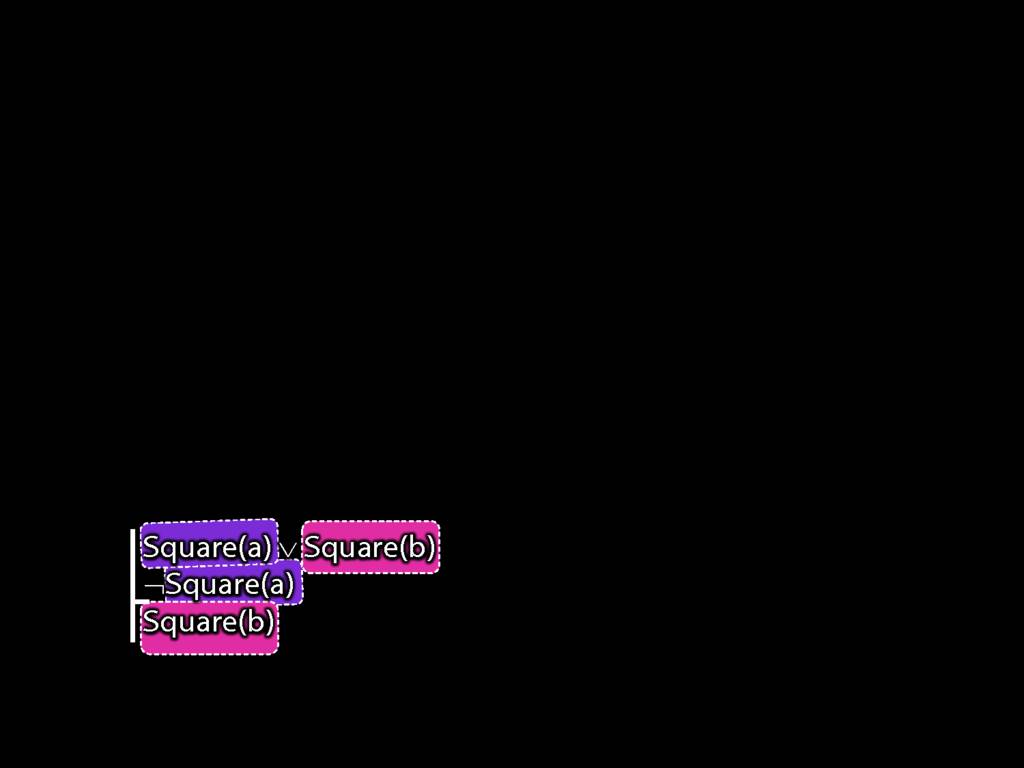

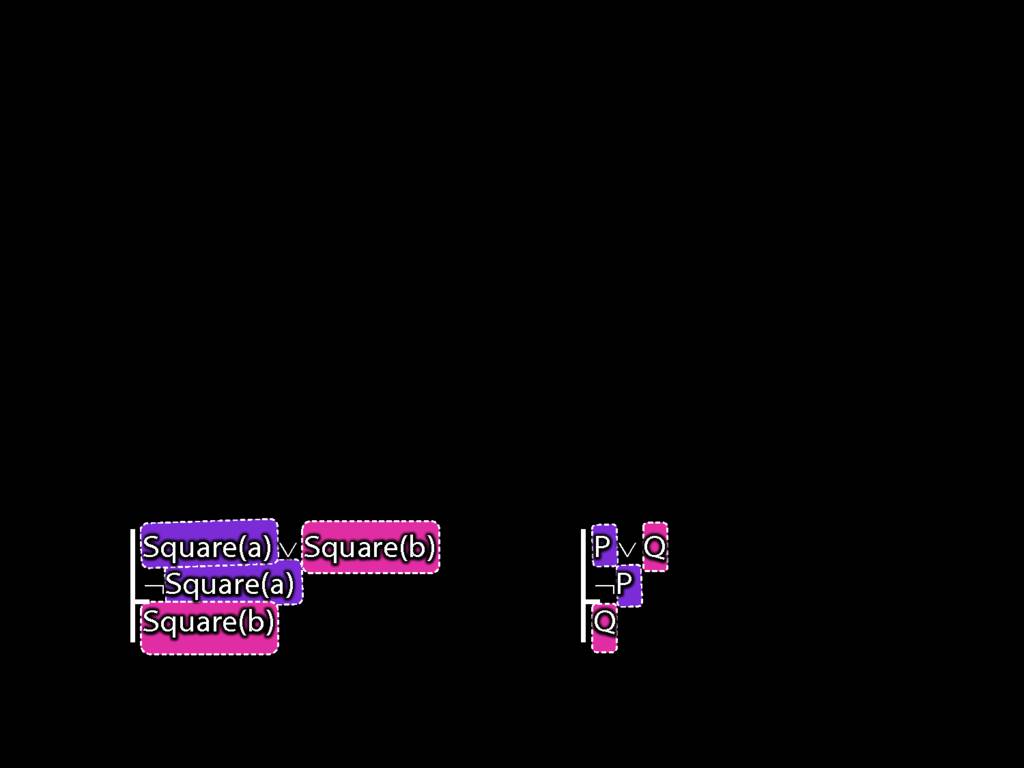

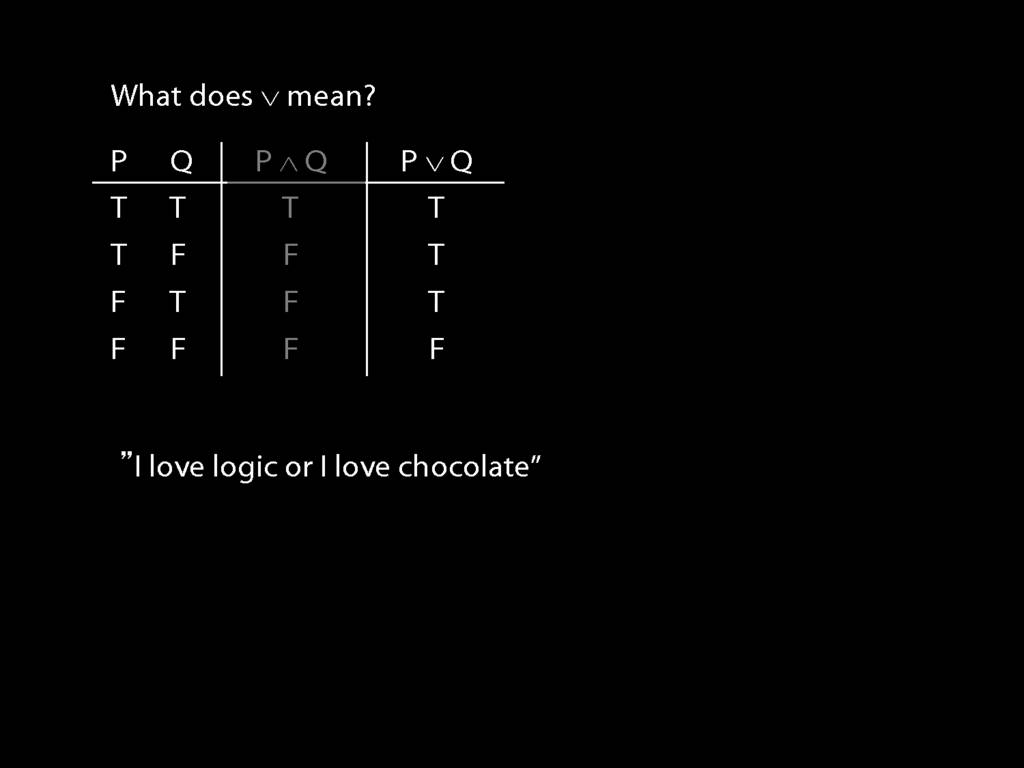

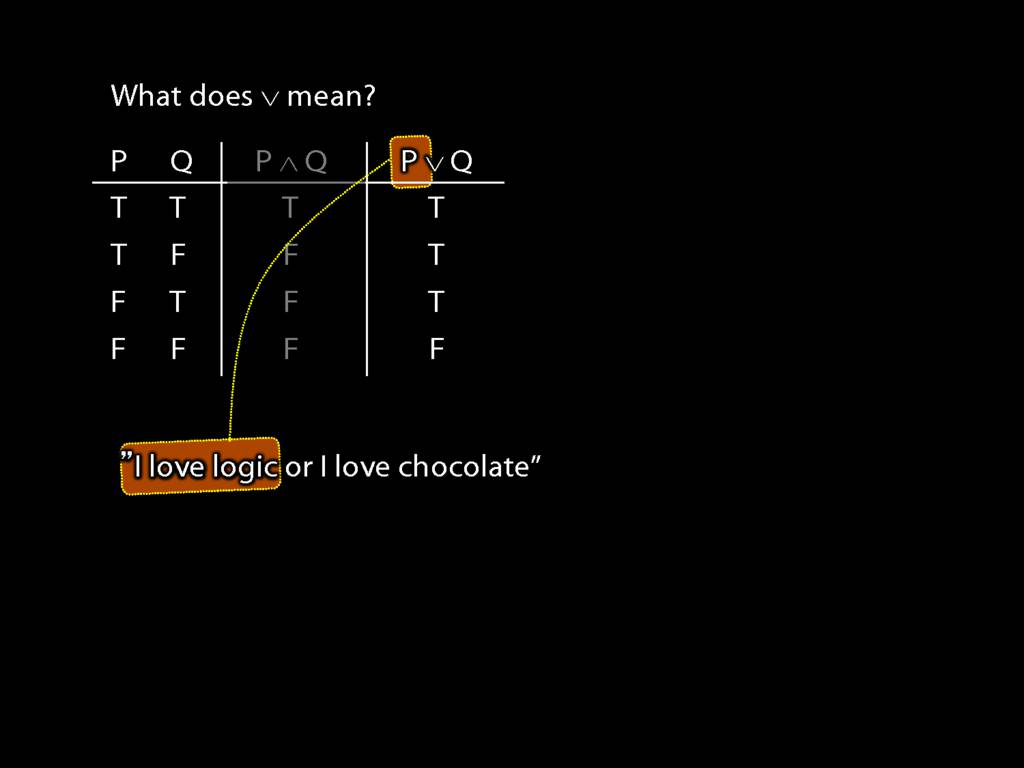

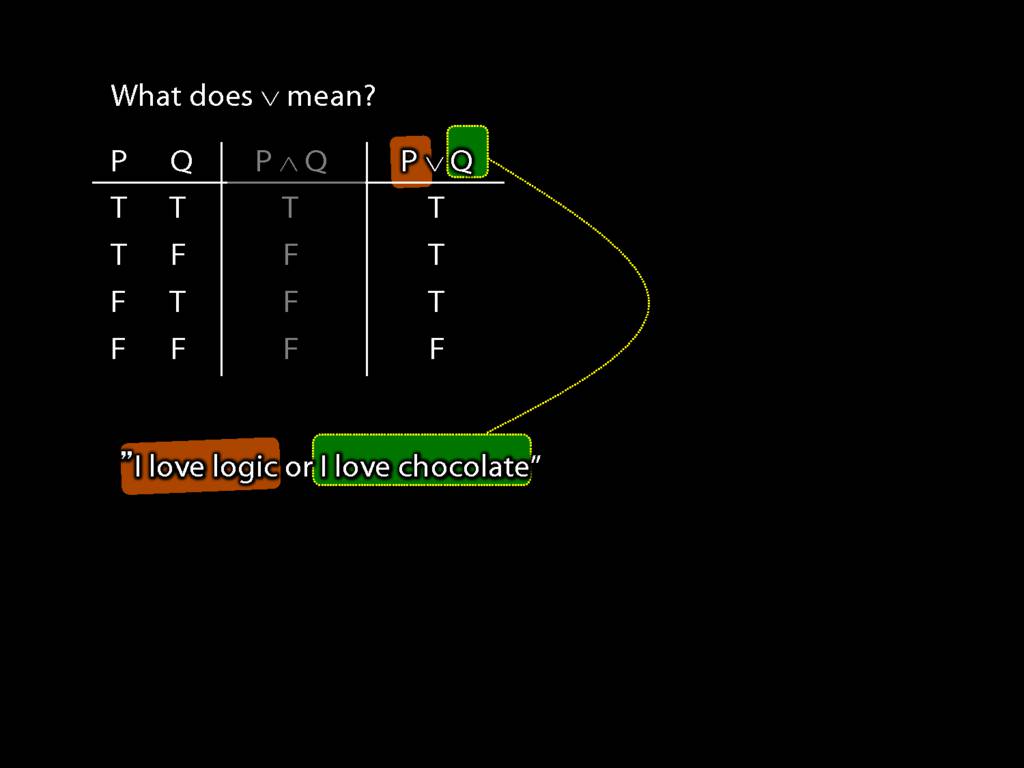

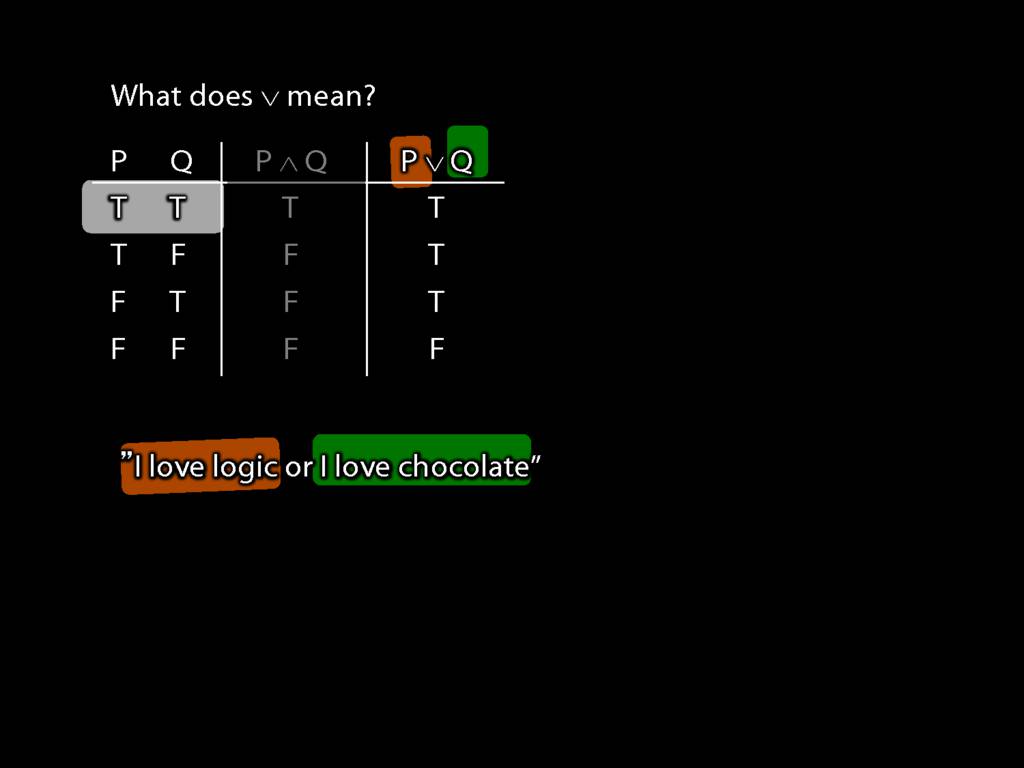

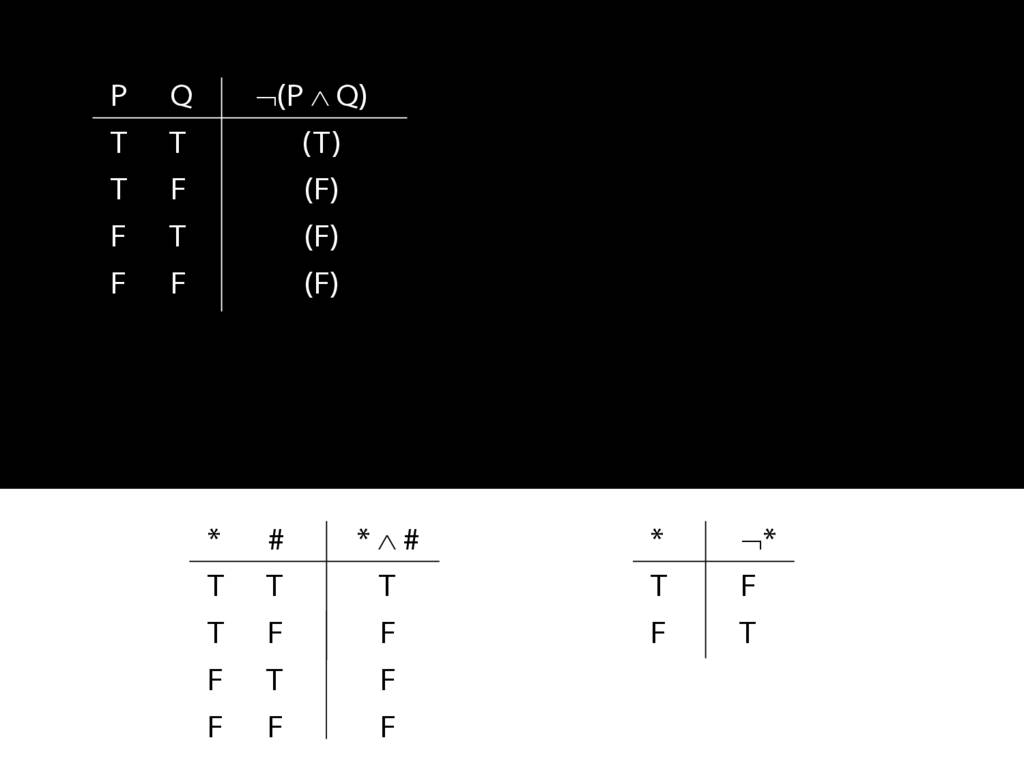

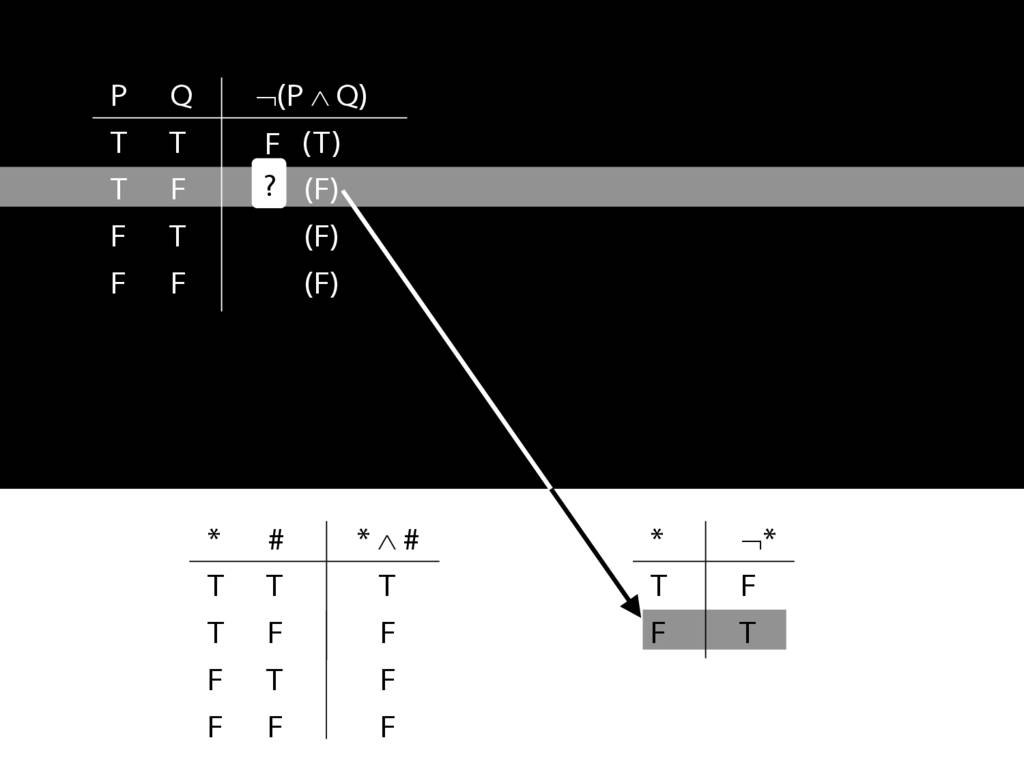

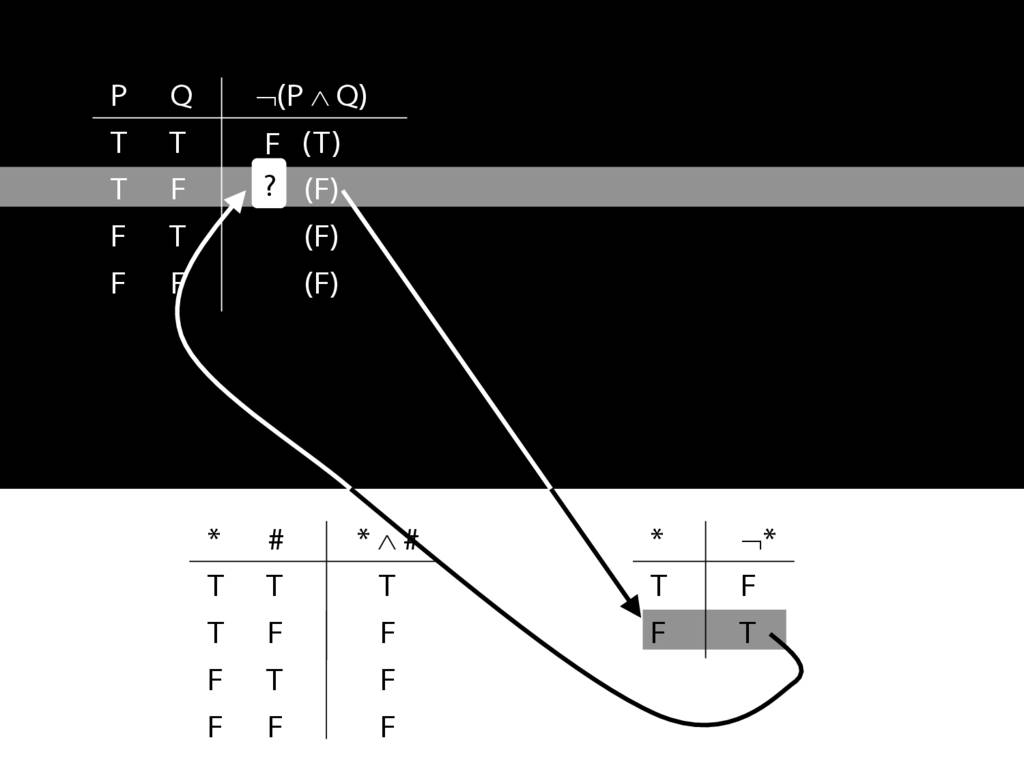

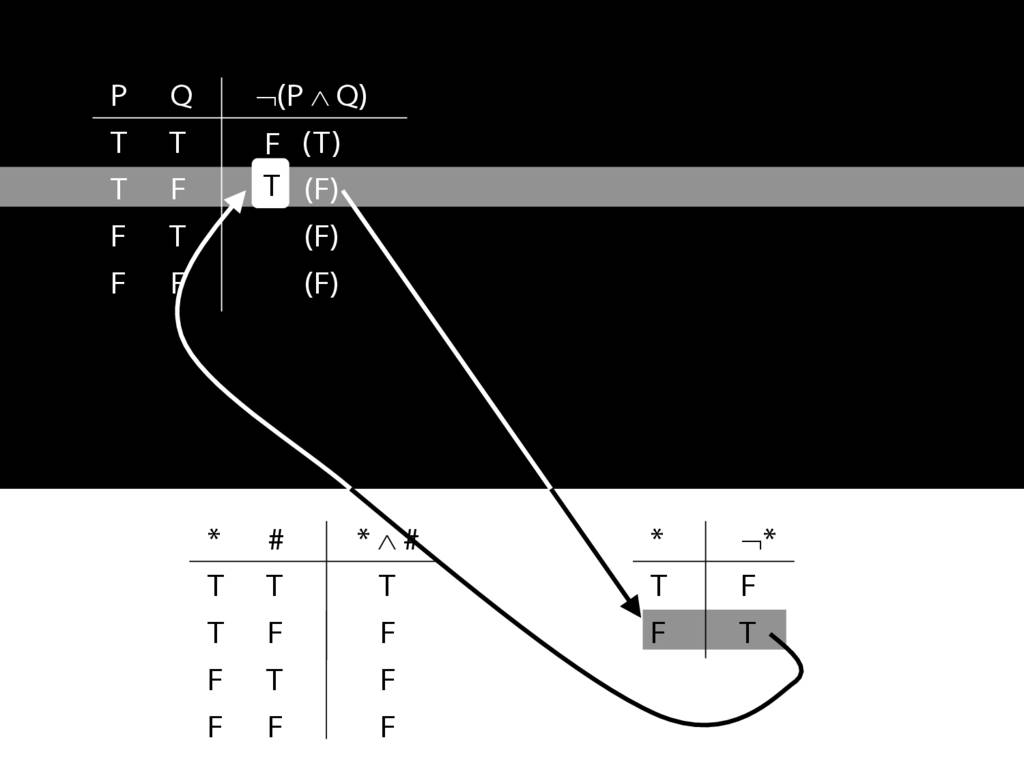

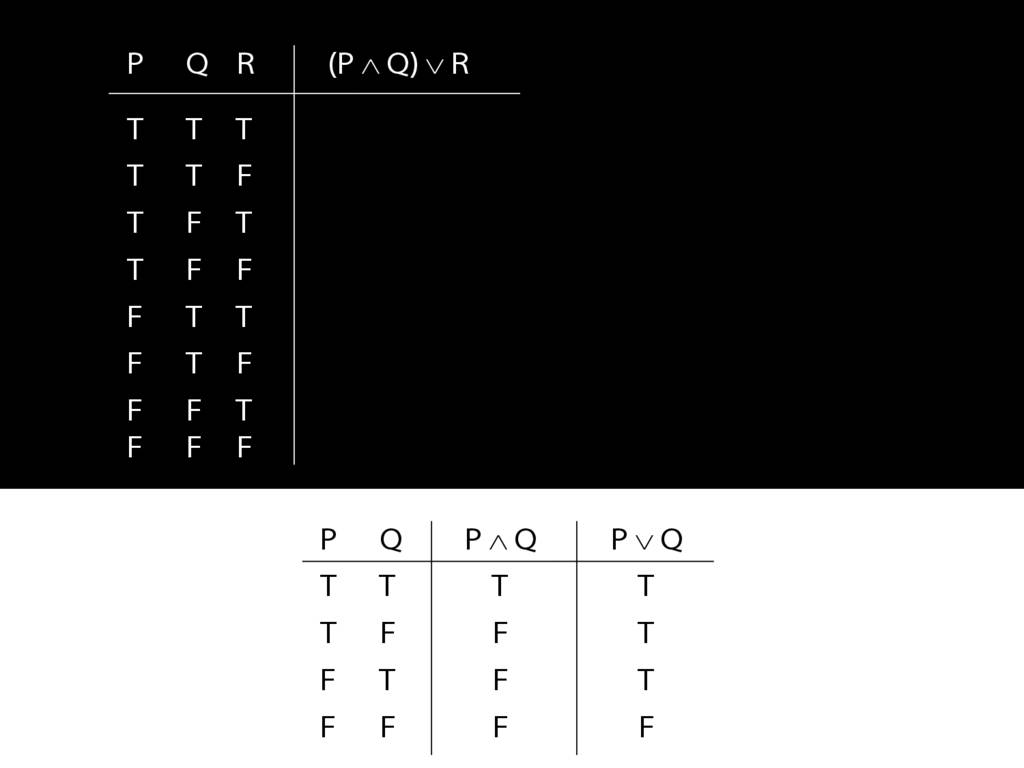

Lastly, what is the equivalent of the third sentence in awFOL?

Much as you would expect.

This is a non-atomic sentence (because it contains a connective).
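
One way to picture the atomic / non-atomic distinction is as a small data structure. The following is only a sketch under assumptions: the class names `Atom` and `Or` and the function `is_atomic` are hypothetical, and only one connective is modelled.

```python
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Atom:
    """An atomic sentence: a predicate applied to one or more names."""
    predicate: str
    names: Tuple[str, ...]


@dataclass(frozen=True)
class Or:
    """A non-atomic sentence: a connective joining two sentences."""
    left: "Sentence"
    right: "Sentence"


Sentence = Union[Atom, Or]


def is_atomic(sentence: Sentence) -> bool:
    """A sentence is atomic exactly when it contains no connective."""
    return isinstance(sentence, Atom)


# Square( a )
square_a = Atom("Square", ("a",))
# Square( a ) ∨ Trinagulra( b )
third = Or(square_a, Atom("Trinagulra", ("b",)))

print(is_atomic(square_a))
print(is_atomic(third))
```

The connective here takes two whole sentences as its parts, which mirrors the way 'or' joins two sentences to make a new one.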

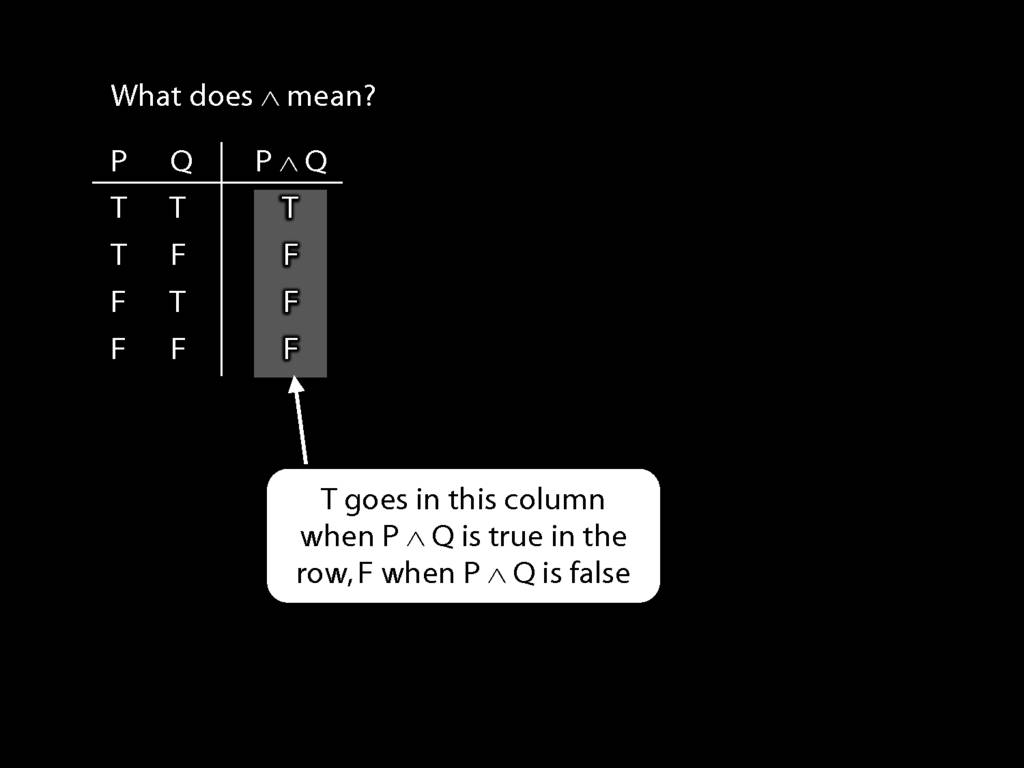

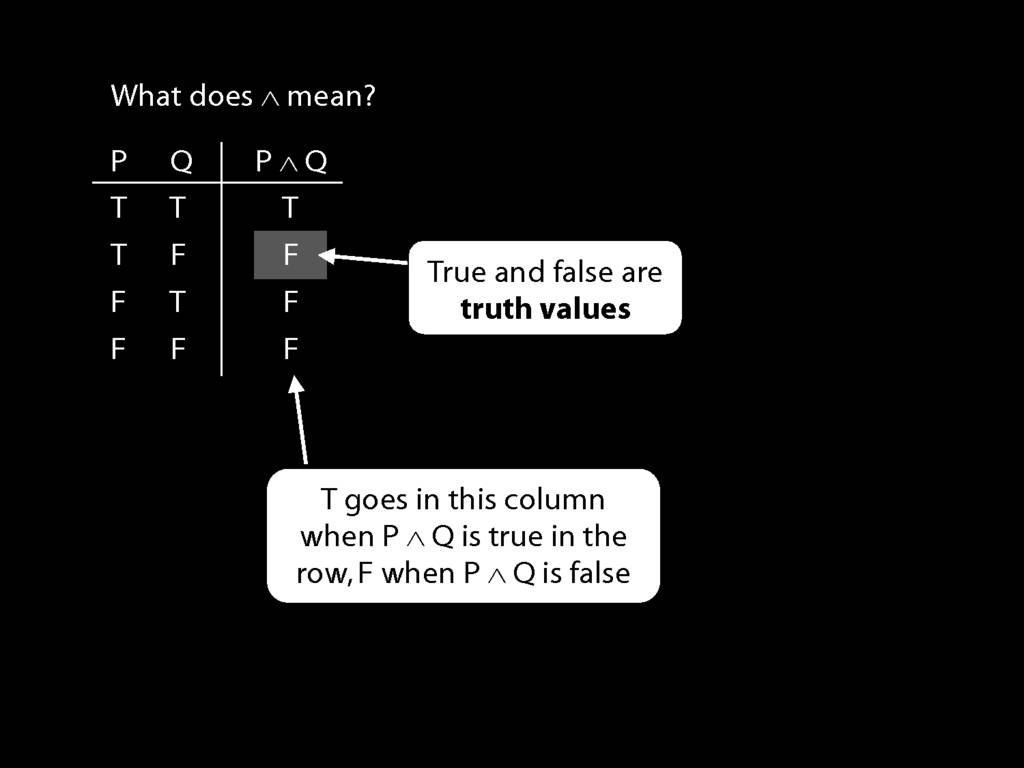

Note that where the English 'or' appears, we use a special symbol.

This symbol doesn't do exactly what the English 'or' does, as we'll see later.

Alles klar? Molto bene.

You might be thinking that this English sentence looks, well, ...

... a lot like this awFOL sentence. What's the point of learning a formal language?

How will it help us to understand logic?

(It's a bit tricky to answer this question as I haven't yet said what logic is.)